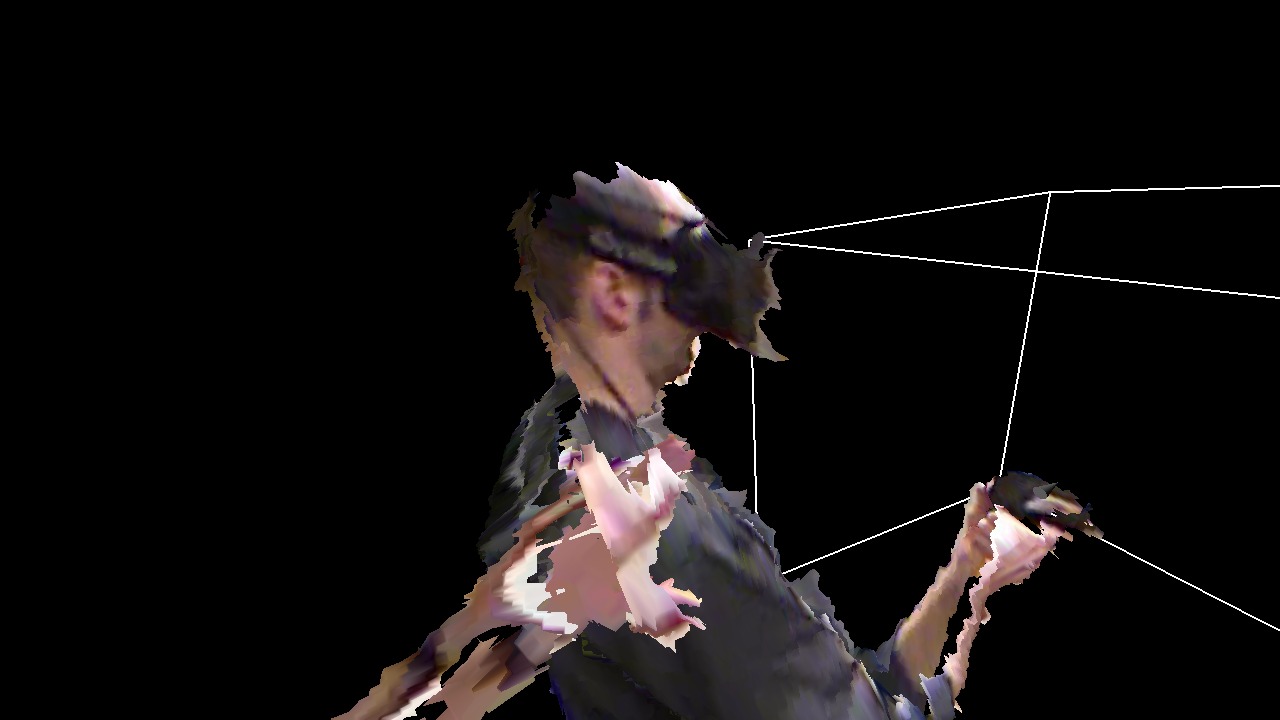

We had a couple of visitors from Intel this morning who wanted to see how we use the CAVE to visualize and analyze Big Data™. But I also wanted to show them some aspects of our 3D video / remote collaboration / tele-presence work. I had just recently implemented a new multi-camera calibration procedure for depth cameras (more on that in a future post), and the alignment between the three Kinects in the IDAV VR lab’s capture space is now better than it has ever been (including in my previous 3D Video Capture With Three Kinects video), so I figured I’d try something I hadn’t done before, namely remotely interacting with myself (see Figure 1).

Only in this case the interaction is not remote in a spatial sense (obviously!), but in a temporal sense. I did this in two stages:

In the first stage, I recorded myself using the Nanotech Construction Kit to construct a C-60 Buckminsterfullerene, using the 3D video capture space in IDAV’s VR lab. That space consists of an Oculus Rift DK1 with positional head tracking provided by an InterSense IS-900 tracking system, a hand-held input device tracked by the same system, and three first-generation Kinect cameras set up in a rough equilateral triangle around a center point (each Kinect is approximately 2m away from the center point so that tall users like myself can be captured from head to toe). Vrui’s built-in recording facility completely captures a user’s interactions with a VR application so that a captured session can be played back at a later time exactly as it happened (with some caveats for randomized or asynchronous applications). At the same time, the KinectViewer vislet recorded the incoming 3D video streams from the three Kinects into three pairs of (depth, color) files, while also showing them to me in real time to provide a VR “avatar.”

In the second stage, I played back the recording made in stage 1, and re-routed head tracking and input device data such that the head-mounted display worked as usual, meaning that I was free to walk around the capture space and observe the pre-recorded activity. The KinectViewer vislet was running double duty: it was playing back the three 3D video streams recorded in stage 1, while at the same time capturing live streams from the three Kinects, rendering them in real time, and saving them to a second set of 3D video files. At the same time, I also recorded my interactions with the pre-recorded session using Vrui’s recording facility a second time.

Because Vrui’s recording facility does not record the output generated from running a Vrui application (analogous to a WAV sound file), but the input that drove the Vrui application (analogous to a MIDI sound file), I was able to interact in real time with the pre-recorded session. Of course, doing so too early would have thrown off my pre-recorded self, as his interactions would no longer have matched the state of the program during playback, which is why I held off until the end, when my old self was done with his interactions.
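To make the WAV-versus-MIDI distinction a bit more concrete, here is a minimal Python sketch of input recording and replay. It is not Vrui’s actual recording code or API; `Event`, `ToyApp`, `run`, and `merge` are hypothetical names made up for illustration, and the “application” is just an atom counter. The assumption is that the application is deterministic, so feeding it the same inputs reproduces the same session, and live inputs can be mixed into the replay.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    frame: int   # frame index at which the input arrived
    device: str  # hypothetical device name, e.g. "recorded_wand"
    action: str  # hypothetical action: "add_atom" or "remove_atom"


@dataclass
class ToyApp:
    """Deterministic stand-in for a VR application: state depends only on inputs."""
    atoms: int = 0
    log: list = field(default_factory=list)

    def handle(self, event):
        if event.action == "add_atom":
            self.atoms += 1
        elif event.action == "remove_atom" and self.atoms > 0:
            self.atoms -= 1
        # Remember what the application state looked like after each input.
        self.log.append((event.frame, event.device, event.action, self.atoms))


def run(events, num_frames):
    """Drive a fresh ToyApp frame by frame with the given input events."""
    app = ToyApp()
    by_frame = {}
    for e in events:
        by_frame.setdefault(e.frame, []).append(e)
    for frame in range(num_frames):
        for e in by_frame.get(frame, []):
            app.handle(e)
    return app


def merge(recorded, live):
    """Mix recorded and live inputs; the stable sort keeps recorded events first per frame."""
    return sorted(recorded + live, key=lambda e: e.frame)


# Stage 1: the "recording" is just the list of inputs, not the rendered output.
recorded = [Event(f, "recorded_wand", "add_atom") for f in range(3)]
original = run(recorded, num_frames=5)

# Plain playback: same inputs, same session.
replayed = run(recorded, num_frames=5)

# Live input after the recorded user is done: the recorded part is undisturbed.
late = run(merge(recorded, [Event(4, "live_wand", "add_atom")]), num_frames=5)

# Live input in the middle: the recorded inputs now act on a different state.
early = run(merge(recorded, [Event(1, "live_wand", "remove_atom")]), num_frames=5)
recorded_part_of_early = [entry for entry in early.log if entry[1] == "recorded_wand"]

print(replayed.log == original.log)            # playback matches the original session
print(late.log[:3] == original.log)            # late interaction leaves the past intact
print(recorded_part_of_early == original.log)  # early interaction makes it diverge
```

In this toy version, the late interaction simply extends the session, while the early one changes the state that the recorded inputs are applied to, which is the same reason I waited until my old self was finished.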

Finally, to create the video embedded below, I ran the Nanotech Construction Kit yet again, this time using the recording data stream produced during stage 2 and all six 3D video streams recorded in stages 1 and 2, rerouted to a normal monoscopic 1280×720 display window for movie encoding and upload to YouTube. Finally finally, because I didn’t have a microphone at hand when I recorded stage 2, I recorded a narration audio track, removed horrible microphone noise via Audacity, and spliced it into the video stream. Here’s the final final result:

Now I really need to get going on a proper tele-collaboration video…

High five! Cool stuff

It seems so compelling to be able to see yourself in VR, especially in applications like this. Perhaps it makes less sense in a game with a different setting, where skeleton tracking could make more sense, but I’m still kind of excited about proper 3D recording. Ordinary stereo video feels awfully limited in comparison…

I tend to agree. In most games, your fuzzy scrawny holo-avatar would probably look out of place (think Gears of War). But until skeletal tracking becomes good and low-latency enough for self-representation, there might be a stop-gap application where your own body is presented only to yourself, to improve presence and reduce some aspects of simulator sickness, while a traditional avatar is presented to everyone else (in a multiplayer setting).

For scientific applications, and especially tele-collaboration, on the other hand, nothing I’ve seen comes even close to holo-avatars.

(just subscribing to the comments)

I’m anxiously waiting for the “future post” to see how you joined 3 Kinects with the Rift.
