“It can’t be comfortable or healthy to stare at a screen a few inches in front of your eyes.”

The popularity of Google Cardboard, and the upcoming commercial releases of the Oculus Rift, HTC Vive, and other modern head-mounted displays (HMDs) have raised interest in virtual reality and VR devices in parts of the population who have never been exposed to, or had reason to care about, VR before. Together with the fact that VR, as a medium, is fundamentally different from other media with which it often gets lumped in, such as 3D cinema or 3D TV, this leads to a number of common misunderstandings and frequently-asked questions. Therefore, I am planning to write a series of articles addressing these questions one at a time.

First up: How is it possible to see anything on a screen that is only a few inches in front of one’s face?

Short answer: In HMDs, there are lenses between the screens (or screen halves) and the viewer’s eyes to solve exactly this problem. These lenses project the screens out to a distance where they can be viewed comfortably (for example, in the Oculus Rift CV1, the screens are rumored to be projected to a distance of two meters). This also means that, if you need glasses or contact lenses to clearly see objects several meters away, you will need to wear your glasses or lenses in VR.

Now for the long answer.

As everybody knows, it is very hard or impossible to focus on things that are very close to one’s eyes. Pick up a piece of paper with some text on it, hold it one inch in front of one of your eyes, and try to read it. Unless you are severely near-sighted, it won’t work (that, by the way, is what near-sighted means: being able to focus on things that are very close). To understand why it doesn’t work, we need to understand how the eye focuses on objects at different distances. Figure 1 shows a (simplified) eye, looking at an object at three different distances.

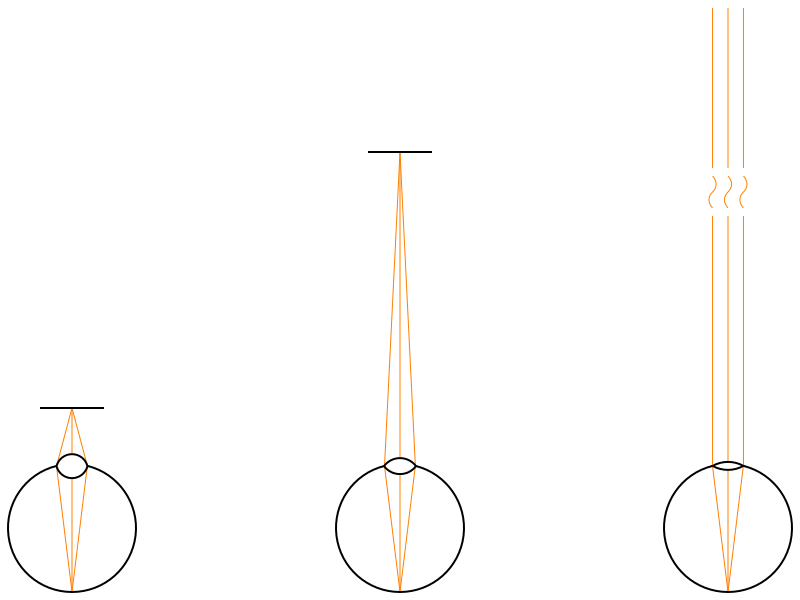

Figure 1: An eye focuses on objects at three different distances (near, medium, infinite). Depending on an object’s distance, light rays from that object diverge at different angles when reaching the eye. The eye counters that by accommodating, i.e., changing the shape of the lens.

In each setup, some light rays emitted or reflected from a point on the object enter the eye through the pupil and the lens at the front, and hit the retina in the back, where the light is detected by light-sensitive cells (rods and cones). To create a sharp image, all light entering the eye from a single point on the object must be focused on a single point on the retina. That is the job of the lens: it bends the incoming divergent rays of light such that they converge on a point on the retina.

The central observation is that the amount by which light rays from an object diverge at the eye’s lens depends on the distance from the object to the eye. If the object is close, the rays diverge at a large angle (see Figure 1, left); if the object is at a medium distance, the rays diverge less (see Figure 1, center); if the object is infinitely far away, the rays are parallel and do not diverge at all. In other words: the closer an object is to the eye, the more the eye’s lens has to bend the incoming light rays to form a sharp image on the retina.
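To put rough numbers on this, here is a minimal sketch that computes the angle between the two outermost rays from a single object point that still fit through the pupil. The 4 mm pupil diameter and the sample distances are assumptions chosen for illustration, not measurements.

```python
import math

def divergence_angle_deg(distance_m, pupil_diameter_m=0.004):
    """Full angle (in degrees) between the outermost rays from one object
    point that still enter a pupil of the given diameter (assumed 4 mm)."""
    if math.isinf(distance_m):
        return 0.0  # rays from an infinitely distant point are parallel
    return math.degrees(2.0 * math.atan(pupil_diameter_m / (2.0 * distance_m)))

# Illustrative distances only: near, medium, far, infinite.
for d in (0.05, 0.5, 5.0, math.inf):
    print(f"{d} m: {divergence_angle_deg(d):.3f} degrees")
```

The angle shrinks steadily as the object moves away and reaches zero at infinity, which is the trend Figure 1 illustrates.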

The human eye (and the eyes of all mammals, birds, and reptiles) achieves this by changing the shape of the lens, a process called accommodation. To focus on near-by objects, a ring of muscle around the lens (the ciliary muscle) contracts to make the lens thicker and rounder; to focus on far-away objects, the same muscle relaxes, and the lens flattens out, being pulled on by elastic ligaments connecting it to the rest of the eye.

But there is a limit to how much the ciliary muscle can compress the lens, and therefore there is a minimum distance on which the eye can focus. Any objects closer than that distance appear blurry. This minimum distance, the so-called near point, is around 4 inches (10cm) for children and young adults with normal vision, and increases with age.
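Optometrists usually express this limit in diopters, the reciprocal of the focus distance in meters. The following sketch, assuming an eye with normal distance vision and the 10cm near point quoted above, checks whether a given distance is within reach; the function names and the 5cm example distance are made up for illustration.

```python
def accommodation_demand_diopters(distance_m):
    """Extra focusing power (in diopters) that an eye with normal distance
    vision must add to focus on an object at distance_m."""
    return 1.0 / distance_m

def can_focus(distance_m, near_point_m=0.10):
    """True if the demand at distance_m does not exceed what the eye can
    deliver at its near point (assumed 10cm, as for a young adult)."""
    return (accommodation_demand_diopters(distance_m)
            <= accommodation_demand_diopters(near_point_m))

print(accommodation_demand_diopters(0.10))  # demand at the near point
print(can_focus(0.25))  # a book at reading distance
print(can_focus(0.05))  # a hypothetical screen 5cm from the eye
```

A screen 5cm away would demand twice the focusing power that is available at a 10cm near point, so it stays blurry.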

What this means is that, for most people, it would be impossible to focus on the screens of HMDs (unless the display is ridiculously large (source)). And even if it were possible, looking at very close objects for a long time can cause eye strain, as the ciliary muscles have to continuously squeeze the eyes’ lenses.

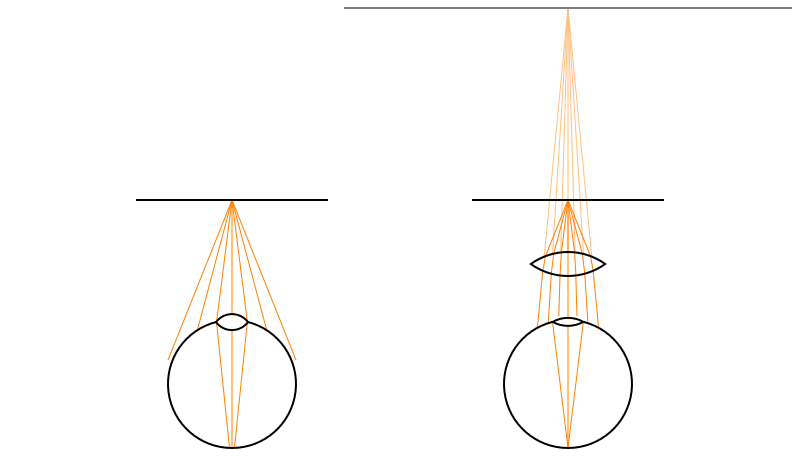

And that’s where the lenses in HMDs enter the picture. The left part of Figure 2 shows an eye looking at a very close object, in this case an HMD’s screen. The light rays from a point on the screen diverge so much that the eye’s lens can not converge them to a single point on the retina, even at maximum accommodation, meaning that the screen will appear blurry.

Figure 2: An eye failing to focus on a very close real screen, and being able to focus on the virtual image of the same real screen created by an interposed lens.

The right part of Figure 2 shows a screen at the same distance, but in this case, there is a lens between the screen and the eye. Just like the eye’s own lens, this intermediate lens bends light rays, and reduces the divergence angle of light from the screen to a point where the eye can focus it, and with less accommodation.

What does this look like from the eye’s point of view? We established earlier that the amount of divergence of light rays at the eye is directly related to the distance to the object from which the light is emitted. If we extend the light rays between the intermediate lens and the eye backwards (the fainter lines on the right of Figure 2), we find that they all intersect in a single point behind the real screen. To the eye, it looks exactly like a screen that is farther away and larger (the grey horizontal line on the right of Figure 2). This is called a virtual image.

And that’s why viewers can comfortably focus on the screens in HMDs: they are not looking at the real screens, which are too close, but at virtual images of the real screens, which are at a much larger distance. How much larger depends on the model of HMD: in the Oculus Rift DK1, for example, the virtual screens were infinitely far away; in the Oculus Rift DK2, they are about 4.5 feet (1.4m) away.
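For an ideal thin lens, the position of the virtual image follows from the thin lens equation, 1/f = 1/s_o + 1/s_i, where a negative s_i denotes a virtual image on the same side as the screen. The sketch below is an illustration under that idealization; the 40mm focal length is a made-up value, not the specification of any real headset, and distances are measured from the lens rather than from the eye.

```python
import math

def virtual_image_distance(focal_length_m, screen_distance_m):
    """Distance from an ideal thin lens to the virtual image of a screen
    placed at or inside the lens's focal length."""
    if math.isclose(screen_distance_m, focal_length_m):
        return math.inf  # screen in the focal plane: image at infinity
    if screen_distance_m > focal_length_m:
        raise ValueError("screen outside the focal length: image is real")
    return 1.0 / (1.0 / screen_distance_m - 1.0 / focal_length_m)

def screen_distance_for(focal_length_m, image_distance_m):
    """Lens-to-screen distance that puts the virtual image at image_distance_m."""
    if math.isinf(image_distance_m):
        return focal_length_m
    return 1.0 / (1.0 / focal_length_m + 1.0 / image_distance_m)

def magnification(focal_length_m, screen_distance_m):
    """How much larger the virtual image is than the real screen."""
    return virtual_image_distance(focal_length_m, screen_distance_m) / screen_distance_m

f = 0.040  # hypothetical focal length, for illustration only
s = screen_distance_for(f, 1.4)  # virtual screen 1.4m away, as quoted for the DK2
print(f"screen sits {s * 1000:.2f} mm from the lens")
print(f"virtual image is {magnification(f, s):.1f} times larger")
print(virtual_image_distance(f, f))  # screen in the focal plane, as in the DK1
```

Note how sensitive the result is: with these assumed numbers, moving the screen by about one millimeter shifts the virtual image from 1.4m out to infinity.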

Note: The above discussion assumes ideal lenses. The lenses used in modern HMDs are not ideal, and while that does not affect the core principle of virtual screens, there are side effects, specifically geometric distortion and chromatic aberration, which we will discuss in another post.

Hi Oliver,

thanks for explaining this so clearly.

Do you know if age changes anything to the comfort and health of the viewer?

I’m thinking children first, but elders too.

Cheers

Amaury

There’s some concern that using HMDs might negatively affect development of 3D vision in very young children, but it’s not directly related to the topics I’m discussing here. The issue is that HMDs don’t correctly simulate focus distance. No matter how far away a virtual object is, its image always appears at the optical distance of the virtual screen. This conflict between accommodation and all other depth cues might delay or hinder development of accommodation/vergence coupling.

Other than that, there isn’t really anything age-related I can think of.

Thanks

Why do we need children to develop the ability to deal with the real world when they are going to be living their lives in VR paradise?

(jokes)

Fair concern. And also great article.

Has there been any work with “dynamic lenses”? It seems theoretically possible that if you have a sealed bag of clear fluid that you could precisely morph it into lenses that have different focal points. Not sure if this would be useful/beneficial for HMDs, just wondering if there has been any research done in this area. Reading the article on ciliary muscles you pointed me to got me thinking along this vein (or artery, as the case may be).

Yes, research has been done and there’s at least one company selling (expensive!) eyeglasses using exactly this principle of deforming a bag of clear liquid:

https://adlens.com/technology/variable-power-optics/

http://www.zdnet.com/article/superfocus-the-ultimate-eyeglasses/

“in the Oculus Rift DK1, for example, the virtual screens were infinitely far away; in the Oculus Rift DK2, they are about 4.5 feet (1.4m) away.”

Please ELI5 how this works, given that in either HMD you can appear to look at objects that are much closer or much further than 4.5 feet away.

Repost of a comment I made on reddit:

The focus issue in VR is like this, in a nutshell. For the sake of discussion, assume an HMD where the virtual screen is at infinity (Rift DK1).

In short, you can still look at objects that are at different distances than your HMD’s virtual screen, but you will see them as blurry, because your eyes will have accommodated to the wrong distance. This is the one remaining fundamental difference between 3D vision in virtual reality and real reality. There are multiple potential solutions, near-eye light field displays being the most promising.
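To get a feeling for the size of the mismatch, here is a rough sketch that expresses it in diopters (one over the distance in meters). The function name and the example distances are made up for illustration; only the virtual screen distances come from the article.

```python
import math

def focus_mismatch_diopters(object_distance_m, virtual_screen_distance_m):
    """Difference between the focus distance that a virtual object's other
    depth cues suggest and the fixed focus distance of the virtual screen."""
    def diopters(distance_m):
        return 0.0 if math.isinf(distance_m) else 1.0 / distance_m
    return abs(diopters(object_distance_m) - diopters(virtual_screen_distance_m))

# Virtual object at arm's length (0.5m), virtual screen at infinity (Rift DK1).
print(focus_mismatch_diopters(0.5, math.inf))
# Same object, virtual screen at 1.4m (Rift DK2).
print(focus_mismatch_diopters(0.5, 1.4))
# Object exactly at the virtual screen's distance: no mismatch.
print(focus_mismatch_diopters(1.4, 1.4))
```

The mismatch grows quickly for close virtual objects and vanishes for objects at the virtual screen's distance.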

Has there been any research done on that old belief that TV screens and such are bad for your eyes?

And btw, how far are current HMDs from being bright enough to damage people’s eyes? If one suddenly went from full black to full white, how would it compare to an eye-safe laser?

Remember that the natural light of being outside is probably quite a bit brighter (and more radiation filled!) than anything an HMD can produce. They’re all photons. Their range, from full black to full white, is not actually that much, but it’s still perfectly satisfactory.

And by their range, I mean the range of the HMD, not the photons 🙂

For any interested parties, here is a description of a more complex (dual-lens) optic system for HMDs. In this particular case, it is for the OSVR HDK

http://www.roadtovr.com/sensics-ceo-yuval-boger-dual-element-optics-osvr-hdk-vr-headset/

Great post. Let’s hope that lightfield technology will allow for replacing the traditional LCD/OLED displays at some point in the future.

it’s pretty obvious this is going to hurt eyesight massively. just google myopia crisis asia– everyone who uses VR glasses now is going to go blind or near blind in 10 years. it’s ridiculous how much fake and damaging marketing hype is going in. Oculus is going to be sued massively in 10 years. shame everyone is blind by then :/

You sound concerned.

So I googled “myopia crisis asia,” and it’s a troubling phenomenon. There appear to be two hypotheses for the cause: lack of exposure to daylight during childhood (which may reduce the release of a hormone that may control eyeball growth), and recent increases in the amount of “near work,” i.e., too much focusing on close-by objects such as computer screens.

I’m not getting at all how you got from that to “VR is going to make everyone blind.” You might have to provide some research or at least a reasonable cause-and-effect chain.

If you had read and understood this very article, you’d know that current VR headsets (except Oculus Rift DK2) are focused at infinity, meaning they don’t trigger the second hypothesis. As for the first, one proposed remedy is to shine bright light into children’s eyes. Sort of like what VR headsets do.
