I wrote an article earlier this year in which I looked closely at the physical display resolution of VR headsets, measured in pixels/degree, and how that resolution changes across the field of view of a headset due to non-linear effects from tangent-space rendering and lens distortion. Unfortunately, back then I only did the analysis for the HTC Vive. In the meantime I got access to an Oculus Rift, and was able to extract the necessary raw data from it — after spending some significant effort, more on that later.

With these new results, it is time for a follow-up post where I re-create the HTC Vive graphs from the previous article with a new method that makes them easier to read, and where I compare display properties between the two main PC-based headsets. Without further ado, here are the goods.

HTC Vive

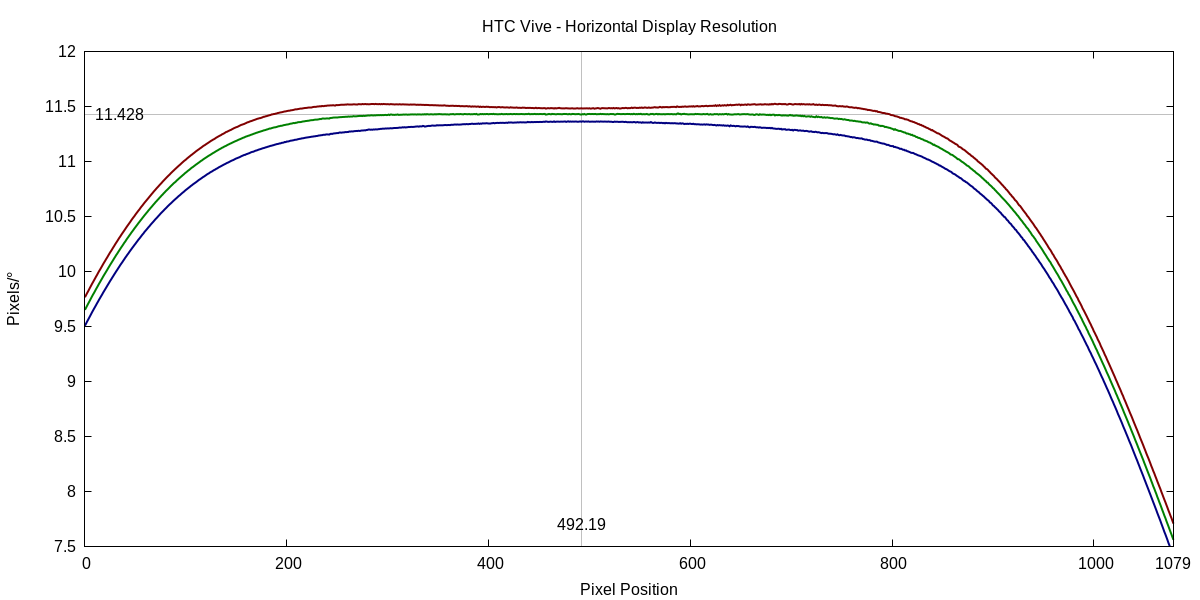

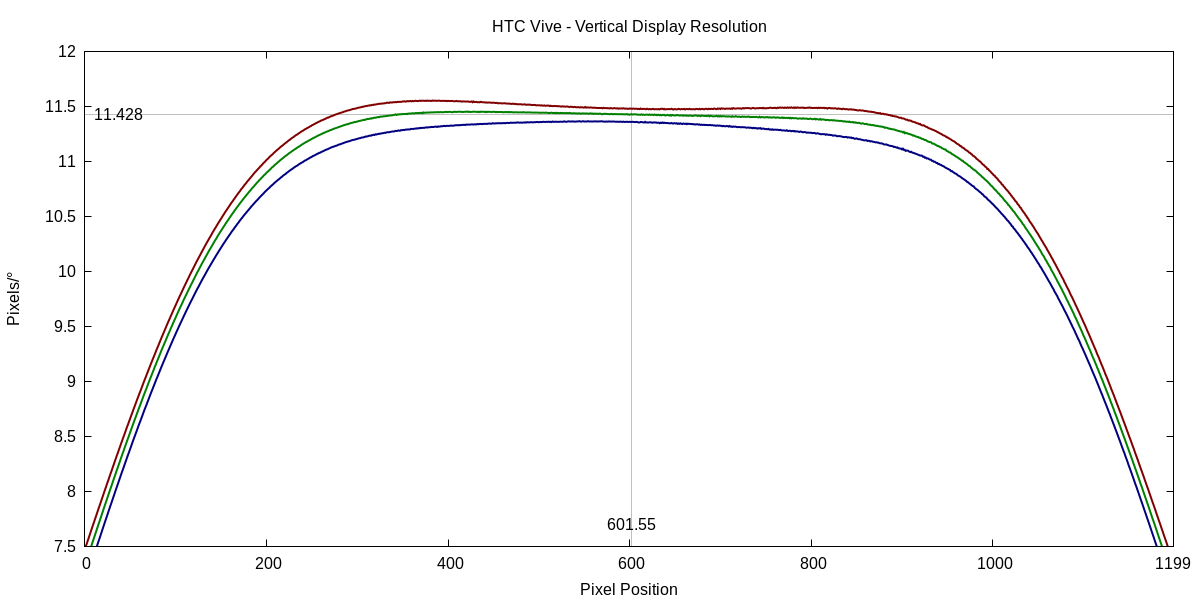

The first two figures, 1 and 2, show display resolution in pixels/°, on one horizontal and one vertical cross-section through the lens center of my Vive’s right display.

Figure 1: Display resolution in pixels/° along a horizontal line through the right display’s lens center of an HTC Vive.

Figure 2: Display resolution in pixels/° along a vertical line through the right display’s lens center of an HTC Vive.

What is obvious from these figures is that display resolution is not constant across the entire display, and that it differs between the primary colors. See the previous article for a detailed explanation of these observations. On the other hand, there is a large “flat area” around the lens center in which the resolution is more or less constant. Therefore, I propose the green-channel resolution at the exact center of the lens as a convenient single measure of the “true” resolution of a VR headset.

It is important to note that the shown resolution of the red and blue color channels is nominal resolution, i.e., the number of pixels per degree as rendered. Due to the Vive’s (and Rift’s) PenTile sub-pixel layout, the effective sub-pixel resolution of those two channels as displayed is lower by a factor of sqrt(2)/2=0.7071, as the red and blue channels use a pixel grid that is rotated by 45° with respect to the green channel’s pixel grid. (This is a simplification of the real difference, but going into that would require an entire other article. Let’s just say that Sampling Theory is complex and counter-intuitive and leave it at that for now.)
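As a small worked example of that simplified correction, the sketch below scales a nominal red or blue resolution by sqrt(2)/2. The function name and the input value are illustrative assumptions, not measurements read off the figures.

```python
import math

# Simplified PenTile correction from the text: the red and blue sub-pixel
# grids are rotated by 45 degrees relative to the green grid, so their
# effective resolution is the nominal (as-rendered) resolution times sqrt(2)/2.
PENTILE_FACTOR = math.sqrt(2.0) / 2.0  # about 0.7071


def effective_red_blue_resolution(nominal_ppd: float) -> float:
    """Return the effective pixels/degree of the red or blue channel."""
    return nominal_ppd * PENTILE_FACTOR


# Hypothetical nominal value, chosen only to illustrate the scaling.
example_nominal = 11.0
example_effective = effective_red_blue_resolution(example_nominal)
print(f"{example_nominal:.3f} nominal -> {example_effective:.3f} effective pixels/degree")
```

The green channel needs no such correction in this simplified picture, which is one more reason to use it for the single-number resolution.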

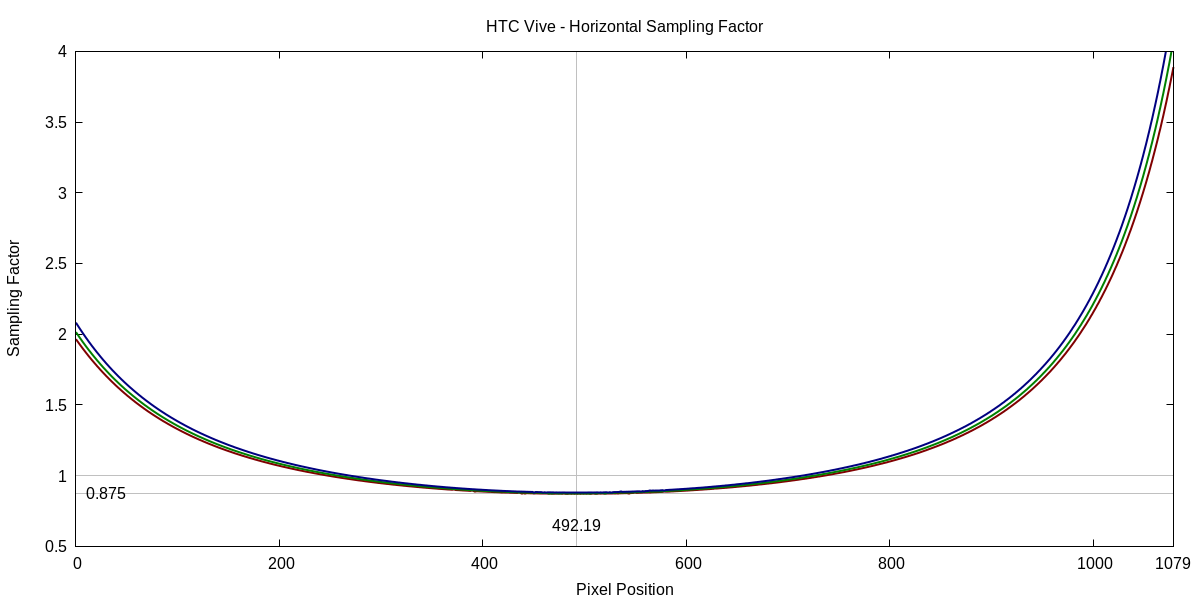

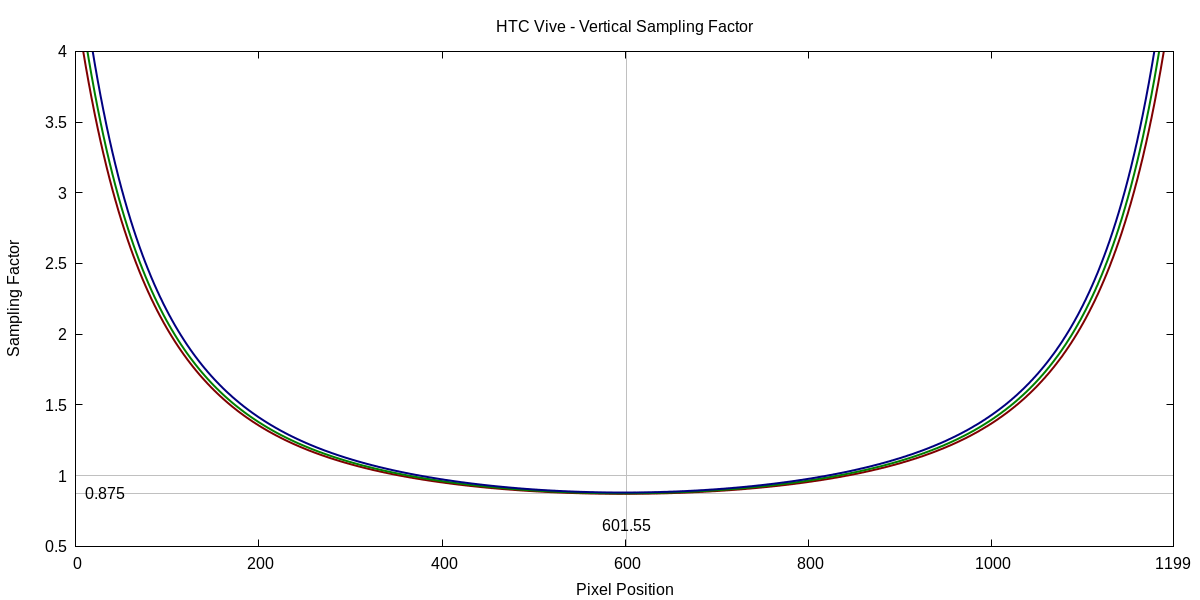

The second set of figures, 3 and 4, show the size relationship between display pixels and pixels on the intermediate tangent-space render target using the default render target size (1512×1680 pixels). Factors smaller than 1 indicate that display pixels are smaller than intermediate pixels, and factors larger than 1 indicate that one display pixel covers multiple intermediate pixels. Again, refer to the previous article for an explanation of tangent-space rendering and post-rendering lens distortion correction.

Figure 3: Sampling factor between display and intermediate render target along a horizontal line through the right display’s lens center of an HTC Vive.

Figure 4: Sampling factor between display and intermediate render target along a vertical line through the right display’s lens center of an HTC Vive.

These sampling factors are an important consideration in designing a VR rendering pipeline. A high factor like the 4.0 at the upper and lower edges of the display (see Figure 4) means that the renderer has to draw four pixels (at the horizontal lens center), or up to 16 pixels at one of the image corners, to generate a single display pixel. Not only is this a lot of wasted effort, but it can also cause aliasing during the lens correction step. The standard bilinear filter used in texture mapping does not cope well with high sampling factors, and especially highly anisotropic sampling factors such as 4:1, requiring a move to more complex and costly higher-order filters. Rendering tricks such as lens-matched shading are one approach to maintain most of the standard 3D rendering pipeline without requiring high sample factors.
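The pixel counts in the paragraph above follow from multiplying the horizontal and vertical sampling factors. Here is a minimal sketch of that arithmetic; the function names are made up for illustration, and the horizontal factor of 1.0 at the display edge is an assumption, not a value read from Figure 3.

```python
def rendered_pixels_per_display_pixel(horizontal_factor: float,
                                      vertical_factor: float) -> float:
    """Number of intermediate pixels covered by one display pixel."""
    return horizontal_factor * vertical_factor


def anisotropy(horizontal_factor: float, vertical_factor: float) -> float:
    """Ratio between the larger and the smaller sampling factor."""
    larger = max(horizontal_factor, vertical_factor)
    smaller = min(horizontal_factor, vertical_factor)
    return larger / smaller


# Upper or lower display edge at the horizontal lens center: factor 4.0
# vertically, and (assumed here) about 1.0 horizontally.
edge_cost = rendered_pixels_per_display_pixel(1.0, 4.0)
edge_anisotropy = anisotropy(1.0, 4.0)

# Image corner: factor 4.0 in both directions.
corner_cost = rendered_pixels_per_display_pixel(4.0, 4.0)

print(f"edge: {edge_cost:.0f} pixels, anisotropy {edge_anisotropy:.0f}:1")
print(f"corner: {corner_cost:.0f} pixels")
```

The edge case is the troublesome one for a bilinear filter: the cost is only four pixels, but the footprint is four times longer in one direction than in the other.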

Interestingly, at standard render target size, the sampling factor around the center of the lens is smaller than 1.0, meaning the render target is slightly lower resolution than the actual display. This was probably chosen to create a larger region of the field of view where display and intermediate pixels are roughly the same size.

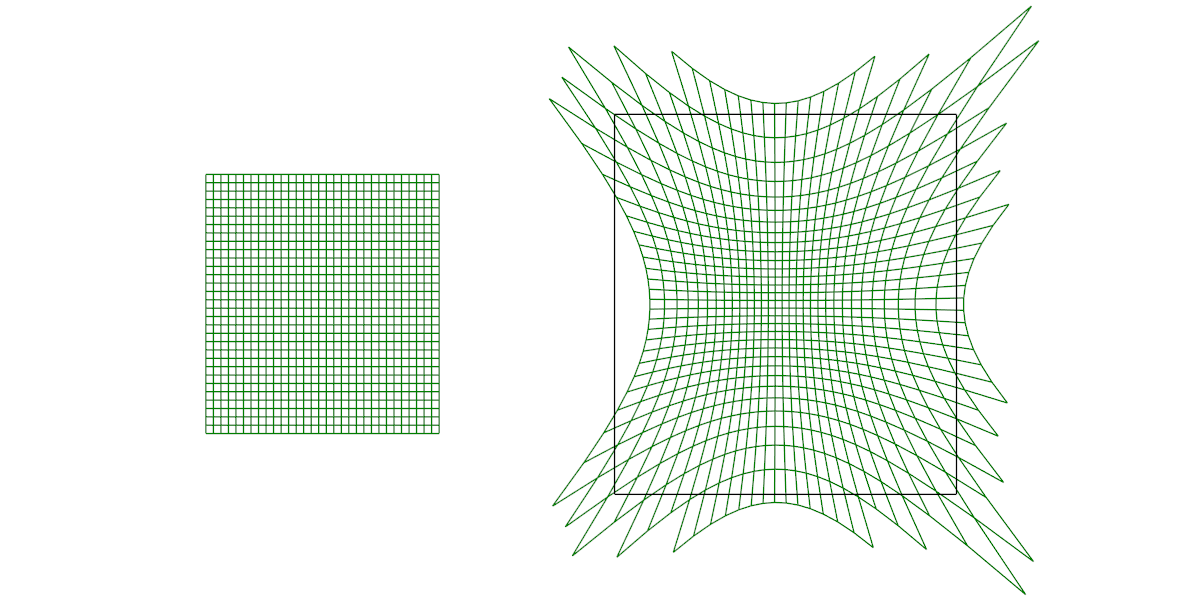

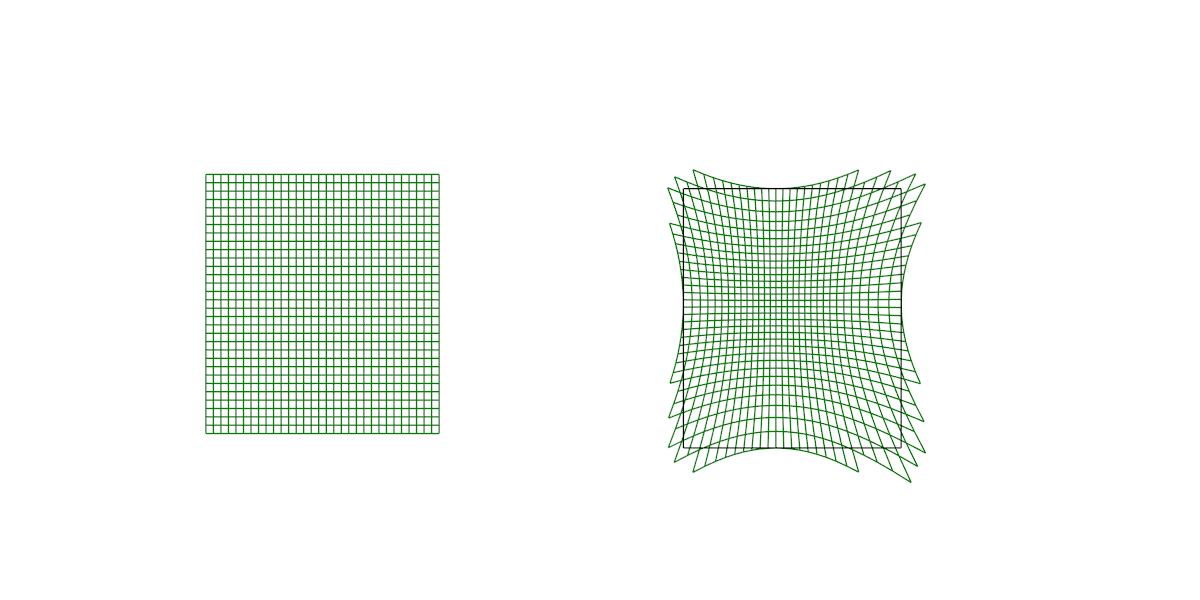

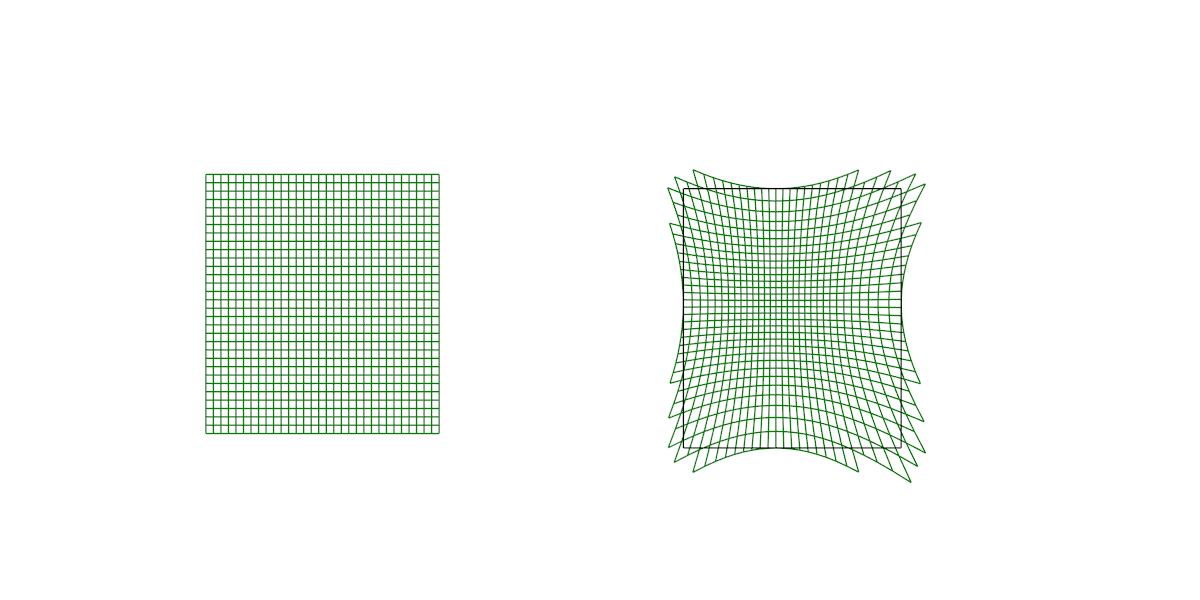

The last figure in this section, Figure 5, shows the lens distortion correction mesh and the spatial relationship between the actual display (left) and the intermediate render target (right). The black rectangle on the right denotes the tangent-space boundaries of the intermediate render target, i.e., the upper limit on the headset’s field of view.

Figure 5: Visualization of HTC Vive’s lens distortion correction. Left: Distortion correction mesh in display space. Right: Distortion correction mesh mapped to intermediate render target, drawn in tangent space. Only parts of the mesh intersecting the render target’s domain (black rectangle) are shown.

Note that in the Vive’s case, not only do some display pixels not show valid data, i.e., the parts of the distortion mesh that are mapped outside the black rectangle, but neither do all parts of the intermediate image contribute to the display. The render target overshoots the left edge of the display, leading to the lens-shaped “missing area” visible in the graph.

Oculus Rift

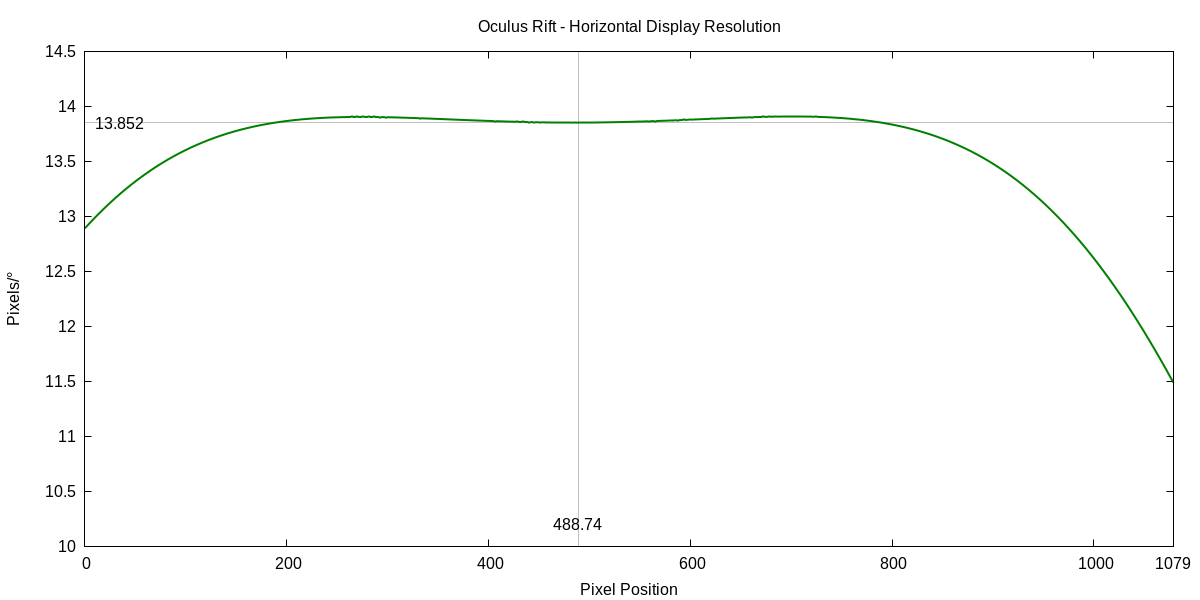

As above, the first two figures, 6 and 7, show display resolution in pixels/°, on one horizontal and one vertical cross-section through the lens center of one specific Oculus Rift’s right display.

Figure 6: Display resolution in pixels/° along a horizontal line through the right display’s lens center of an Oculus Rift.

Figure 7: Display resolution in pixels/° along a vertical line through the right display’s lens center of an Oculus Rift.

Unlike in the previous section, there is only a single resolution curve instead of one curve for each of the primary color channels. This is due to Oculus treating their lens distortion correction method as a trade secret. I had to pull some tricks to get even a single curve, as I am going to describe later. Unfortunately, I am not exactly sure to which primary color channel this single curve belongs. Based on how I reconstructed it, it would make sense for it to be the green channel, but based on the shape of the curve, it looks more like it is the red channel. Note the “double hump” around the lens center, where resolution goes up before it goes down. This is the same shape as the Vive’s red channel curve, but neither the Vive’s green nor blue channel curves show those humps. This will become a point in the comparison section below.

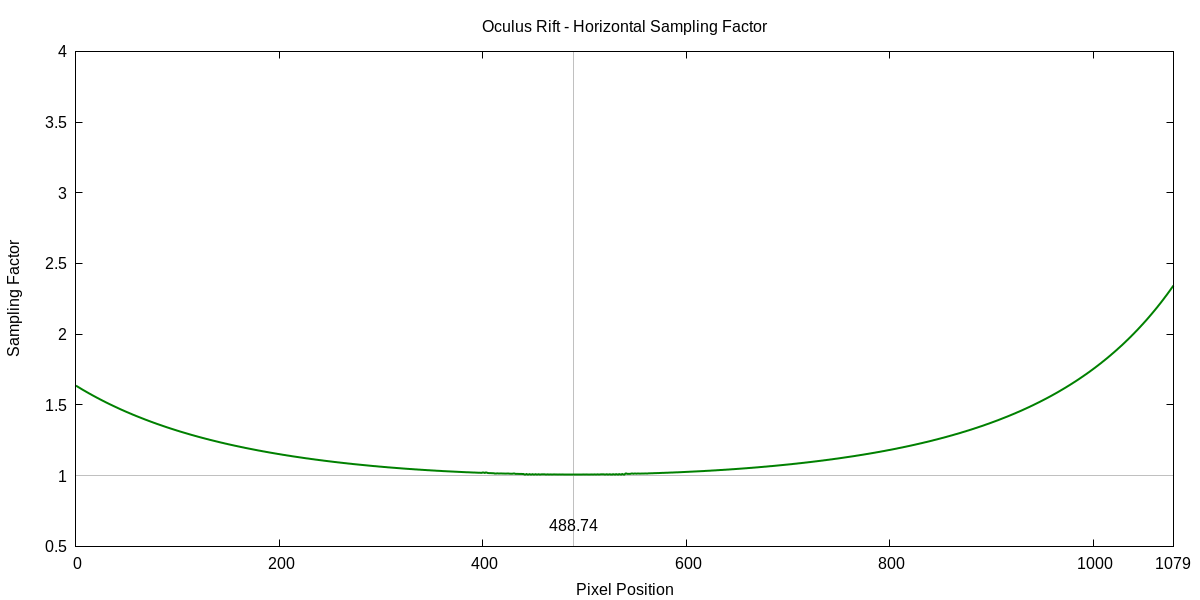

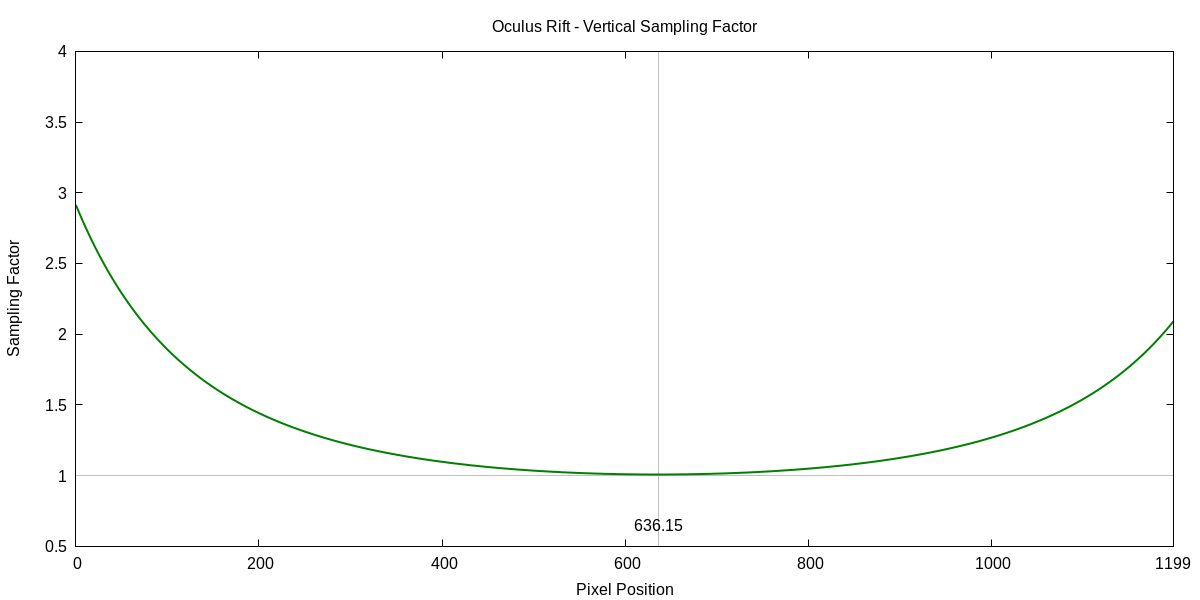

The next set of figures, 8 and 9, are again horizontal and vertical sampling factors, at the default render target size (1344×1600 in the Rift’s case).

Figure 8: Sampling factor between display and intermediate render target along a horizontal line through the right display’s lens center of an Oculus Rift.

Figure 9: Sampling factor between display and intermediate render target along a vertical line through the right display’s lens center of an Oculus Rift.

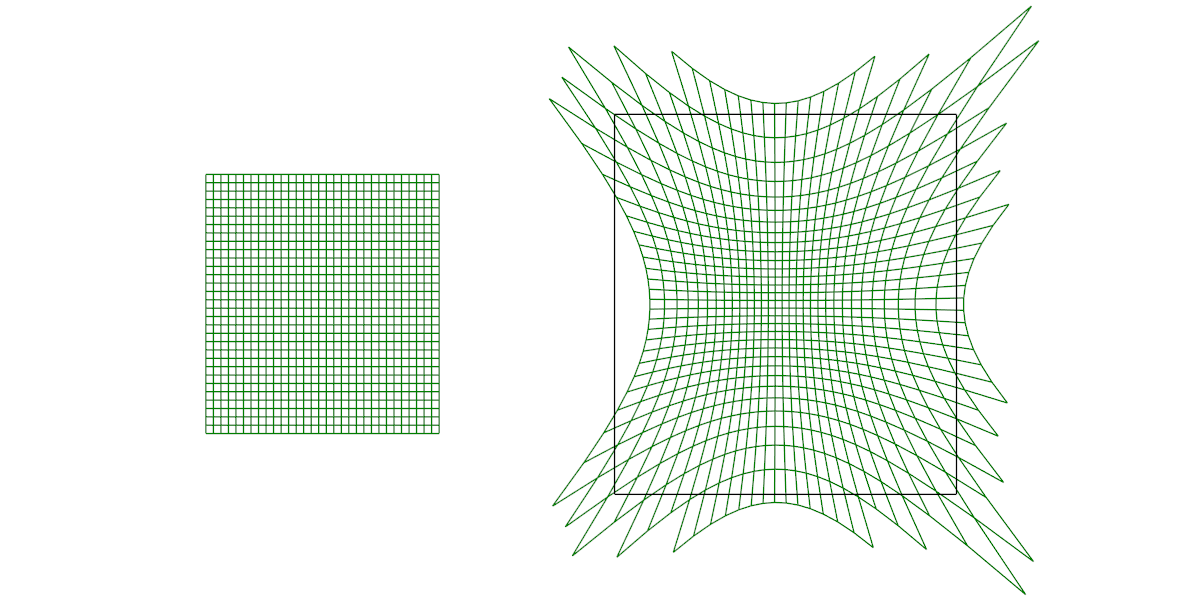

The final figure, Figure 10, is as before a visualization of the lens distortion correction step used at the end of the Rift’s rendering pipeline.

Figure 10: Visualization of Oculus Rift’s lens distortion correction. Left: Distortion correction mesh in display space. Right: Distortion correction mesh mapped to intermediate render target, drawn in tangent space. Only parts of the mesh intersecting the render target’s domain (black rectangle) are shown.

Comparison

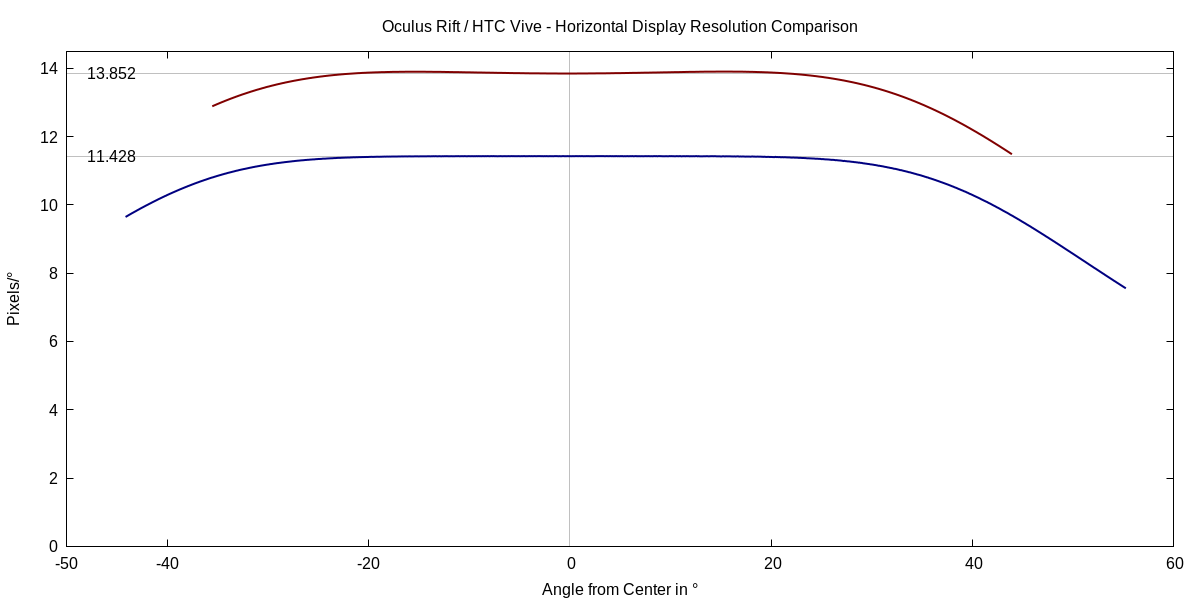

Based on the results from the two headsets, the most obvious difference between the optical properties of the HTC Vive and Oculus Rift is that Oculus traded reduced field of view for increased resolution. The “single number” resolution of the Oculus Rift, 13.852 pixels/°, is significantly higher than the HTC Vive’s 11.428 pixels/°. Under the assumption that the single curve I was able to extract represents the green channel, the Rift’s resolution is 21.2% higher than the Vive’s. Should the curve represent the red channel (as hinted at by the “double hump”), it would still be 20.6% higher (so no big deal).
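The green-channel percentage can be checked with a few lines of arithmetic. The sketch below uses the Rift's 13.852 pixels/° and the Vive's 11.428 pixels/°, the two center resolutions marked in Figure 11; the helper name is an illustrative assumption.

```python
def relative_increase_percent(value: float, baseline: float) -> float:
    """How much larger `value` is than `baseline`, in percent."""
    return (value / baseline - 1.0) * 100.0


rift_center_ppd = 13.852  # pixels/degree at the Rift's lens center
vive_center_ppd = 11.428  # pixels/degree at the Vive's lens center (green)

increase = relative_increase_percent(rift_center_ppd, vive_center_ppd)
print(f"Rift is {increase:.1f}% higher")  # prints "Rift is 21.2% higher"
```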

The graphs in the previous sections, e.g., figures 1 and 6, show the change of resolution over the display’s pixel space, but that does not compare well between headsets due to their different fields of view. Drawing them in the same figure requires changing the horizontal axis from pixel space to, say, angle space, as in Figure 11.

Figure 11: Comparison of display resolution along horizontal axes through their respective lens centers between an HTC Vive and an Oculus Rift, drawn in angle space instead of pixel space.

Figure 11 also sheds light on the apparent difference in pixel sample factors between the two headsets. According to figures 3 and 4, the Vive’s rendering pipeline has to deal with a sampling factor dynamic range of 4.5 across the display (from 0.875 to 4.0), whereas the Rift only faces a range of 2.9. This is a second effect of the Rift’s reduced field of view (FoV). The Vive uses more of the periphery of the lens, where sampling factors rise dramatically. By staying closer to the center of the lens, the Rift avoids those dangerous areas.

Resolution and Field of View

Given that both headsets have displays with the same pixel count (1080×1200 per eye), it is clear that one pays for higher resolution with a concomitant reduction in FoV. Due to the non-linearities of tangent space rendering and lens distortion correction, there is, however, not a simple relationship between resolution and FoV.

Fortunately, knowing the tangent-space projection parameters of the intermediate render target and the coefficients used during lens distortion correction, one can calculate the precise fields of view and compare them directly. The first approach is to compare figures 5 and 10, which are drawn to the same scale and are replicated here for convenience:

Figure 5: Visualization of HTC Vive’s lens distortion correction. Left: Distortion correction mesh in display space. Right: Distortion correction mesh mapped to intermediate render target, drawn in tangent space. Only parts of the mesh intersecting the render target’s domain (black rectangle) are shown.

Figure 10: Visualization of Oculus Rift’s lens distortion correction. Left: Distortion correction mesh in display space. Right: Distortion correction mesh mapped to intermediate render target, drawn in tangent space. Only parts of the mesh intersecting the render target’s domain (black rectangle) are shown.

However, while they are to scale, comparing these two diagrams is somewhat misleading (which is why I did not attempt to overlay them on top of each other). They are drawn in tangent space, and while tangent space is important for the VR rendering pipeline, there is no linear relationship between differences in tangent space size and the resulting differences in observed FoV.
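To make the non-linearity concrete: a boundary at tangent-space coordinate t sits at an angle of atan(t) from the forward direction, so doubling a tangent-space extent does not double the angle it covers. The sketch below uses made-up boundary values, not the projection parameters of either headset.

```python
import math


def full_fov_degrees(tan_left: float, tan_right: float) -> float:
    """Angle between two tangent-space boundaries (left one is negative)."""
    return math.degrees(math.atan(tan_right) - math.atan(tan_left))


# Hypothetical symmetric render targets, the second twice as wide
# in tangent space as the first.
narrow = full_fov_degrees(-1.0, 1.0)
wide = full_fov_degrees(-2.0, 2.0)

print(f"tangent width 2.0 -> {narrow:.2f} degrees")
print(f"tangent width 4.0 -> {wide:.2f} degrees")
print(f"ratio of angles: {wide / narrow:.2f}")
```

With these illustrative numbers, twice the tangent-space width buys only about 1.4 times the angular field of view, which is why overlaying the two tangent-space diagrams would exaggerate the difference between the headsets.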

There is not a single way to draw headset FoV in a 2D diagram that works for all inquiries, but in this case, a better comparison could be drawn from transforming the FoV extents to polar coordinates, where each point on the display is drawn at its correct lateral angle from the central “forward” or 0° direction, and the distance from a display point to the central point is proportional to that point’s angle away from the 0° direction.
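The polar mapping described above can be sketched as follows. This is a minimal illustration that assumes a tangent-space point (x, y) with the forward direction at the origin; the function name is hypothetical and is not taken from the software used to draw the figures.

```python
import math
from typing import Tuple


def tangent_to_polar_plot(x: float, y: float) -> Tuple[float, float]:
    """Map a tangent-space point to polar-diagram coordinates in degrees.

    The lateral direction of the point is preserved, and its distance from
    the diagram's center equals its angle away from the forward direction.
    """
    tan_radius = math.hypot(x, y)
    if tan_radius == 0.0:
        return (0.0, 0.0)  # the forward direction itself
    angle_deg = math.degrees(math.atan(tan_radius))
    scale = angle_deg / tan_radius
    return (x * scale, y * scale)


# A point at tangent 1.0 straight to the right is 45 degrees off-axis.
print(tangent_to_polar_plot(1.0, 0.0))
# A diagonal point keeps its 45-degree lateral direction.
print(tangent_to_polar_plot(1.0, 1.0))
```

In such a diagram, circles around the center are lines of equal angular distance from the forward direction, which is what the grey 5° circles in the following figures represent.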

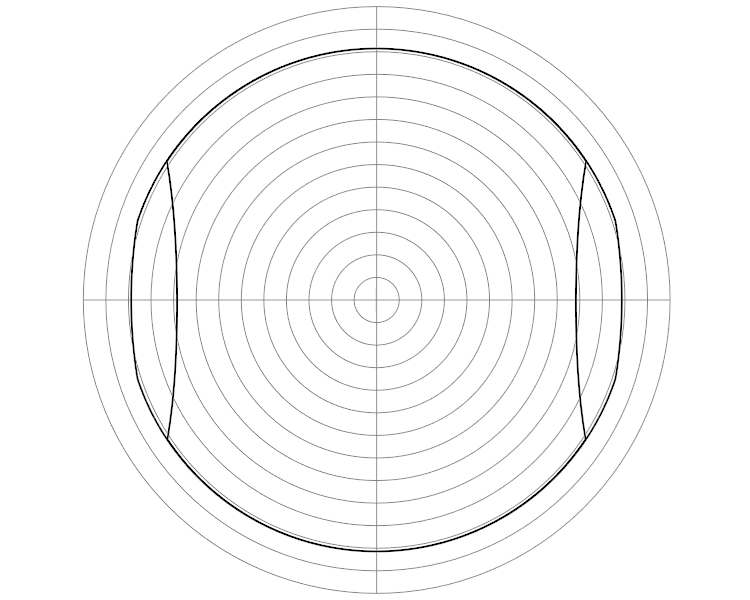

Figures 12 and 13 show the Vive’s and Rift’s FoVs, respectively, in polar coordinates. In the Vive’s case, Figure 12 does not show the full FoV area implied by the intermediate render target (minus the “missing area”, see Figure 5), as the Vive’s rendering pipeline does not draw to the entire intermediate target, but only to the inside of a circle around the forward direction.

Figure 12: Combined field of view (both eyes) of the HTC Vive in polar coordinates. Each grey circle indicates 5° away from the forward direction.

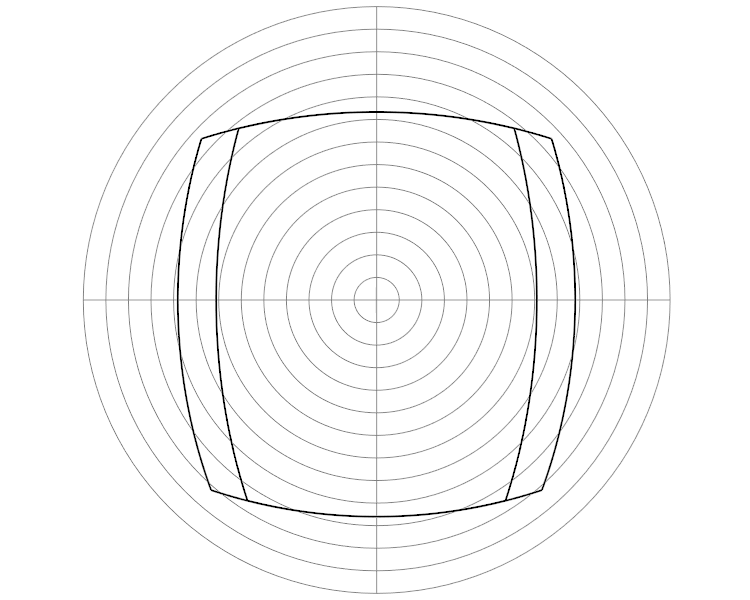

Figure 13: Combined field of view (both eyes) of the Oculus Rift in polar coordinates. Each grey circle indicates 5° away from the forward direction.

In addition, I am showing the combined field of view of both eyes for each headset, to illustrate the different amounts of binocular overlap between the two headsets. Figure 12 shows that the Vive’s overall FoV is roughly circular, with the lens-shaped “missing area” on the inside of either eye. Aside from that area, the per-eye fields of view overlap perfectly.

Figure 13 shows that the Rift’s FoV is more rectangular, as it is using the entire rectangle of the intermediate render target, and has a distinct displacement between the two eyes, to increase total horizontal FoV at the cost of binocular overlap. Also of note is that the Rift’s FoV is asymmetric in the vertical direction. The screens are slightly shifted downward behind the lenses, to better place the somewhat smaller FoV in the user’s overall field of vision.

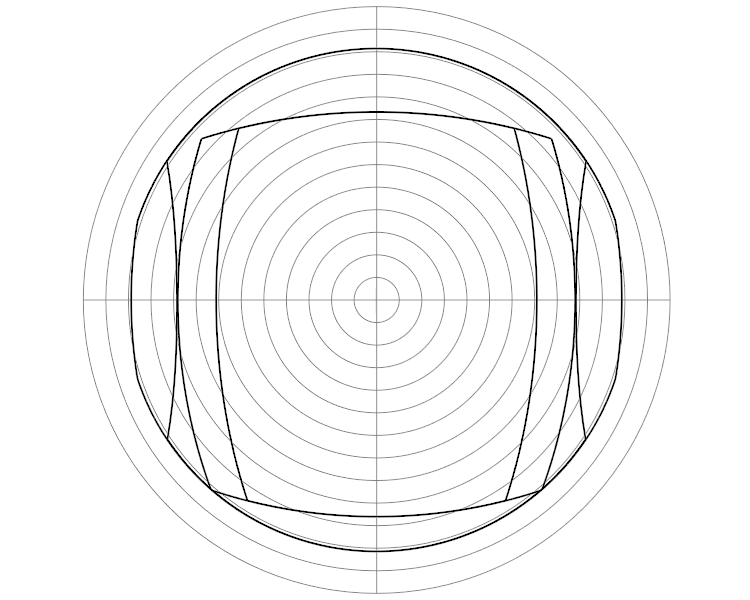

Unlike in tangent space, it does make sense to directly compare these polar-coordinate diagrams, and I am doing so in Figure 14:

Figure 14: The fields of view of the HTC Vive and Oculus Rift overlaid in polar coordinates. Each grey circle indicates 5° away from the forward direction.

Notably, the Rift’s entire binocular FoV, including the non-stereoscopic side regions, is exactly contained within the Vive’s binocular overlap area.

Extracting the Oculus Rift’s Lens Distortion Correction Coefficients

As I mentioned in the beginning, Oculus are treating the Rift’s lens distortion correction method as a trade secret. Unlike the Vive, which, through OpenVR, offers a function to calculate the lens-corrected tangent space position of any point on the display, Oculus’ VR run-time does all post-rendering processing under the hood.

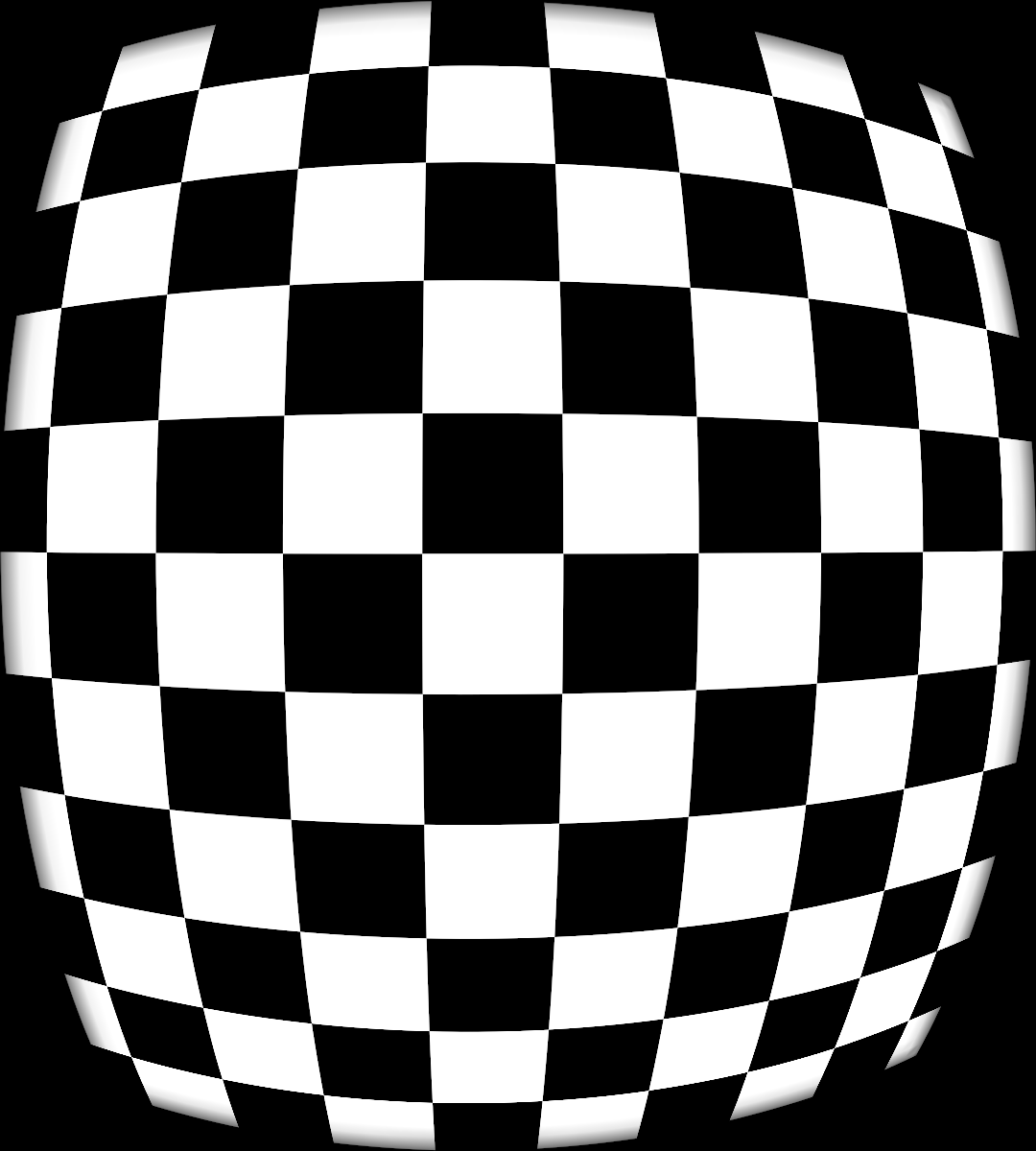

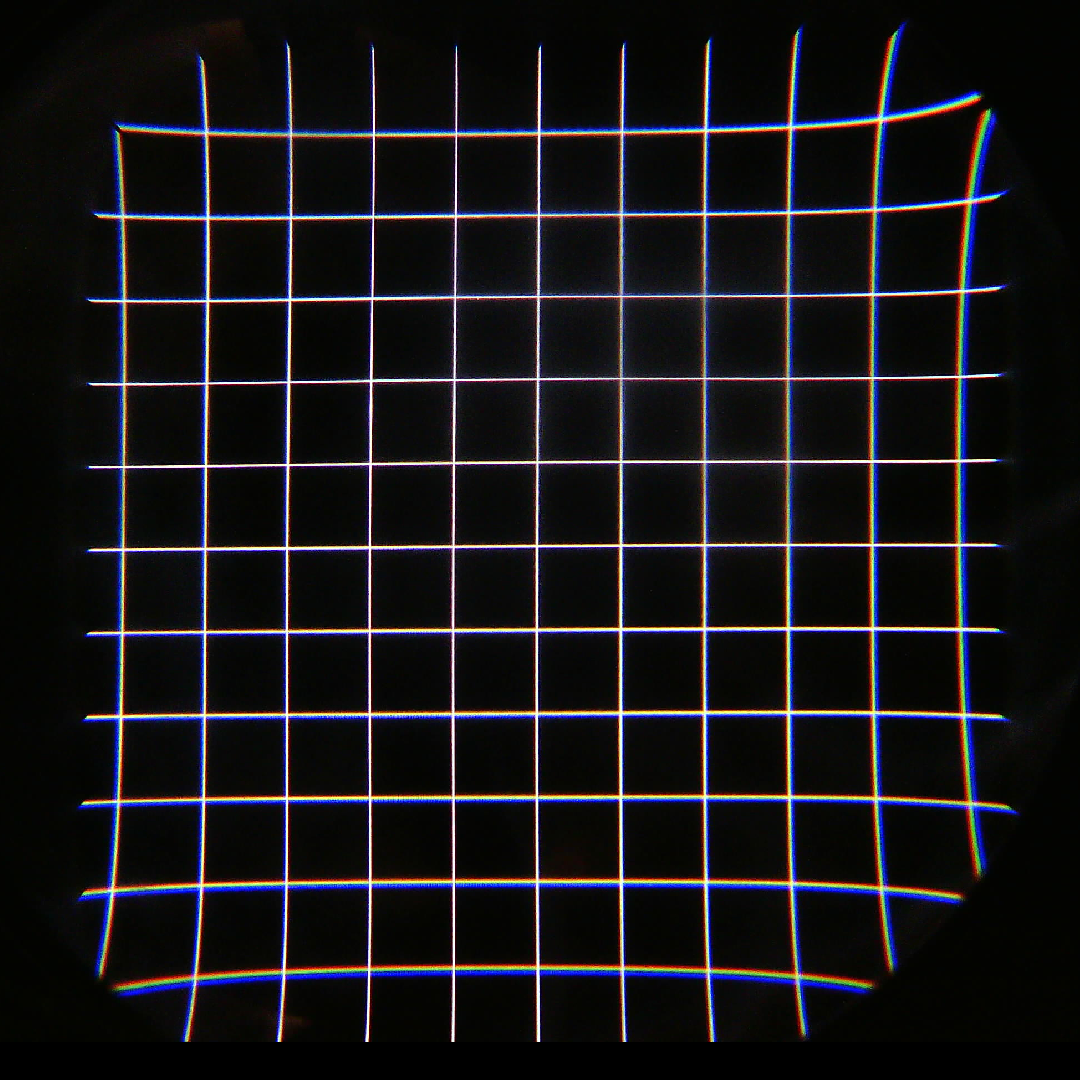

So how did I generate the data in figures 6-10 and 13? The hard way, that’s how. I wrote a small libOVR-based VR application that ignored 3D and head tracking and all that and simply rendered a checkerboard in tangent space, directly into the intermediate rendering target. Then I used a debugging mode in libOVR’s mirror window functionality to show a barrel-distorted view of that checkerboard on the computer’s main monitor, where I took a screen shot (see Figure 15).

Figure 15: Screen shot from libOVR mirror window, showing a barrel-distorted rendering of a checkerboard drawn directly in tangent space. Unfortunately, the image shows no sign of chromatic aberration correction.

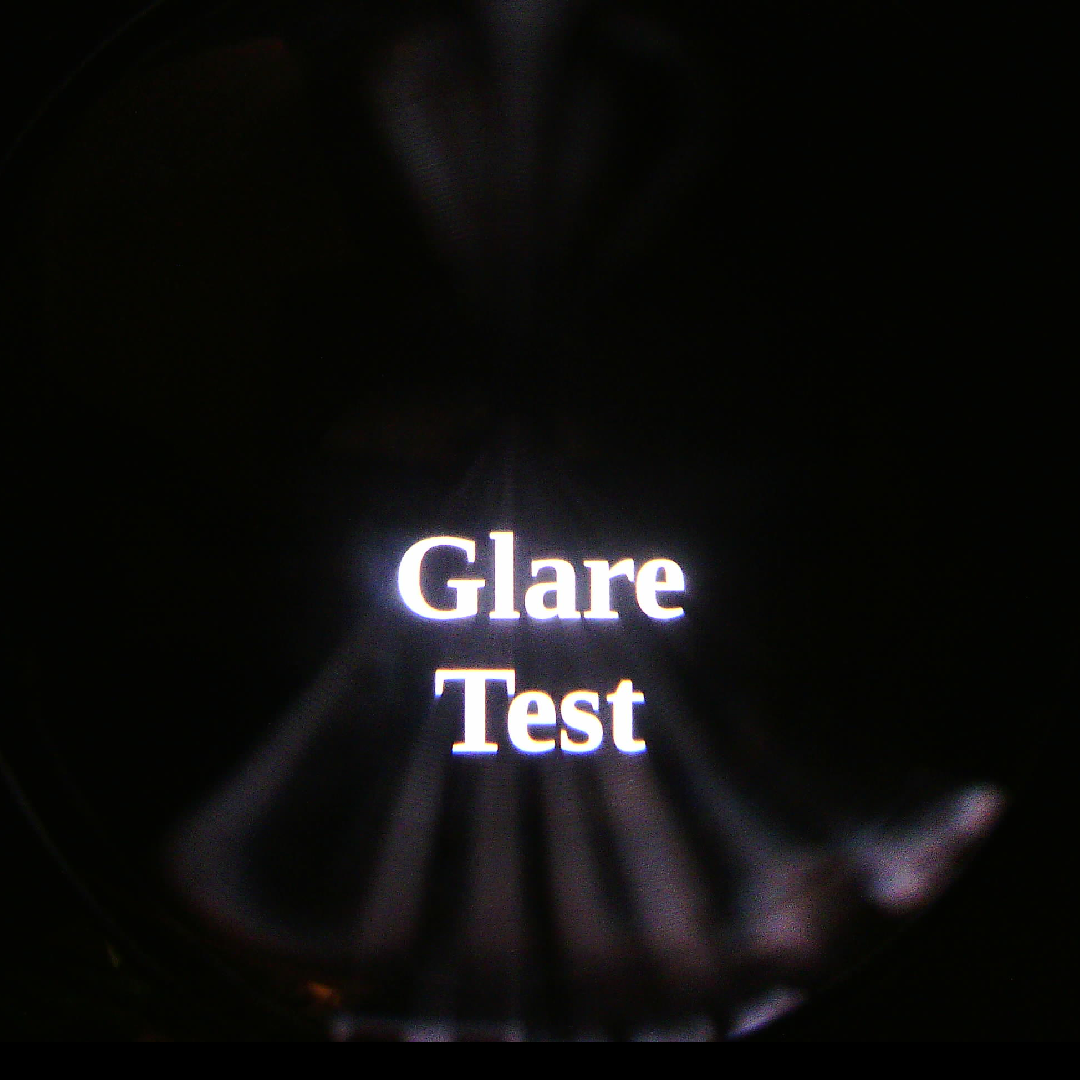

To my surprise, the barrel-distorted view did not show any signs of chromatic aberration correction. So it was not, as I had hoped, a direct copy of the image painted to the Rift’s display (see Figure 16 for proof that the Rift’s lenses do suffer from chromatic aberration), but some artificial recreation thereof, potentially generated with a different set of lens distortion coefficients. Even in the best case, this meant I was not going to be able to create separate resolution curves for the three primary color channels.

I barreled ahead nonetheless, and since the results I got made sense, I assume there is at least some relationship between this debugging output and the real display image. Next, I fed the screen shot into my lens distortion correction utility, which extracted the interior corner points of the distorted grid, and then ran those corner points through a non-linear optimization procedure that, in the end, spat out a set of distortion correction coefficients that mapped the input image to the original drawing I had made in tangent space to about 0.5 pixels tolerance. With those correction coefficients in hand, plus the tangent-space projection parameters given to me by libOVR, I was able to generate the diagrams and figures I needed.
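To give a rough idea of what such a fitting step involves, here is a heavily simplified sketch. It is not the utility described above: it assumes a purely radial model with two coefficients, r_tangent = r_display · (1 + k1·r² + k2·r⁴), with the lens center at the origin. Because that model is linear in k1 and k2, it swaps the non-linear optimization for an ordinary least-squares solve. The model, the function names, and the synthetic test data are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def apply_radial_model(point: Point, k1: float, k2: float) -> Point:
    """Map a display-space point to tangent space with a radial polynomial."""
    x, y = point
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return (x * scale, y * scale)


def fit_radial_coefficients(display: List[Point],
                            tangent: List[Point]) -> Tuple[float, float]:
    """Least-squares fit of k1, k2 from matched corner points."""
    # Build the 2x2 normal equations for r_t - r_d = k1*r_d^3 + k2*r_d^5.
    s11 = s12 = s22 = b1 = b2 = 0.0
    for (xd, yd), (xt, yt) in zip(display, tangent):
        rd = math.hypot(xd, yd)
        rt = math.hypot(xt, yt)
        a1, a2 = rd ** 3, rd ** 5
        s11 += a1 * a1
        s12 += a1 * a2
        s22 += a2 * a2
        b1 += a1 * (rt - rd)
        b2 += a2 * (rt - rd)
    det = s11 * s22 - s12 * s12
    if det == 0.0:
        raise ValueError("corner points do not constrain both coefficients")
    k1 = (b1 * s22 - s12 * b2) / det
    k2 = (s11 * b2 - s12 * b1) / det
    return (k1, k2)


# Synthetic stand-in for extracted grid corners: a 9x9 grid distorted
# with known (made-up) coefficients, which the fit should recover.
true_k1, true_k2 = 0.22, 0.05
grid = [(-1.0 + 0.25 * i, -1.0 + 0.25 * j) for i in range(9) for j in range(9)]
targets = [apply_radial_model(p, true_k1, true_k2) for p in grid]

k1, k2 = fit_radial_coefficients(grid, targets)
max_error = max(
    math.hypot(fx - tx, fy - ty)
    for (fx, fy), (tx, ty) in zip(
        (apply_radial_model(p, k1, k2) for p in grid), targets)
)
print(f"k1={k1:.4f}, k2={k2:.4f}, max residual={max_error:.2e}")
```

A real fit would also have to estimate the lens center and cope with measurement noise in the extracted corners, which is where a general non-linear optimizer earns its keep.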

Figure 16: Through-the-lens photo of Oculus Rift’s display, showing a calibration grid. There are noticeable color fringes from chromatic aberration, meaning that Oculus’ rendering pipeline does correct for it, but does not reflect that in the debugging mirror window mode.

And while I had the Rift strapped into my camera rig, I also took the shot I couldn’t take when I wrote this article about the optical properties of then-current VR HMDs a long time ago. Here it is, the Oculus Rift Glare Test:

Is that 13.852 pixels/degree a horizontal measurement? Or is it vertical or diagonal?

Ah nvm. You have it stated in the graph.

Both horizontal and vertical. At the lens center, resolution is the same in either direction. Away from the lens center, resolution is finer along the circumference of a circle through a point than along a radius through that same point, because lens distortion is primarily radial.

Go lenses fail miserably next to wearality. It has aliasing effects and chromatic aberration issues conquered by other solutions. Wearality chromatic aberration https://imgur.com/gallery/vQEw9Jv

https://m.imgur.com/gallery/aaaH3ny

Sitesinvr.com for reference data.

Second question: why not use an area-based comparison (i.e., pixels/degree^2)? Do you feel the linear comparison is more descriptive of the experience?

The short of it is that resolution measured in pixels / linear unit (mm / inches / degrees) is more aligned with perceptual quality improvements. Twice the resolution -> looks twice as clear. Twice the resolution -> can read text of half the font size.

Thanks for your perspective! Really enjoying your well researched articles man, thank you for putting all this info out there.

You could display a green checkerboard to remove doubt about color channels.

I don’t think that would resolve it. The test image I used was black and white, meaning it included all three color channels. The result I got from the debug mirror window was also black and white, without any color fringes, meaning barrel distortion was applied uniformly for all color channels. It would be very strange if the algorithm treated a source image that only had color in a single channel differently than a source image with color in all three channels.

As always, your write ups are great! Thank you for that.

I was able to characterize the chromatic aberration using a method similar to yours. To capture the distortion of each color component, I generated a similar grid for each component color and captured the 1:1 2160×1200 video frame that is sent to the Oculus device with a frame grabber.

That would do it. Unfortunately, I don’t have a frame grabber.

If you shared a link to those images here then perhaps the author could update the article. I definitely would be curious how similar the distortion is between the preview and the actual image.

Really appreciating the thoroughness of your tests here. Have you had a chance to try out a Vive Pro yet? Subjectively and anecdotally, it seems to have significantly better resolution than the 1st-gen Vive. But I’d love to see what the real numbers are…

Perhaps the math or reality doesn’t work this way, but would it be possible to map the display resolution over top of the polar-coordinate graph using something like color-coded rainfall maps / heatmaps? I’m thinking especially of brightness uniformity diagrams when people take a meter to a new LCD panel, but it seems like this might be a way to take the linear information presented in the first few graphs and map it to the (extremely helpful) polar-coordinate graphs being used to represent the FoV.

No, that’s entirely possible. I’d have to use a single measure of resolution that takes anisotropy away from the lens center into account, and it would be a lot of work, but I agree it would be useful.

Figure 11 could really use a legend. It is not intuitive which of the two headsets is the red and which the blue.

The curve that touches the “13.852 pixels/°” line belongs to the headset that has a resolution of 13.852 pixels/°, and the curve that touches the “11.428 pixels/°” line belongs to the headset that has a resolution of 11.428 pixels/°.

Please compare the oculus go to the mirage solo with this angle based methodology. I have to agree with Ben Lang when he criticizes the lack of innovation and advancement of certain devices at this stage of the release cycle.

sooo what’s the vertical fov of the vive/vive pro and rift …..I’m not finding that information

See Figures 12 and 13.

Good work. But the image seen through the lenses of the Oculus is zoomed by 4% compared to the Vive, and the resolution is then 13.27 pixels/degree (the Oculus is only 16.4% better, not 21.2%; just shoot through the lenses and compare pixel size). I compared the resolution of all PC HMDs through the lenses (Oculus, Vive, Lenovo, Odyssey, Pimax 5k+, PSVR).