I’ve previously written about our low-cost VR environments based on 3D TVs and optical tracking. While “low-cost” compared to something like a CAVE, they are still not exactly cheap (around $7000 all told), and not exactly easy to install.

What I haven’t mentioned before is that we have an even lower-cost, and, more importantly, easier to install, alternative using just a 3D TV and a Razer Hydra gaming input device. These environments are not holographic because they don’t have head tracking, but they are still very usable for a large variety of 3D applications. We have several of these systems in production use, and demonstrated them to the public twice, in our booth at the 2011 and 2012 AGU fall meetings. What we found there is that the environments are very easy to use; random visitors walking into our booth and picking up the controllers were able to control fairly complex software in a matter of minutes.

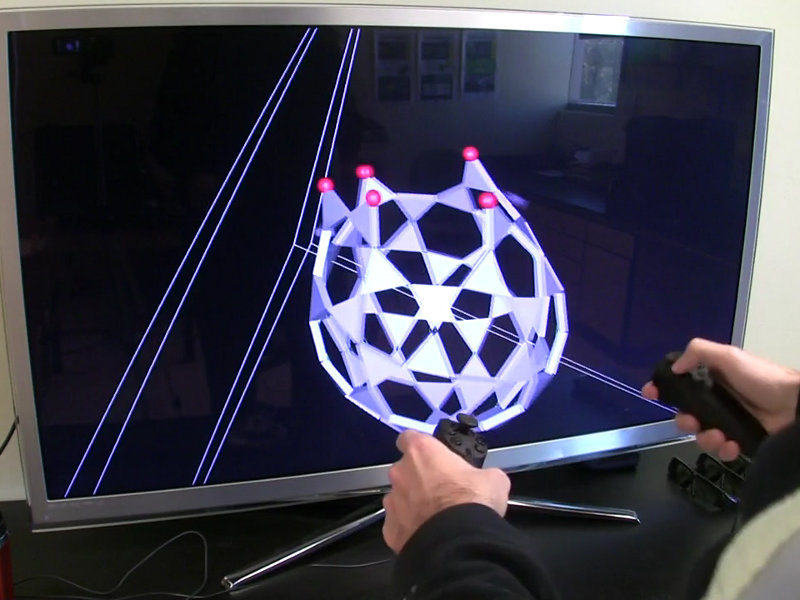

A user controlling a low-cost 3D display (running the Nanotech Construction Kit) with a Razer Hydra 6-DOF tracked input device.

Unlike the Wiimote or the PlayStation Move, the Razer Hydra uses electromagnetic tracking. Using a set of magnetic coils, three in the base station and three in each handheld device, it can calculate the position and orientation of each device relative to the base station. This is one of the oldest tracking technologies around (all original CAVEs and similar environments used magnetic tracking, either the Polhemus Fastrak or Ascension Flock of Birds devices), but it is the first time that this technology has been implemented on a gaming budget (around $150).

The common problem with magnetic tracking is field distortion. Any metal in the vicinity will distort the magnetic field, and cause offsets between a device’s actual and measured positions. These offsets vary — sometimes tremendously — throughout the tracking space, and also over time, for example if users have keys in their pockets. In high-end installations, it is possible to calibrate for the presence of metal by measuring the tracking field at a large number of 3D positions, and calculating position-dependent correction factors, but this cannot be expected from a typical end user who bought a device and just wants to use it.
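To illustrate what position-dependent correction can look like, here is a minimal sketch under stated assumptions: the correction offsets were measured on a regular 3D grid, and corrections between grid nodes are blended by trilinear interpolation. The function name `correct_position`, its parameters, and the grid layout are all hypothetical; this is not the calibration procedure of any particular tracking system.

```python
import math

def correct_position(measured, grid_origin, cell_size, offsets):
    """Return the measured position plus an interpolated correction.

    Assumed layout (hypothetical): offsets[i][j][k] is the (dx, dy, dz)
    correction measured at grid node grid_origin + cell_size * (i, j, k),
    with at least two nodes along every axis.
    """
    dims = (len(offsets), len(offsets[0]), len(offsets[0][0]))
    idx, frac = [], []
    for axis in range(3):
        # Convert the measured coordinate to fractional grid units.
        g = (measured[axis] - grid_origin[axis]) / cell_size
        # Clamp to the calibrated volume instead of extrapolating.
        g = min(max(g, 0.0), float(dims[axis] - 1))
        i = min(int(math.floor(g)), dims[axis] - 2)
        idx.append(i)
        frac.append(g - i)

    # Blend the corrections of the eight surrounding grid nodes.
    correction = [0.0, 0.0, 0.0]
    for di in (0, 1):
        for dj in (0, 1):
            for dk in (0, 1):
                weight = ((frac[0] if di else 1.0 - frac[0]) *
                          (frac[1] if dj else 1.0 - frac[1]) *
                          (frac[2] if dk else 1.0 - frac[2]))
                node = offsets[idx[0] + di][idx[1] + dj][idx[2] + dk]
                for c in range(3):
                    correction[c] += weight * node[c]

    return tuple(measured[c] + correction[c] for c in range(3))
```

Filling the `offsets` table is the laborious part, since every grid node has to be measured against a known reference position, which is why this kind of calibration is impractical for a typical end user.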

A way around the distortion issue is to relax the usual requirement that the physical and virtual positions of a tracked device exactly coincide. The difference is very similar to that between a mouse and a touchscreen: when a user wants to press a GUI button using a touchscreen, she simply has to reach out, and place her finger on the button. Using a mouse instead, she has to grab the mouse (which is on the desktop, and not on the monitor, therefore quite a bit away and moving in a different plane), move the physical mouse until the mouse’s virtual representation, i.e., the mouse cursor, lands on the button, and then press a mouse button. We have all done that so much that it has become second nature, but looking at it from the outside, it is a fundamental difference. The same is true with absolutely tracked devices and relatively tracked devices such as the Razer Hydra: instead of touching a virtual object with the physical device itself, the user has to move her hands around until the virtual representation, say a cone, touches the virtual object.

There is a noticeable difference in usability between these two approaches, although training can alleviate almost all of it. But since the indirect approach works very well after training, and the device is really cheap, there is little reason to complain.

With that in mind, the setup of such a system is very simple. Put a 3D TV onto a desk, place the Razer Hydra’s base station a few inches in front of the screen, and connect it to the computer. Then, if the VR software has been pre-configured to use a Razer Hydra device, simply start using it. More advanced users might want to fine-tune their setup, particularly the offset between the handles’ physical and virtual positions, to better suit their work habits, but those steps are optional.
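The offset between a handle's physical and virtual positions can be pictured as a simple mapping. The sketch below is an illustration only, assuming a fixed translation plus an optional extension along the handle's pointing direction. The function `virtual_position` and the parameters `workspace_offset` and `reach` are hypothetical names, not settings of any actual VR software.

```python
def virtual_position(measured, forward, workspace_offset, reach=0.0):
    """Map a tracked handle position to the position of its virtual cursor.

    measured         -- handle position reported by the tracker (x, y, z)
    forward          -- direction the handle points in (need not be unit length)
    workspace_offset -- fixed translation from tracker space to display space
                        (hypothetical tuning parameter)
    reach            -- distance the cursor floats ahead of the handle
                        (hypothetical tuning parameter)
    """
    length = sum(c * c for c in forward) ** 0.5
    if length == 0.0:
        raise ValueError("forward direction must be non-zero")
    unit = tuple(c / length for c in forward)
    return tuple(m + o + reach * u
                 for m, o, u in zip(measured, workspace_offset, unit))
```

Increasing `reach` lets a user touch virtual objects deep inside the display while keeping her hands in a comfortable position above the desk.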

In our software, a few default tools are bound to the device on application start-up. One button on each handle is used to pick up and move world space, in position and orientation. If both buttons are pressed together, users can scale world space by pulling their hands apart or pushing them together. One more button on each handle is used to bring up an application’s main menu, and to interact with GUI elements already on the screen. All other buttons can be dynamically assigned to arbitrary application functionality, such as grabbing and moving atoms in the Nanotech Construction Kit, as shown in this video:
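As an aside on the two-handed scaling tool described above, the underlying arithmetic can be sketched as follows. This is a minimal illustration, assuming that the scale factor is the ratio of the current hand distance to the hand distance when both buttons were pressed, and that scaling is applied about a fixed center such as the midpoint between the hands. The function names are hypothetical.

```python
import math

def two_handed_scale(start_left, start_right, left, right):
    """Scale factor implied by pulling the hands apart or pushing them together."""
    start_distance = math.dist(start_left, start_right)
    if start_distance == 0.0:
        raise ValueError("hands must start at distinct positions")
    return math.dist(left, right) / start_distance

def scale_about(point, center, factor):
    """Scale a world-space point about a fixed center by the given factor."""
    return tuple(c + factor * (p - c) for p, c in zip(point, center))
```

Doubling the distance between the hands yields a factor of 2, so everything in world space appears twice as large, while halving the distance shrinks it by the same ratio.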

The total cost of such an environment breaks down as follows: around $1000 for the computer running it, which buys a very powerful one; as low as around $1000 for a smaller 3D TV, depending on deals, or around $1700 for a 65″ one; and $150 for the Razer Hydra itself. That comes to roughly $2150 to $2850 in total. This is a pretty good starter environment for serious use. And, even better, there is a smooth upgrade path to a holographic low-cost 3D display, by adding and calibrating an optical tracking system.