Boy, is my face red. I just uploaded two videos about intrinsic Kinect calibration to YouTube, and wrote two blog posts about intrinsic and extrinsic calibration, respectively, and now I find out that the factory calibration data I’ve always suspected was stored in the Kinect’s non-volatile RAM has actually been reverse-engineered. With the official Microsoft SDK out that should definitely not have been a surprise. Oh, well, my excuse is I’ve been focusing on other things lately.

So, how good is it? A bit too early to tell, because some bits and pieces are still not understood, but here’s what I know already. As I mentioned in the post on intrinsic calibration, there are several required pieces of calibration data:

1. 2D lens distortion correction for the color camera.

2. 2D lens distortion correction for the virtual depth camera.

3. Non-linear depth correction (caused by IR camera lens distortion) for the virtual depth camera.

4. Conversion formula from (depth-corrected) raw disparity values (what’s in the Kinect’s depth frames) to camera-space Z values.

5. Unprojection matrix for the virtual depth camera, to map depth pixels out into camera-aligned 3D space.

6. Projection matrix to map lens-corrected color pixels onto the unprojected depth image.

Of these components, number 4 was the easiest to find. The calibration data contains measurements of the Kinect’s camera layout, and those are all that’s needed to convert from raw disparity to Z value. I grabbed those data from my current Kinect, and they are similar to the values I obtained with my own calibration procedure. There are significant differences, but at least I’m certain that I’m extracting and parsing the calibration parameters correctly (see below for some analysis).
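As a minimal sketch of what such a conversion can look like, the snippet below uses plain stereo triangulation. The model (a sub-pixel disparity counted down from an offset), the function name, and all numeric values are assumptions made up for illustration; they are not values read from a Kinect and may not match the form the factory data actually uses.

```python
def disparity_to_z(raw, baseline_cm, focal_px, disparity_offset, subpixel=0.125):
    """Convert one raw disparity value to camera-space Z (same unit as baseline).

    Assumed model (NOT taken from the Kinect's calibration data):
      true disparity in pixels = subpixel * (disparity_offset - raw)
      Z = baseline * focal / disparity   (standard stereo triangulation)
    """
    disparity_px = subpixel * (disparity_offset - raw)
    if disparity_px <= 0.0:
        raise ValueError("raw disparity is at or beyond the model's infinity point")
    return baseline_cm * focal_px / disparity_px


# Placeholder parameters, for illustration only.
BASELINE_CM = 7.5
FOCAL_PX = 580.0
DISPARITY_OFFSET = 1090.0

near = disparity_to_z(400, BASELINE_CM, FOCAL_PX, DISPARITY_OFFSET)
far = disparity_to_z(900, BASELINE_CM, FOCAL_PX, DISPARITY_OFFSET)
print(f"raw 400 -> {near:.1f} cm, raw 900 -> {far:.1f} cm")
```

In this model, larger raw values mean larger distances, and the resolution in Z gets coarser the farther away a surface is.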

Number 5 is a mite fuzzier. Those same layout measurements also imply an unprojection matrix, under the assumption that the virtual depth camera’s pixel grid is exactly upright and square. Since that’s usually not the case, there might be some undiscovered parameters describing pixel skew and aspect ratio. But the unprojection matrix I created from the known values was very close to the one I created myself, minus a small skew component and a tiny deviation in aspect ratio.
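To make the "upright and square" assumption concrete, here is a hedged sketch of how an unprojection matrix could be assembled from a focal length, a principal point, and a disparity-to-Z relation of the form Z = 1 / (a * raw + b). The parameter names, the axis convention (Z pointing away from the camera), and the numbers are illustrative assumptions, not the layout of the factory data or of the Kinect package.

```python
def make_unprojection(focal_px, cx, cy, a, b):
    """Build a 4x4 matrix mapping (u, v, raw, 1) to homogeneous camera space.

    Assumes square, upright pixels (no skew, aspect ratio 1) and
    Z = 1 / (a * raw + b). Skew or a non-unit aspect ratio would show up
    as extra non-zero entries in the upper-left 2x2 block.
    """
    return [
        [1.0 / focal_px, 0.0, 0.0, -cx / focal_px],
        [0.0, 1.0 / focal_px, 0.0, -cy / focal_px],
        [0.0, 0.0, 0.0, 1.0],
        [0.0, 0.0, a, b],
    ]


def unproject(matrix, u, v, raw):
    """Apply the matrix to a depth pixel and do the homogeneous divide."""
    p = (u, v, raw, 1.0)
    x, y, z, w = (sum(m * c for m, c in zip(row, p)) for row in matrix)
    return (x / w, y / w, z / w)


# Placeholder values, for illustration only.
A = -0.125 / 4350.0
B = 0.125 * 1090.0 / 4350.0
M = make_unprojection(focal_px=580.0, cx=320.0, cy=240.0, a=A, b=B)

center = unproject(M, 320.0, 240.0, 400.0)
corner = unproject(M, 0.0, 0.0, 400.0)
print(center, corner)
```

The homogeneous divide is what turns the linear expression a * raw + b into the reciprocal needed for Z, which is why a single 4×4 matrix can carry the whole conversion.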

There is no sign of number 3 anywhere in the decoded data. The Kinect’s depth camera has very obvious non-linear depth distortion, especially in the corners of the depth image, and correcting for that is a very simple procedure. In the calibration data, the correction would most probably be represented by two sets of coefficients for bivariate polynomials, but I didn’t see any evidence of that.

When someone asked me about factory calibration in the comments on the calibration video, I guessed that the focus would be primarily on mapping the color image onto the depth image, and less on accurately mapping the depth image back out into 3D space. While accurate 3D reconstruction is the most important point for me, it’s not useful for the Kinect’s intended purpose, and that might be why depth correction is not part of factory calibration. Depth correction parameters might still be discovered, but until further notice I believe they’re not there.

Lastly, numbers 1, 2, and 6 are handled, but in a strange way, by being all mashed together. The known calibration data contain coefficients for a third-degree bivariate polynomial that slightly perturbs depth pixel locations. On top of that there is a horizontal pixel shift depending on a depth pixel’s camera-space Z value. Taken together, these parameters can map depth pixels to color pixels non-linearly, accounting for 2D distortion in both cameras in one go. Essentially, plugging a depth pixel and its Z value into the formulas results in a color pixel; in other words, this is a mapping from depth image space into color image space (providing scattered depth measurements in the color image) rather than the more traditional texture mapping from color image space into depth image space. It makes sense for the Kinect’s intended purpose (finding the Z position of objects that have been identified by their color), but it’s backwards for texture-mapping 3D geometry.
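The two-step structure described above can be sketched as follows. The coefficient order, the function names, and especially the form of the Z-dependent shift (a stereo-style `shift_scale / z + shift_offset`) are assumptions for illustration; the actual factory parameterization may differ.

```python
def poly3(coeffs, x, y):
    """Evaluate a third-degree bivariate polynomial.

    coeffs holds 10 values in the (assumed) order
    1, x, y, x^2, x*y, y^2, x^3, x^2*y, x*y^2, y^3.
    """
    terms = (1.0, x, y, x * x, x * y, y * y,
             x ** 3, x * x * y, x * y * y, y ** 3)
    return sum(c * t for c, t in zip(coeffs, terms))


def depth_to_color(x, y, z, coeffs_x, coeffs_y, shift_scale, shift_offset):
    """Map depth pixel (x, y) with camera-space depth z to a color pixel.

    Step 1: perturb the pixel position with the two polynomials.
    Step 2: add a horizontal shift that depends only on z
            (hypothetical form: shift_scale / z + shift_offset).
    """
    px = x + poly3(coeffs_x, x, y)
    py = y + poly3(coeffs_y, x, y)
    return (px + shift_scale / z + shift_offset, py)


# Placeholder coefficients: no polynomial distortion, made-up shift parameters.
ZERO = [0.0] * 10
near = depth_to_color(100.0, 50.0, 50.0, ZERO, ZERO, 2000.0, -5.0)
far = depth_to_color(100.0, 50.0, 200.0, ZERO, ZERO, 2000.0, -5.0)
print(near, far)
```

Note that in this form the row coordinate of the result never depends on z; only the column does.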

That said, the horizontal pixel shift operator assumes that the pixel grids of the color camera and the virtual depth camera are exactly aligned. Because polynomial distortion is applied to depth pixels before the Z-based shift is applied, there is no way to account for rotated pixel grids. That’s definitely not the greatest way of handling it.

Experiments

Now’s the time to do some experiments with the factory calibration. Fortunately, I have a calibration target with very precisely known dimensions (the semi-transparent checkerboard). After adding a factory calibration parser to the Kinect code, and comparing both my own and the factory calibration on the target, here are the results. The target is exactly flat, 17.5″ wide, and 10.5″ tall. With each calibration in turn, I measured all four sides of the target using KinectViewer.

| Edge | Actual size | My calibration | Error (mine) | Factory calibration | Error (factory) |
|---|---|---|---|---|---|
| Top edge | 17.5″ | 17.471″ | -0.166% | 17.771″ | 1.549% |
| Bottom edge | 17.5″ | 17.595″ | 0.543% | 17.911″ | 2.349% |
| Left edge | 10.5″ | 10.524″ | 0.229% | 10.699″ | 1.895% |
| Right edge | 10.5″ | 10.586″ | 0.819% | 10.791″ | 2.771% |

I should mention that my own calibration is a quick&dirty one using only four tie points (it’s exactly the one I created in the calibration tutorial video; I forgot to back up the “real” calibration beforehand — d’oh!). Still, it is already significantly better than the factory one. Both calibrations are skewed towards the lower-right corner of the image (the right and bottom edges are oversized), but the overall RMS error of mine is 0.511%, and the factory’s is 2.190%, more than a factor of 4 worse. This, of course, is assuming that there is no additional depth-related “secret sauce” in the factory calibration data.
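For anyone who wants to check the arithmetic, the sketch below recomputes the per-edge relative errors and the RMS figures from the measurements in the table. It assumes the RMS is taken over the four per-edge relative errors, which reproduces the 0.511% and 2.190% quoted above; the variable and function names are just for this example.

```python
import math

# Edge lengths in inches, copied from the table above.
ACTUAL = {"top": 17.5, "bottom": 17.5, "left": 10.5, "right": 10.5}
MINE = {"top": 17.471, "bottom": 17.595, "left": 10.524, "right": 10.586}
FACTORY = {"top": 17.771, "bottom": 17.911, "left": 10.699, "right": 10.791}


def relative_errors_percent(measured, actual):
    """Signed relative error of each edge, in percent."""
    return {edge: 100.0 * (measured[edge] - actual[edge]) / actual[edge]
            for edge in actual}


def rms(values):
    """Root mean square of a sequence of numbers."""
    values = list(values)
    return math.sqrt(sum(v * v for v in values) / len(values))


rms_mine = rms(relative_errors_percent(MINE, ACTUAL).values())
rms_factory = rms(relative_errors_percent(FACTORY, ACTUAL).values())
print(f"custom: {rms_mine:.3f}%  factory: {rms_factory:.3f}%  "
      f"ratio: {rms_factory / rms_mine:.2f}")
```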

Is custom calibration worth the extra effort? That’s for everyone to decide for themselves, but after looking at these numbers, I don’t feel so bad for having spent the time calibrating each of my Kinects. I should also mention that my calibration does not contain depth correction, because that wasn’t part of the tutorial video. My software already supports it, and it should give even better overall results.

Update: I re-did the quick&dirty calibration used in the table above with non-linear depth correction and 12 tie points (to see how far it can be pushed), and the resulting RMS error for the same target, over a range of distances from the Kinect, is 0.188%. That’s not too shabby; a 2m object would be measured with an error of ±3.76mm (with factory calibration, the error would be ±43.8mm).
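The millimeter figures follow directly from scaling the relative RMS error by the object size, as this small sketch shows (the function name is made up for the example):

```python
def absolute_error_mm(size_mm, rms_error_percent):
    """Expected absolute measurement error for an object of the given size."""
    return size_mm * rms_error_percent / 100.0


custom_mm = absolute_error_mm(2000.0, 0.188)   # custom calibration with depth correction
factory_mm = absolute_error_mm(2000.0, 2.190)  # factory calibration
print(f"custom: {custom_mm:.2f} mm, factory: {factory_mm:.1f} mm")
```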

I haven’t tested color calibration yet because I still have to convert the factory calibration parameters to my software’s way of doing things. But I expect that factory color calibration will be better than mine, because it includes non-linear lens correction, and is the main intended application for the Kinect.

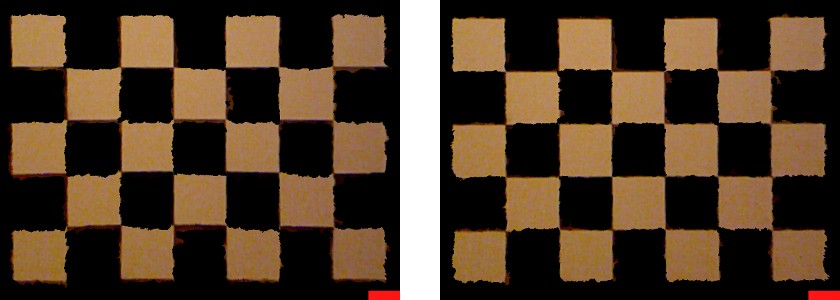

Update: I finished color calibration, and compared factory calibration to my own. I was surprised that factory calibration was slightly worse (see Figure 1). In the factory calibration, the depth image was overall too “low”, meaning that foreground objects had hanging fringes of background color at their bottoms. In my own calibration, color and geometry were very well aligned, even though my software does not do non-linear lens correction. Fortunately, the Kinect camera’s lenses are quite good.

Figure 1: Comparison between factory color calibration (left) and custom color calibration (right). While both are good, foreground objects in the left image have background-colored fringes at their bottoms, indicating that the depth image is generally mapped too low. Alignment is significantly better in the right image.

Yet Another Update: As of version 2.8, my Kinect package has a utility to download factory calibration data from the Kinect (KinectUtil getCalib <camera index>). However, there is one minor issue. My own calibration procedure uses centimeters as world-coordinate units (that’s not hard-coded in, but just how I specify the size of the calibration target on the command line), and the KinectViewer application assumes centimeters as world-space units (now that’s hard-coded in). As a result, when using factory calibration data, the Kinect’s image won’t immediately show up centered in the window when starting KinectViewer. One has to zoom out a bit first, most easily by rolling the mouse wheel up about ten clicks, but then everything works as normal.

That’s great work! I am interested in the Kinect. Can you share this code with me via email?

Thank you so much.

I can’t send the code to you, but you’re welcome to download it from the project web site.

Hi,

I am currently working with matlab to align the RGB with the depth data, and I cannot seem to find the parameters. You said in the beginning of the article that the intrinsic and extrinsic parameters are in the memory of the sensor. My question is how do I access that information.

Thank you

They are a little hard to get. There are USB protocol commands to get the parameters in four chunks. You can look at the source code for my Kinect::Camera class, specifically the getCalibrationParameters() method.

Hi, I am trying to get the comparison between factory calibration and calibration done using your software. Can you possibly share the factory calibration parser? I’m the ultimate beginner in C++, and with so many typenames and typedefs introduced, it’s really confusing. Should I start with newCalibration.cpp?

You can download the factory calibration data via KinectUtil -getCalib. To download from the first Kinect on your computer, use -getCalib 0. This will create an intrinsic calibration file in the Kinect package’s configuration directory, named after the serial number of your Kinect. The file just contains two 4×4 matrices: The projective transformation from depth image space into 3D camera space, and the projective transformation from 3D camera space into color image space (for texture mapping).

Thank you for the reply! Just to be sure, so that I can parse this right: does the custom calibration done by your software produce the same structure in the .dat file too?

That’s correct, yes. Two 4×4 matrices (depth then color) in row-major order, 64-bit floating point values, little-endian byte order.
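Going only by that description (two 4×4 matrices, row-major, 64-bit little-endian floats, and nothing else in the file), a reader could be sketched like this. The function name is made up, and the strict 256-byte length check is an assumption based on the statement that the file contains just the two matrices.

```python
import struct

MATRIX_BYTES = 16 * 8  # one 4x4 matrix of 64-bit floats


def parse_calibration(data):
    """Split raw file contents into (depth_matrix, color_matrix).

    Each matrix is returned as a list of four rows of four floats.
    """
    if len(data) != 2 * MATRIX_BYTES:
        raise ValueError(f"expected exactly 256 bytes, got {len(data)}")
    values = struct.unpack("<32d", data)  # "<" = little-endian, "d" = float64
    rows = [list(values[i:i + 4]) for i in range(0, 32, 4)]
    return rows[:4], rows[4:]


# Round-trip demonstration with synthetic data instead of a real file.
identity = [1.0 if r == c else 0.0 for r in range(4) for c in range(4)]
counting = [float(i) for i in range(16)]
blob = struct.pack("<32d", *(identity + counting))
depth_matrix, color_matrix = parse_calibration(blob)
print(depth_matrix[0], color_matrix[3])
```

For a real file, the bytes would come from something like `open(path, "rb").read()`, where `path` points at the calibration file in the Kinect package’s configuration directory.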

Hi okreylos,

I’m trying to set up SARndbox 1.5 with Kinect 2.8 and Vrui 3.1. Apparently all is working well: Vrui is OK, I used getCalib, and when I run RawKinectViewer I get both images, depth on the left, color on the right.

When I run ./bin/KinectViewer -c 0 I get a black image, but looking through the program’s menus I found the “Show streaming dialog” option, clicked the “Show from camera” button, and got my own image when I stand about 1 m away or closer.

I tried ./bin/SARndbox -h and found the help, then I used:

Then I ran ./bin/SARndbox -c 0 and got a green textured image on the projector. The Kinect sensor works (the IR sensor is on, like when I use RawKinectViewer and KinectViewer), and the terminal shows 0.155481 x 0.15066.

But nothing happens; I move closer and farther away and the software does not react.

I want to know if it is possible to get a “trial mode” (and how) to check if the software is working well before building a sandbox, to avoid spending money if this does not work. 🙂

-or- if calibration is essential to get the software working.

-or- if I am missing something else.

Please help!

Thanks!

To test the sandbox software, you can simply point the Kinect at a wall, or down at the floor (floor is better, but you need a tripod or something). That’s how I do it.

But before running SARndbox, you need to perform the essential calibration steps, as explained in the SARndbox README file and on my installation article here. Once you have BoxLayout.txt and ProjectorMatrix.dat files, you run the software as ./bin/SARndbox -uhm HeightColorMap.cpt -fpv, and you can immediately use it.

If you don’t have a projector set up yet, skip the projector calibration step and omit the -fpv (“fixed projector view”) command line argument. You’ll get a regular 3D view of the scanned surface, but everything else will work as normal.

Hi okreylos, very, VERY FAST response, thanks!

So (just to check), at this point it is completely normal to get the green textured image from the SARndbox program without any interactive response, and only after the calibration process will I get the interactive reaction. Is that right?

Cheers from Chile!

Yes, if you point the Kinect at a flat surface, the image will be green after calibration because you’re seeing the zero-elevation color. Place a small object into the Kinect’s field of view and leave it still for 1 second, and it will show up.

BTW: If you have further questions, please post a comment in my Build your own Augmented Reality Sandbox article; that way, they are more on-topic and others will have an easier time finding them.

Great, I’ll do that, THANKS!

Awesome work Oliver!

I’m interested in the non-linear depth correction (item 3) you mentioned was not included in the video (you mentioned it in your ‘update’).

Was this done as part of the checkerboard procedure, or with some other calibration tool/procedure of yours?

The depth correction is an optional step before grid-based intrinsic calibration. It works by pointing the Kinect orthogonally at a flat surface from a range of distances and capturing a depth image. The software then fits one plane to each depth image by least-squares fitting, and calculates a linear correction coefficient for each pixel. The coefficients are smoothed and compressed by approximating with a bivariate spline. I need to make a video showing that step.
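To illustrate the idea (not the actual implementation in the Kinect package), the sketch below fits a plane d = a*x + b*y + c to each captured depth frame by least squares, then fits a per-pixel linear correction (scale and offset) that maps each pixel’s raw values onto the fitted planes. Treating the correction as a scale-plus-offset pair is an assumption, and the spline smoothing/compression step is left out.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(frame):
    """Least-squares fit of d = a*x + b*y + c to one depth frame (list of rows)."""
    sxx = sxy = sx = syy = sy = n = sxd = syd = sd = 0.0
    for y, row in enumerate(frame):
        for x, d in enumerate(row):
            sxx += x * x
            sxy += x * y
            sx += x
            syy += y * y
            sy += y
            n += 1.0
            sxd += x * d
            syd += y * d
            sd += d
    # Normal equations (A^T A) p = A^T d for rows (x, y, 1), solved by Cramer's rule.
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxd, syd, sd]
    det = det3(m)
    if det == 0.0:
        raise ValueError("degenerate frame: cannot fit a plane")
    solution = []
    for col in range(3):
        replaced = [[rhs[r] if c == col else m[r][c] for c in range(3)]
                    for r in range(3)]
        solution.append(det3(replaced) / det)
    return tuple(solution)  # (a, b, c)


def fit_line(raw, ideal):
    """Least-squares (scale, offset) such that scale * raw + offset ~ ideal."""
    count = len(raw)
    mean_r = sum(raw) / count
    mean_i = sum(ideal) / count
    var = sum((r - mean_r) ** 2 for r in raw)
    if var == 0.0:
        raise ValueError("need frames captured at different distances")
    cov = sum((r - mean_r) * (i - mean_i) for r, i in zip(raw, ideal))
    scale = cov / var
    return scale, mean_i - scale * mean_r


def fit_corrections(frames):
    """Per-pixel (scale, offset) corrections from frames of a flat surface."""
    planes = [fit_plane(frame) for frame in frames]
    height, width = len(frames[0]), len(frames[0][0])
    return [[fit_line([frame[y][x] for frame in frames],
                      [a * x + b * y + c for a, b, c in planes])
             for x in range(width)]
            for y in range(height)]


# Synthetic, undistorted frames of a slightly tilted wall at three distances.
frames = [[[0.5 * x + 0.25 * y + c for x in range(4)] for y in range(3)]
          for c in (400.0, 600.0, 800.0)]
corrections = fit_corrections(frames)
print(corrections[0][0])
```

With undistorted input like the synthetic frames here, every pixel’s correction comes out as (approximately) scale 1 and offset 0; a real sensor would produce values that drift away from that towards the image corners.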

Sorry, I am new to the Kinect, so maybe these questions will be stupid, but still...

Does your software work on Windows?

Which drivers should I install? Can it be the Microsoft SDK’s, or others?

And if it works on Windows, can you tell me how I can install it?

My goal is to calibrate my Kinect model 1473; the other open source calibration drivers that I found don’t work with that model. I can see your software works with that model too, so I want to use it to calibrate my Kinect. Is it possible on Windows, and with which drivers?

Sorry for my English.

Thanks

Thanks for the very informative post and I’m super glad that somebody spent the time to compare how the factory calibration values compare against manual calibration values.

I was able to download my Kinect’s factory calibration data via your toolbox. Is there a straightforward way to convert the obtained matrices to camera projection matrix (the 3×4 P matrix) for the RGB and the depth camera and also get the transformation matrix (extrinsics) between the two cameras? This would make things a lot easier for my particular application.

Thanks in advance.

Meanwhile, as I was waiting for a reply, I started to work with the data I grabbed a bit to see if I can project known 3D points into the depth image.

I got the color shift stuff sorted out, and I was able to successfully map the color image pixels to depth image pixels (essentially determine what color a particular depth pixel maps to).

I’m now stuck at using the depth unprojection matrix.

By examining lines 362 to 383 of KinectUtil.cpp, I did something like this (pseudo-code):

    depthPoint = [double(x) / 640
                  double(y) / 480
                  rawDepth / maxDepth
                  1.0 ];
    unprojected = depthUnproject * depthPoint
    unprojected = unprojected / unprojected[3]

(Note that rawDepth is the ushort value I get from the depth map image).

However, I get a point cloud that does not resemble anything 🙁

How am I supposed to unproject using the matrix that your code gives me? I’m stumped and have already wasted a full work day on this.

Your help is hugely appreciated : )

Look at the Projector class inside the Kinect library. It generates triangle meshes from depth images, projects them out into 3D camera-fixed space, and texture-maps them with color images.
