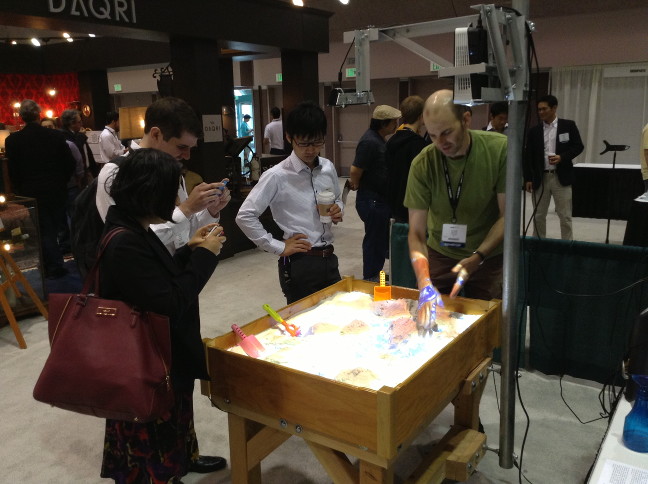

I’ve just returned from the 2013 Augmented World Expo, where we showcased our Augmented Reality Sandbox. This marked the first time we took the sandbox on the road; we had only shown it publicly twice before, during UC Davis’ annual open house in 2012 and 2013. The first obstacle popped up right from the get-go: the sandbox didn’t fit through the building doors! We had to remove the front door’s center column to get the sandbox out and into the van. And we needed a forklift to get it out of the van at the expo, but fortunately there were pros around.

But after that, things went smoothly. We set up the sandbox on Monday afternoon, and when we turned it on, it just so happened to be already calibrated. I still cannot explain how that was even possible, but sometimes you just have to roll with it. We weren’t so lucky the next morning (showtime!): when we turned it back on, calibration had gone all to hell, and my somewhat frantic efforts to get it back in line didn’t work. But when I unplugged the Kinect and plugged it back into a different USB port, the good calibration was suddenly back.

My best guess is that a USB communication problem between the Kinect and the computer caused the Kinect to advertise itself with the wrong serial number. Since the custom calibration data used by my Kinect code (and, by extension, by the AR Sandbox code) is keyed to the device’s serial number, the code loaded the wrong intrinsic calibration file (or downloaded the device’s factory calibration, which isn’t all that good). The result was a mismatch between the intrinsic and extrinsic calibrations, and the projection was way off.
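To make the failure mode concrete, here is a minimal Python sketch of per-device calibration lookup keyed by serial number. It is not the actual Kinect or AR Sandbox code: the file naming scheme (`intrinsics-<serial>.dat`), the `FACTORY` fallback marker, and the `check_consistency` guard are hypothetical. It shows how a garbled serial number silently falls through to the factory calibration, and how recording the serial used for the extrinsic calibration would let the software refuse to run with mismatched data instead of projecting garbage.

```python
from pathlib import Path

# Marker meaning "no custom file found; use the device's built-in factory calibration".
FACTORY = "factory"


def intrinsics_path(serial: str, calib_dir: Path) -> Path:
    # Hypothetical naming scheme: one intrinsic calibration file per device serial number.
    return calib_dir / f"intrinsics-{serial}.dat"


def select_intrinsics(serial: str, calib_dir: Path) -> str:
    """Return the custom intrinsic calibration file for `serial`,
    or FACTORY if none exists. A garbled serial silently lands here."""
    path = intrinsics_path(serial, calib_dir)
    return str(path) if path.is_file() else FACTORY


def check_consistency(reported_serial: str, extrinsics_serial: str) -> None:
    """Refuse to run when the camera's reported serial differs from the serial
    recorded when the extrinsic (camera-to-projector) calibration was made."""
    if reported_serial != extrinsics_serial:
        raise RuntimeError(
            f"camera reports serial {reported_serial!r}, but the extrinsic calibration "
            f"was made with {extrinsics_serial!r}; replug the camera or recalibrate"
        )
```

The guard trades a silent, badly aligned projection for an explicit error message, which is arguably easier to diagnose in the middle of a show.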

While this particular problem fortunately didn’t recur, we kept having USB trouble throughout the show. The Kinect’s color camera would turn itself off during use at seemingly random times. Due to the way the AR Sandbox code is set up, the terrain scanning module kept working, meaning that the topographic map was still updated properly, but rain detection failed. Even though rain detection is currently based purely on depth information, the code still expected a color image stream, because the original method used both depth and color. So we had to stop the sandbox, reboot the Kinect, and restart the sandbox probably a dozen times each day. Not a huge deal (rebooting and restarting only takes a second), but still annoying and embarrassing.
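The stall can be illustrated with a small simulation. This Python sketch is hypothetical: the `RainDetector` class, its `require_color` flag, and the `max_skew` tolerance are made up for illustration, and the actual detection step is reduced to a counter. A detector that insists on a color frame taken close to each depth frame silently stops processing once the color stream dies, while one that only needs depth keeps going.

```python
class RainDetector:
    """Hypothetical sketch: a detector fed by separate depth and color callbacks.
    The actual rain detection is reduced to a frame counter."""

    def __init__(self, require_color: bool, max_skew: float = 0.1):
        self.require_color = require_color  # legacy behavior: insist on a matching color frame
        self.max_skew = max_skew            # max seconds between depth and color timestamps
        self.last_color_time = None
        self.frames_processed = 0

    def on_color_frame(self, timestamp: float) -> None:
        self.last_color_time = timestamp

    def on_depth_frame(self, timestamp: float) -> bool:
        """Process one depth frame; return False if it had to be skipped."""
        if self.require_color:
            stale = (self.last_color_time is None
                     or timestamp - self.last_color_time > self.max_skew)
            if stale:
                # No recent color frame: skip silently. Once the color camera
                # turns itself off, every depth frame after that is skipped.
                return False
        # Depth-only path: detection would run here on the depth image alone.
        self.frames_processed += 1
        return True


def simulate(detector: RainDetector) -> int:
    """Feed depth frames every 0.5 s; the color stream dies after t = 1.0 s."""
    for i in range(5):
        t = i * 0.5
        if t <= 1.0:
            detector.on_color_frame(t)
        detector.on_depth_frame(t)
    return detector.frames_processed
```

With the color stream dying after one second, the color-dependent detector processes the first 3 of 5 depth frames and nothing after that, while the depth-only detector processes all 5, just as the terrain scanner kept working at the show.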

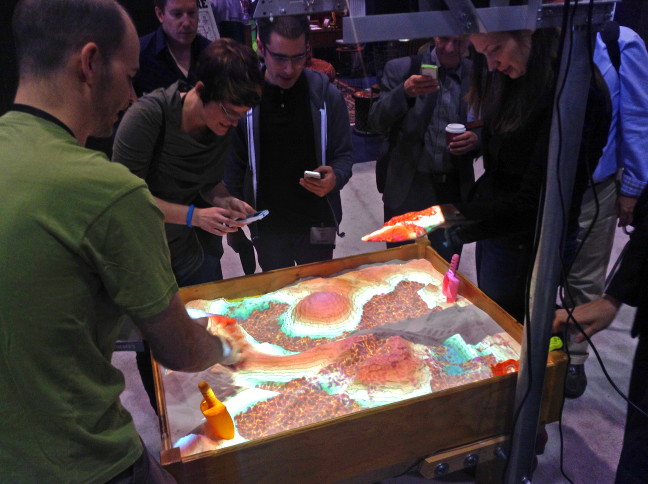

Figure 3: A good-sized crowd, and Lava! Spilling from a volcano! Photo provided by Marshall Millett.

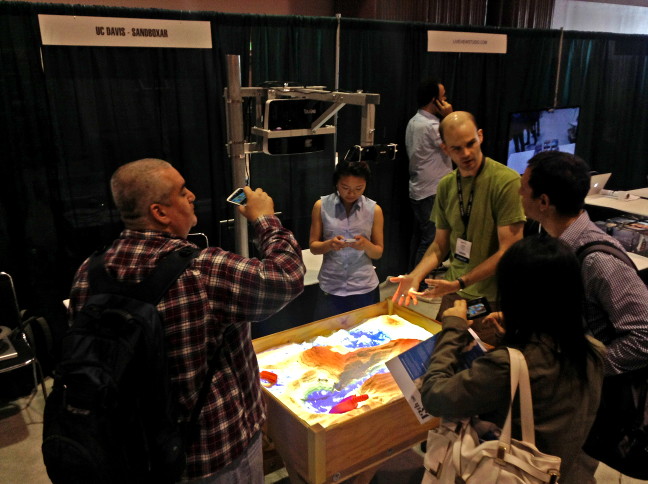

But apart from these minor issues, the sandbox worked great (see the figures). We had a lot of visitors, some of whom stayed for a long time, and we got generally good feedback. Here’s a funny mistake we made, though: we kept emphasizing that the AR Sandbox code is free software. At the end of the first day, a visitor asked us “well, if the software is open source, and the computer hardware is just store-bought, then what’s your intellectual property?” At first I didn’t understand the question, and thought it was about us giving the software away and therefore not “monetizing our IP,” but it turned out he had assumed that someone else had developed the software and made it open source, and we had just downloaded it. That misunderstanding had simply never occurred to me. I wonder how many first-day visitors had walked away with that impression. Oops.

Figure 4: Another one, just ’cause. After about 16 hours total of digging in the sand, I really appreciated how nice our particular type of sand feels. Smooth, cool, and very relaxing. Photo provided by Marshall Millett.

Overall, though, I’m calling this a success. We even got to play fast and loose a few times and showed experimental features we normally don’t. Some visitors built small volcanoes, so I quickly changed the shader code to turn the water into lava, to great amusement (see Figure 3). Fortunately, the code is set up so that a change to a shader takes effect in the running sandbox immediately, without a restart. We also played with an AR toy from a neighboring booth: Sphero, a little spherical robot controlled by a smartphone app. We built a racetrack of sorts in the sandbox, filled it with a little bit of water, and tried racing Sphero around the track, watching it push the virtual water out of the way in a surprisingly realistic manner (for that effect, I turned off the normal 1-second delay built into the topographic scanning code). Unfortunately, Sphero kept sinking into the sand and getting stuck, so we had to push it along a lot, but it was still fun.
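One common way to get shader changes into a running program is to poll the shader file’s modification time once per frame and recompile when it changes. The Python sketch below assumes that mechanism; the post doesn’t say how the AR Sandbox actually does it. `ShaderWatcher` and `compile_fn` are hypothetical names, with `compile_fn` standing in for whatever compiles and links the GPU program.

```python
import os


class ShaderWatcher:
    """Hypothetical sketch: recompile a shader whenever its source file changes."""

    def __init__(self, path, compile_fn):
        self.path = path
        self.compile_fn = compile_fn  # stand-in for the real GPU compile/link step
        self.last_mtime = None
        self.program = None

    def poll(self):
        """Call once per frame; returns the current program."""
        mtime = os.stat(self.path).st_mtime_ns
        if mtime != self.last_mtime:
            self.last_mtime = mtime  # don't retry a broken file every frame
            with open(self.path) as f:
                source = f.read()
            try:
                self.program = self.compile_fn(source)
            except Exception as err:
                # Keep running with the previous program instead of crashing.
                print(f"shader reload failed, keeping old program: {err}")
        return self.program
```

If compilation fails, the watcher keeps the previous program, so a typo in a lava shader would leave the water shader running rather than take down the sandbox in front of visitors.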

The reality of Augmented Reality

With my report on the AR Sandbox out of the way, on to the rest of the expo, and to the whole wide world of augmented reality in general. Being a VR researcher (of sorts) rather than an AR researcher, I was quite unfamiliar with what’s going on in that field. This was the first time I got an overview of what AR is really all about, and I feel I can now address some questions that I’ve had, or that others have asked me in the past.

What about Google Glass?

I had a longish discussion about Google Glass with a few friends a while ago, and their opinion was that it will never take off because it just looks too dorky. Now that I’ve seen a bunch of people running around with it up close, I can confirm that yes, indeed, it looks dorky. However, here’s something else that looks dorky: Bluetooth headsets. And we all know those never took off. The Google Glass killer app is pretty clear to me: hands-free text messaging, and navigation (either in the car or on foot) using a heads-up display. Make those, and the rest will be history. And then there will be legislation banning the use of Google Glass while driving, but by then it’ll be too late.

Is AR more than a novelty?

That’s tough to answer, as the field still appears to be in its infancy. But for most of the AR applications actually showcased at the expo, I have to ask: what’s the point? Take the AR pool table, for example. Similar in concept to the AR Sandbox, it’s a real pool table with a (2D) camera and a projector overhead. The system predicts shots in real time and projects them onto the table, with the primary goal of teaching the physics of pool, and in concept, and at first glance, it’s awesome. But here’s the thing: when I tried playing with it, I had a much harder time than with a normal pool table (I didn’t pocket a single ball). The issue was that the augmentation was just off enough to be more of a hindrance than a help. When I carefully aimed over the cue stick, the projected ball trajectory would be off by maybe a few degrees, and the conflicting visual cues from the real view and the augmentation threw me off every time (at least that’s my story, and I’m sticking to it). While that’s purely a technical limitation, the serious question is: how good does the technology have to become before it’s actually helpful, and how long is that going to take? Is AR making the same mistake VR made two decades ago, wildly overselling its own capabilities?
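A back-of-the-envelope calculation shows why a few degrees matters. Assuming (for illustration only) a 1 m shot and standard 57.15 mm (2¼ in) pool balls, the sideways miss distance is the shot length times the tangent of the angular error. At 2 degrees that comes to roughly 35 mm, more than half a ball’s diameter, which is a large error when aiming at a target that is itself only 57 mm wide.

```python
import math

BALL_DIAMETER_M = 0.05715  # standard pool ball, 2 1/4 in


def lateral_offset(distance_m: float, angle_error_deg: float) -> float:
    """Sideways miss after traveling `distance_m` along a line rotated by
    `angle_error_deg` away from the intended line."""
    return distance_m * math.tan(math.radians(angle_error_deg))


for deg in (1.0, 2.0, 3.0):
    off = lateral_offset(1.0, deg)
    print(f"{deg:.0f} deg over 1 m: {off * 1000:.1f} mm "
          f"= {off / BALL_DIAMETER_M:.2f} ball diameters")
```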

Another, more fundamental, example was a booth specializing in what I gather are “canonical” AR applications: have some physical gadget, point a smartphone or tablet at it, and get a 3D image or animation, or additional information. For example, they had a selection of printed pages, and the user was to point the smartphone at the page, hold it steady for a few seconds for the engine to recognize the page, and then overlaid 3D images with hotspots for interaction would appear. For example, in a furniture catalog page, after initial recognition succeeded, pointing the camera at a picture of a piece of furniture would bring up an “add to cart” dialog. This was technically very impressive, but the question remains: is the AR approach really better, easier, or more effective than downloading a virtual catalog, or going to a live web site, and using tried and true touchscreen interactions to tap on pictures to get a “buy me” dialog? Do I really have to hold a camera phone steady with outstretched arms, and aim with my whole upper body at a small picture on a printed page to do the same? Even if the augmentation weren’t all jittery? Or would I rather just go directly to Amazon.com? Even better, wouldn’t I rather point my smartphone at a QR code, and then get taken automatically to Amazon.com and its regular 2D interface?

It’s the same beef I have with the Minority Report interface (and with the Leap Motion, to some extent): if the task is entirely two-dimensional, a three-dimensional interface doesn’t help; it hurts (often literally). Now, superficially, we’re doing the same thing in VR: we’re using hand-held input devices and forcing users to use their bodies to interact with a computer system. The difference is that we’re doing this (or at least should be doing it) for tasks that are naturally three-dimensional, such as 3D modeling, molecular design, or 3D visualization, where there is a clear and demonstrated benefit to using 3D interactions. This means that even if it does get uncomfortable (it usually doesn’t, long story), the benefits still outweigh the downsides. If someone were to propose a VR version of a web browser or text editor to me, I’d tell them they’re insane.

There were more examples like the AR catalog, and they all had one thing in common: be it a physical cube that turns into a virtual digital clock or weather monitor when viewed through a smartphone app, or a printed page that shows additional media, all these things are easier, faster, and more comfortable without AR. Even the showcased virtual anatomy lesson, which is a 3D task, would have been better without AR, but that’s more due to a poor implementation of the necessary user interface than the fundamentals.

Mind you, I’m not saying that AR is useless in principle. There are clearly useful applications, and several were showcased. I already mentioned heads-up navigation, I saw an AR welding trainer, and there was the LEGO “virtual box” I had seen “live” in a LEGO store before. Let’s analyze why this one works. The virtual box attacks a clearly defined problem: before spending big bucks on a LEGO set in the store, you want to see what the finished model will look like. As LEGO is inherently 3D, a standard 2D picture on the box won’t do it justice. So you have the box in your hands, and you walk up to a camera station, at which point the AR system embeds a 3D rendering of the built model into the live camera image and shows it on a monitor. So far, so good. But the monitor image is still 2D. As we all know, to understand 3D shape from a (monoscopic) 2D picture, you need motion parallax, i.e., you need to move and rotate the view. The virtual box makes this easy: instead of using an indirect interface, say using a joystick or mouse or touchscreen, you simply move and rotate the physical box. Because the model’s 3D rendering is locked to the box, this works completely intuitively. An important detail is that instead of holding a camera/screen combination and walking around a fixed real object, you are manipulating an object you are already holding in your hands in front of a fixed camera. And because now a 3D interface is used to accomplish a 3D task, the whole thing works and has a practical benefit over non-AR approaches. The same is true for navigation in the real 3D world and difficult three-dimensional tasks like welding, but it’s decidedly not true for checking the time or the current weather, because a clock, or a cloud icon with a temperature label, are fundamentally 2D.
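The “locked to the box” part boils down to a transform composition: each frame, the tracker reports the box’s pose relative to the camera, and the renderer multiplies it with the model’s fixed offset relative to the box. The Python sketch below uses plain 4×4 homogeneous matrices; `box_to_camera` and the 5 cm `MODEL_IN_BOX` offset are hypothetical, and the marker tracking that produces the box pose is assumed to exist. Because turning the physical box changes `box_to_camera`, the model turns with it and no separate view control is needed.

```python
import math


def matmul(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def translate(x, y, z):
    return [[1.0, 0.0, 0.0, x],
            [0.0, 1.0, 0.0, y],
            [0.0, 0.0, 1.0, z],
            [0.0, 0.0, 0.0, 1.0]]


def rot_z(deg):
    """Rotation about the z axis by `deg` degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0, 0.0],
            [s, c, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]


def apply(m, p):
    """Transform a 3D point by a 4x4 homogeneous matrix (affine, so w stays 1)."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))


# Hypothetical: the virtual model sits 5 cm above the box's tracked origin.
MODEL_IN_BOX = translate(0.0, 0.0, 0.05)


def model_to_camera(box_to_camera):
    """Pose of the virtual model in camera coordinates for this frame."""
    return matmul(box_to_camera, MODEL_IN_BOX)


# Example frame: the box is held 0.5 m in front of the camera, rotated 90 degrees.
box_pose = matmul(translate(0.0, 0.0, 0.5), rot_z(90.0))
corner = apply(model_to_camera(box_pose), (0.1, 0.0, 0.0))
```

A model point 10 cm along the box’s x axis ends up 10 cm along the camera’s y axis after the 90 degree turn, 55 cm from the camera: rotating the box rotated the rendering with it.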

So I think the bottom line here is to learn the same truth VR had to learn: instead of coasting on novelty and gadgetry, understand the core strength of your technology, and then exploit that strength as much as you can. At all times, ask yourself this: am I applying an AR (or VR) solution to a problem just because I happen to be an AR (or VR) researcher/company, or am I doing it because the solution is truly better than the alternatives?
