Here is an interesting innovation. The developers at Cloudhead Games, who are working on The Gallery: Six Elements, a game/experience created for HMDs from the ground up, encountered motion sickness problems caused by explicit viewpoint rotation with the analog sticks on game controllers, and came up with a creative approach to mitigate it: instead of rotating the view smoothly, as conventional wisdom would suggest, they rotate it discretely, in relatively large increments (around 30°). And apparently, it works. What do you know. In their explanation, they refer to the way dancers keep themselves from getting dizzy during pirouettes by fixing their heads in one direction while their bodies spin, and then rapidly whipping their heads around back to the original direction. But watch them explain and demonstrate it themselves. Funny thing is, I knew that thing about ice dancers, but never thought to apply it to viewpoint rotation in VR.

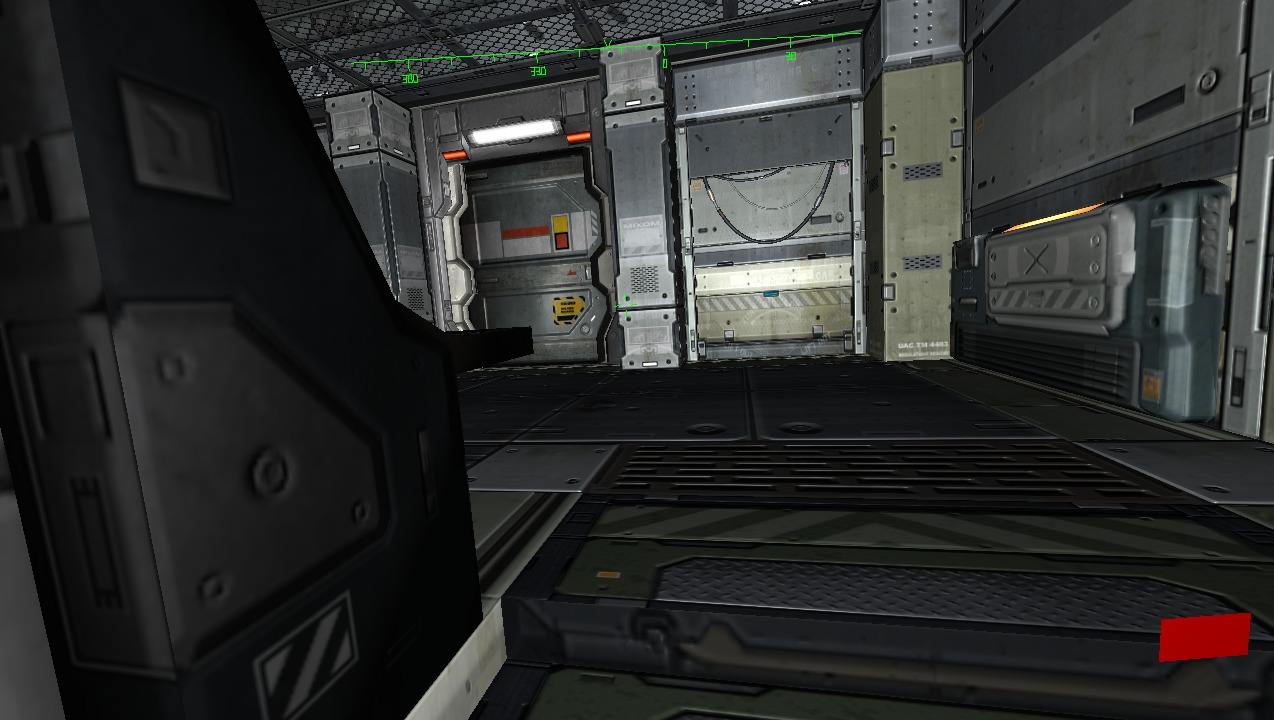

Figure 1: A still from the video showing the initial implementation of “VR Comfort Mode” in Vrui.

This is very timely, because I have recently been involved in an ongoing discussion about input devices for VR, and how they should be handled by software, and how there should not be a hardware standard but a middleware standard, and yadda yadda yadda. So I have been talking up Vrui’s input model quite a bit, and now is the time to put up or shut up, and show how it can handle some new idea like this.

Vrui has a large number of navigation metaphor plug-ins, and several of those use controller-based viewpoint rotation and could potentially benefit from this new “VR Comfort Mode” (I wonder if that moniker is going to stick now). But to keep things interesting, I didn’t implement it exactly the same way Cloudhead Games did — they replaced smooth motion using an analog stick with discrete steps every time the stick is pushed to either extreme — but instead implemented it for the canonical “mouse look + WASD” navigation method from first-person games. This added several interesting wrinkles.

For one, with mouse look there is no discrete button-like event to initiate a discrete turn; instead, smooth motions to the left or right need to be translated into a sequence of discrete steps, and, very importantly, the total angle of rotation after stepping must match the total angle of rotation imposed by the user, or experienced players who can make precise turns from muscle memory would be very upset. The second wrinkle is mouse aim. When there is no 6-DOF input device for free aiming, and players do not want aiming reticles that are glued to their faces, it still must be possible to point the reticle in any arbitrary direction, even if viewpoint rotation itself is quantized. And if there is some form of compass HUD indicating viewing or pointing direction, that has to work seamlessly as well.

Turns out, all this was a piece of cake. It only took five lines of code or so in Vrui’s FPSNavigationTool class to enable quantized azimuth rotation with freely configurable quantization steps, and due to Vrui’s architecture, it now works across all Vrui applications. For posterity’s sake, I recorded myself trying it in the Rift for the first time, right after compiling the modified tool:

And here is how I did it: the FPSNavigationTool class, like all other tool classes derived from SurfaceNavigationTool, applies azimuth rotation by rotating physical space around the local “up” direction by the azimuth angle selected by the tool. Now, instead of applying the azimuth angle directly, I quantize it, rounding to the nearest multiple of the step size, using the simple qAzimuth = floor(azimuth/step + 0.5)*step formula. That’s all it takes; the only thing left is to correct the direction of the aiming reticle and compass HUD by applying a correction rotation of azimuth-qAzimuth around the Z axis during HUD rendering, and tada.
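To make the arithmetic concrete, here is a minimal sketch of the quantization and the HUD correction in Python. It is not the Vrui code (that lives in the C++ FPSNavigationTool class); the function names `quantize_azimuth` and `hud_correction` are made up for illustration, and angles are assumed to be in degrees for readability.

```python
import math


def quantize_azimuth(azimuth: float, step: float) -> float:
    """Round azimuth to the nearest multiple of step (the formula from the text)."""
    return math.floor(azimuth / step + 0.5) * step


def hud_correction(azimuth: float, step: float) -> float:
    """Residual angle (azimuth - qAzimuth) to apply to the reticle and compass HUD.

    The view is rendered at the quantized azimuth; rotating the HUD by this
    residual keeps the reticle pointing along the true, unquantized azimuth.
    """
    return azimuth - quantize_azimuth(azimuth, step)


if __name__ == "__main__":
    step = 30.0  # hypothetical step size in degrees
    for azimuth in (0.0, 14.0, 16.0, 44.0, 46.0, -20.0):
        q = quantize_azimuth(azimuth, step)
        c = hud_correction(azimuth, step)
        print(f"azimuth={azimuth:6.1f}  view={q:6.1f}  hud correction={c:6.1f}")
```

Because the view angle plus the correction always adds back up to the true azimuth, the reticle ends up exactly where the mouse says it should, and the correction never exceeds half a step in either direction.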

My first impression is that this new method works. I don’t normally suffer noticeably from rotation-induced motion sickness, but I did feel a difference. What remains now is to implement the same minor change in the other navigation tools, where applicable, and test quantized rotation on as many people as possible.

I saw the video by Cloudhead Games, and the logic makes total sense, but looking at a video I have to say it was hard to think it would actually feel OK. But as you said, gotta believe in those guys 😛 And you seem fine with it too!

The mouse solution looks very interesting indeed, and I am curious to test a feature like this out myself. I think the only negative I can immediately see… is that Let’s Play videos will be horrible to watch 😡 I guess game recording people might have to skip comfort mode 😉

Would flashing the screen black briefly (like the length required for change blindness to kick in) between the jumps help or make things worse?

Watching your video, it felt a bit jarring for me to have to figure out where everything went each time the view jumped; I’m not sure if the angle is just too much for me, or if it’s just that I didn’t know where to expect things to go since I wasn’t controlling the view myself.

I noticed the same thing watching the video, but with the HMD it wasn’t a problem. It might be the combination of head tracking and stereo that makes this work.

Btw, I think it might work better if the “jump borders” were recentered after each jump, so that you don’t have the view flickering back and forth if you’re looking in a direction close to the last jump.
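One possible reading of this suggestion is plain hysteresis: the view only jumps once the true azimuth has moved a little past the halfway border, so hovering near a border no longer flips the view back and forth. The sketch below is an illustration of that idea only, not something implemented in Vrui; the class name `HysteresisQuantizer` and the `margin` parameter are hypothetical, and angles are assumed to be in degrees.

```python
import math


class HysteresisQuantizer:
    """Quantize an azimuth to multiples of step, with a dead band at the borders."""

    def __init__(self, step: float, margin: float):
        self.step = step      # quantization step in degrees
        self.margin = margin  # extra angle past the half-step border before jumping
        self.q = 0.0          # currently displayed (quantized) azimuth

    def update(self, azimuth: float) -> float:
        # Keep the current view direction until the true azimuth has left the
        # current cell by more than the margin, then snap to the nearest multiple.
        if abs(azimuth - self.q) > self.step / 2.0 + self.margin:
            self.q = math.floor(azimuth / self.step + 0.5) * self.step
        return self.q


if __name__ == "__main__":
    quantizer = HysteresisQuantizer(step=30.0, margin=3.0)
    # Wobbling around the 15 degree border no longer flips the view each time.
    for azimuth in (14.0, 16.0, 19.0, 14.0, 11.0):
        print(azimuth, quantizer.update(azimuth))
```

The price is that the reticle correction (azimuth minus the displayed angle) can now grow to half a step plus the margin instead of half a step.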

Something I just thought of now: instead of discrete steps, for analog sticks and such, perhaps a literal ratchet behavior would work (only counting the movements away from the center position, ignoring movements towards the center). Without that cumulative effect (the speed of virtual motion equals the speed of physical motion, rather than being proportional to the deflection of the stick like it’s usually done), could the 1:1 feeling of turning be returned to the user without discretizing turning too much?

I tried it at GDC but was not particularly convinced. I didn’t test it long enough to see if I was less sick (I’m very sensitive!), but it totally broke the presence for me.

I think it might be one of these counter-intuitive things that work for some people and not for others. It might be that you and I are in the second group because we’ve been doing VR for so long. Cloudhead Games probably tried it mostly on new VR users. I did notice a difference, but like I said, it wasn’t much of a problem for me before.

As long as software has the option to do it, those people for whom it is an improvement can use it, and the rest of us can do more research. 🙂

Would it be possible to add these lines of code somewhere in hydra.cfg to apply this to the Hydra’s joystick, or would more things have to be rewritten?

I currently only made the change to the FPS navigation tool, the one that’s enabled by pressing Q, and then uses W, A, S, D and the mouse to move around. The other obvious candidate is the tool that uses an analog axis to rotate, and I would implement that in the same way as the Cloudhead Games people.
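For the analog-axis case, a rough sketch of the “one step per push to the extreme” behavior could look like the following. This is not existing Vrui code; the class name `SnapTurn`, the press and release thresholds, and the sign convention (positive axis values increase the azimuth) are all assumptions for illustration.

```python
import math


class SnapTurn:
    """Turn by one fixed step each time an analog axis is pushed to an extreme."""

    def __init__(self, step: float = 30.0, press: float = 0.9, release: float = 0.5):
        self.step = step        # turn increment in degrees
        self.press = press      # deflection that triggers a turn (assumed value)
        self.release = release  # deflection below which the stick re-arms (assumed value)
        self.armed = True       # True while the stick is allowed to trigger a turn
        self.azimuth = 0.0      # accumulated view azimuth in degrees

    def update(self, axis: float) -> float:
        """Feed one axis sample in [-1, 1]; return the current azimuth."""
        if self.armed and abs(axis) >= self.press:
            # Stick reached an extreme: turn once in the direction of the push.
            self.azimuth += math.copysign(self.step, axis)
            self.armed = False
        elif not self.armed and abs(axis) <= self.release:
            # Stick came back towards the center: allow the next turn.
            self.armed = True
        return self.azimuth


if __name__ == "__main__":
    turn = SnapTurn()
    for sample in (0.0, 0.5, 1.0, 1.0, 0.7, 0.2, -1.0):
        print(sample, turn.update(sample))
```

Holding the stick at the extreme produces a single step rather than a stream of them; the gap between the two thresholds keeps a noisy stick from firing twice.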