I’ve recently realized that I should urgently write about LiDAR Viewer, a Vrui-based interactive visualization application for massive-scale LiDAR (Light Detection and Ranging, essentially 3D laser scanning writ large) data.

Figure 1: Photo of a user viewing, and extracting features from, an aerial LiDAR scan of the Cosumnes River area in central California in a CAVE.

I’ve also realized, after going to the ILMF ’13 meeting, that I need to make a new video about LiDAR Viewer, demonstrating the rendering capabilities of the current and upcoming versions. This occurred to me when the movie I showed during my talk had a copyright notice from 2006(!) on it.

So here I am killing two birds with one stone: Meet the LiDAR Viewer!

We recently started a flood risk visualization project using high-resolution LiDAR surveys of the southern San Francisco bay, and while downloading a large number of LiDAR tiles through the (awful!) USGS CLICK interface — compare and contrast it with the similarly aimed, yet orders of magnitude better, Cal-Atlas Imagery Download Tool — I accidentally found a large data set covering downtown San Francisco (or, rather, all of San Francisco, and then some). Tall buildings and city streets are a lot more interesting than marshes and sandbanks, and so I decided to assemble the best possible 3D model of downtown San Francisco I could, based on available public domain LiDAR data and aerial photography, and show off LiDAR Viewer with it. The San Francisco LiDAR survey, flown in 2010, was co-sponsored by the USGS and San Francisco State University, towards goals of the American Recovery and Reinvestment Act (see the ARRA Golden Gate LiDAR Project Webpage for more details). The aerial photography is of unknown provenance and vintage, but was available for download as part of the “HiRes Urban Imagery” collection at Cal-Atlas. Based on the captured states of ongoing construction in San Francisco, I’m estimating the imagery was collected between 2007 and 2009. If someone out there knows details, please tell me.

In total, I downloaded about 22 GB of LiDAR data in LAS format, and about 9 GB of aerial color imagery in GeoTIFF format. The former through CLICK (ugh!), the latter through Cal-Atlas (aah!). That provided me with 832 million 3D points, and 3.2 billion color pixels to map onto them. Using LiDAR Viewer’s pre-processor, it took 19 minutes to convert the LAS files into a single LiDAR octree, and then 7.5 minutes to map the color images onto the points. Finally, it took 40 minutes to calculate the per-point normal vector information required for real-time illumination and splat rendering in LiDAR Viewer. For comparison, it took three days to download the LAS and GeoTIFF files (I did this on my computer at home, on AT&T DSL, not in my lab).

Talking in detail about LiDAR Viewer’s underlying technology here would be redundant (just go to the web site), but in a nutshell, LiDAR Viewer supports seamless exploration of data sets that are extremely large both spatially (hundreds of square kilometers) and in data size (terabytes), using out-of-core, multiresolution, view-dependent rendering techniques. Basically, it does for 3D point cloud data what Google Maps does for 2D imagery, and Google Earth does for global topography (or, for the VR-inclined, replace Google Maps with Image Viewer and Google Earth with Crusta).

Like all Vrui-based software, LiDAR Viewer is primarily aimed at holographic display environments with natural 3D interaction, i.e., CAVEs or fully-tracked head-mounted displays, or head-tracked 3D TVs. But I decided to use the desktop configuration of LiDAR Viewer for this video; for one, because I was doing this from home; second, to drive home the point that Vrui software on the desktop is used in pretty much exactly the same way as native desktop software, and that Vrui’s desktop mode is fully usable, and not a debugging-only afterthought like in other VR toolkits that shall remain unnamed. And third, because I really wanted to show off the awesome San Francisco scan, and it would have suffered if filmed with a video camera off a large-scale projection screen. And fourth, because I wanted to make the first 1 1/2 minutes of the video look as if the data were completely 2D, just to mess with people. 😉

So what are the new features in LiDAR Viewer? I’m still working towards version 3.0, which will address the two fundamental flaws of version 2.x: limited precision and pixel rendering.

Limited precision stems from LiDAR Viewer’s representation of point coordinates as 32-bit IEEE floating-point numbers. While floats can represent values between about -10^38 and +10^38, they only have around 7 decimal digits of precision. That sounds like a lot, and isn’t a problem for terrestrial LiDAR scans where spatial extents are usually in the hundreds of meters, and which are often not geo-referenced. But airborne LiDAR data are typically in UTM coordinates, where huge offset values are added to point coordinates. If a data set has 1mm natural accuracy and an extent of ±100 meters, but 4 million meters are added to all y coordinates, the accuracy suddenly drops to around 1m. That is clearly not acceptable. LiDAR Viewer 2.x employs a workaround that transparently removes these offset values, but with very large surveys covering hundreds of square kilometers, loss of accuracy still begins to creep in towards the edges of the data. While the use of IEEE floats is inexcusable in hindsight, LiDAR Viewer’s development started in 2004, when this problem wasn’t even on the horizon.
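The effect is easy to reproduce. Here is a minimal Python sketch (not LiDAR Viewer code; the helper names `to_float32` and `float32_spacing` and the sample coordinate are made up for illustration) that rounds a coordinate to a 32-bit float with and without a 4,000,000 m offset:

```python
import struct

def to_float32(x):
    """Round a Python float (64-bit) to the nearest 32-bit IEEE float."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def float32_spacing(x):
    """Gap between the 32-bit float nearest to positive x and the next larger one."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    next_up = struct.unpack("<f", struct.pack("<I", bits + 1))[0]
    return next_up - to_float32(x)

local_y = 12.345       # hypothetical y coordinate in meters, relative to the survey
offset = 4_000_000.0   # UTM-style offset added to every y coordinate

# Rounding error when the coordinate is stored as a 32-bit float
error_local = abs(to_float32(local_y) - local_y)
error_utm = abs(to_float32(offset + local_y) - (offset + local_y))

print("spacing near 100 m:      ", float32_spacing(100.0))
print("spacing near 4,000,000 m:", float32_spacing(offset))
print("error without offset:    ", error_local)
print("error with offset:       ", error_utm)
```

Near 4,000,000 m, adjacent 32-bit floats are 0.25 m apart, which is the same order of magnitude as the loss described above; near 100 m they are less than 0.01 mm apart.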

LiDAR Viewer 3.0 will solve the problem for good by using a multiresolution coordinate representation, which virtually allocates 80 bits for each coordinate, while only really storing 16 bits per coordinate for each point. 80 bits of precision would allow mapping the entire solar system at 7.5 picometer resolution, which is of course ridiculous. I chose 80 bits because it happens to be the combination of a 64-bit implicit prefix and a 16-bit explicit suffix; I’m not expecting that the range would ever be used. But then who knows. As a side effect, moving to a multiresolution representation will immediately shrink file sizes by one half.
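To make the prefix/suffix idea concrete, here is a rough Python sketch. It is an assumption-laden illustration, not the actual 3.0 file format: I am treating each coordinate as an 80-bit unsigned integer, assuming the 64-bit prefix is shared by nearby points (for example, implied by their position in the octree), and the function names are hypothetical.

```python
PREFIX_BITS = 64   # implicit part, assumed to be shared by nearby points
SUFFIX_BITS = 16   # explicit part, stored with every point

def split_coordinate(coord):
    """Split an 80-bit unsigned integer coordinate into (prefix, suffix)."""
    assert 0 <= coord < (1 << (PREFIX_BITS + SUFFIX_BITS))
    return coord >> SUFFIX_BITS, coord & ((1 << SUFFIX_BITS) - 1)

def join_coordinate(prefix, suffix):
    """Rebuild the full 80-bit coordinate from its two parts."""
    return (prefix << SUFFIX_BITS) | suffix

coord = (1 << 79) + 12345
prefix, suffix = split_coordinate(coord)
restored = join_coordinate(prefix, suffix)

# Range covered by 80 bits at 7.5 picometer steps, in meters and astronomical units
extent_m = (1 << 80) * 7.5e-12
extent_au = extent_m / 1.495978707e11

# Explicit per-point coordinate storage: three 32-bit floats vs. three 16-bit suffixes
bytes_float = 3 * 4
bytes_suffix = 3 * 2

print(prefix == 1 << 63, suffix, restored == coord)
print(round(extent_au, 1), "AU;", bytes_float, "->", bytes_suffix, "bytes per point")
```

The extent works out to roughly 60 astronomical units, about the diameter of Neptune’s orbit, and the explicit coordinate storage drops from 12 to 6 bytes per point, which is consistent with the halving mentioned above as far as coordinates go.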

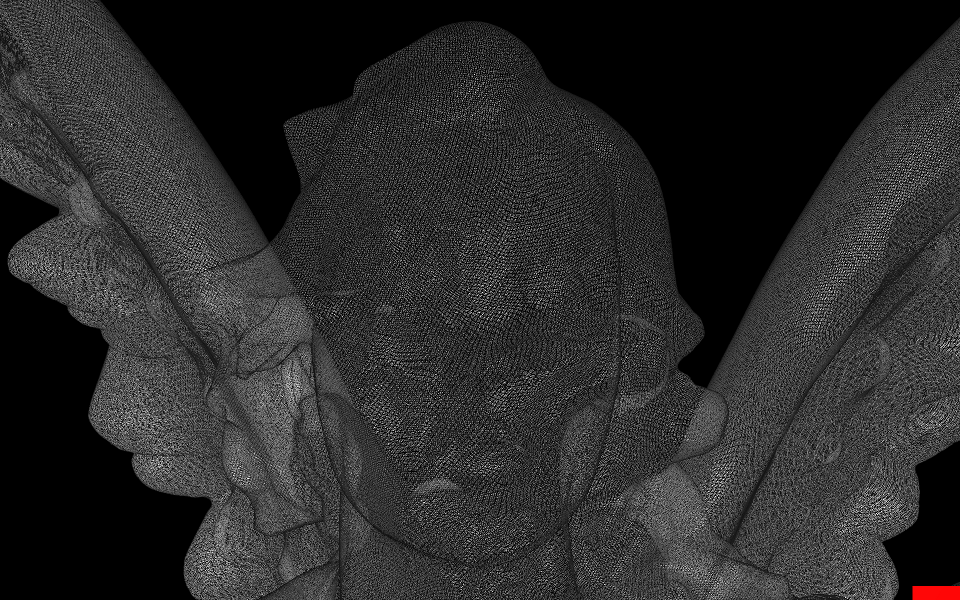

The second issue is pixel rendering, which becomes a problem when zooming into LiDAR data beyond the scale supported by the data’s density. In other words, when looking at a point cloud from far away, the individual pixels will fuse and form a continuous surface, but when looking close up, the solid surface will dissolve (see Figures 2 and 3). On the one hand, one probably shouldn’t look at data at zoom factors not supported by the data, but on the other hand, everyone always does. LiDAR Viewer will use a splat renderer that draws individual points as surface-aligned shaded disks instead of pixels. Because the disks’ radii are defined in model space, they will fuse no matter the zoom factor. Figures 2-5 show an example comparing LiDAR Viewer 2.x’s point rendering with a preview of LiDAR Viewer 3.0’s splat rendering.
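The difference between the two approaches can be put into a few lines of Python. This is only a back-of-the-envelope sketch under a simple pinhole camera assumption; the function names, the 1 mm point spacing, the splat radius, and the focal length are hypothetical and not taken from LiDAR Viewer:

```python
def screen_spacing(point_spacing, distance, focal_px):
    """Projected distance in pixels between neighboring points (pinhole camera)."""
    return focal_px * point_spacing / distance

def pixels_fuse(point_spacing, distance, focal_px, point_size_px=1.0):
    """Fixed-size screen points fuse only while neighbors project within one point size."""
    return screen_spacing(point_spacing, distance, focal_px) <= point_size_px

def splats_fuse(point_spacing, splat_radius):
    """Model-space disks fuse when neighboring disks touch; viewing distance cancels out."""
    return 2.0 * splat_radius >= point_spacing

spacing = 0.001   # hypothetical scan density: one point per millimeter
radius = 0.0006   # hypothetical splat radius in model space, in meters
focal = 1000.0    # hypothetical focal length in pixels

far_view = pixels_fuse(spacing, 2.0, focal)     # viewed from 2 m away
close_view = pixels_fuse(spacing, 0.2, focal)   # viewed from 20 cm away
splat_view = splats_fuse(spacing, radius)       # same answer at any distance

print(far_view, close_view, splat_view)
```

With these numbers the pixel-rendered surface holds together at 2 m and falls apart at 20 cm, while the splat test does not involve the viewing distance at all.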

Figure 2: A pixel-rendered view of a high-resolution 3D laser scan of a statue (“Lucy”). At this viewing distance, the individual pixels are close enough to fuse into a solid surface.

Figure 3: A pixel-rendered view of a high-resolution 3D laser scan of a statue (“Lucy”). At this viewing distance, individual pixels separate and the previously solid surface dissolves into a cloud of points, prohibiting perception of the 3D shape.

Figure 4: A splat-rendered view of a high-resolution 3D laser scan of a statue (“Lucy”). From the exact same viewing position as in Figure 3, the model-space scaled splats still fuse to create a solid surface. Because the zoom factor is beyond the level supported by the scan’s density, individual splats can be discerned.

Figure 5: Splat-rendered view of a high-resolution 3D laser scan of a statue (“Lucy”). As splat sizes scale with zoom factor, they still fuse into a solid surface even at this high magnification. Individual 3D points can be distinguished clearly.

The current splat renderer is only an experimental implementation and a work in progress, but it is good enough that I felt comfortable showing it off in the video, sort of as a “coming attractions” teaser. At least the scientist users of LiDAR Viewer are very excited about it.

Mindblowing. I know exactly what you mean by “messing with people” in the first minutes. When you flipped the view on its ear, my jaw dropped.

I googled lidar after the video, and came across a “lidar news”. I emailed the editor your video. There was nothing like it there.

Will you make a video showing new lidar viewer in the cave?

I’ll get around to it at some point, but right now I have a lot of other stuff on the plate.

And thanks for falling right into my trap. 🙂 That’s the reaction I was going for.

The splat rendering is extremely exciting for a non-scientist too. Especially when you’re dealing with aerial and other not so dense scans. You are a hero and I would trade my mother for that San Francisco scan. What do you say?

No need to trade in your mum; the San Francisco scan is public domain. You can get it through the USGS CLICK interface by searching for all point cloud data covering the larger San Francisco area. For more information, go to the ARRA project page I’m linking in the post.

Oh good, cause one day I just might have regretted it. What kind of procedure/software do you use to project geo TIFF colours onto the lidar data?

If the LiDAR data and color imagery are in the same coordinate system/map projection, you just run LidarColorMapper <LiDAR octree name> Color <image name 1> … <image name n>. If your images are GeoTIFFs, you need to extract the world information from the images using listgeo first. Each .tiff file needs an accompanying .tfw file in the same directory.
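Before running the color mapper on a large batch of images, it can save time to check that every image really has its world file. A small Python sketch (the function name `missing_world_files` and the tile names are made up; it only checks for files with the same base name and a .tfw extension, nothing more):

```python
import tempfile
from pathlib import Path

def missing_world_files(image_paths):
    """Return the images that have no .tfw file next to them."""
    return [p for p in map(Path, image_paths)
            if not p.with_suffix(".tfw").exists()]

# Demonstration with throwaway files: tile_a has a world file, tile_b does not.
with tempfile.TemporaryDirectory() as tmp:
    tile_a = Path(tmp, "tile_a.tif")
    tile_b = Path(tmp, "tile_b.tif")
    tile_a.touch()
    tile_a.with_suffix(".tfw").touch()
    tile_b.touch()
    missing = [p.name for p in missing_world_files([tile_a, tile_b])]

print(missing)
```

Any image that shows up in the list still needs its world information extracted.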

Great, will give it a go.

Not sure if this is the place to post, but thought I might share this.

I just used the selection tool and export selected points in Lidar viewer to cut away some targets and noise out of a scan. Using the oculus and hydra this was a very nice experience, a bit like gardening work pulling up weeds.

I was however curious to know if there’s a more intelligent way of doing it. What I did was this:

-open the scan and pick the 6dof locator

-select the entire scan with one click, zoomed out

-go inside the environment and deselect the undesired points

-export selected points as .xyzuvwRGB

-convert this using LAStools txt2las back to las, exporting only xyzRGB values

I guess this is a bit of a workaround. Perhaps it’d be better to just select and export unwanted points and subtract these from the original dataset? Still this worked very nicely.

Typical workflow:

– Select undesired points in LiDAR Viewer.

– Export selected points (as xyz, xyzrgb, or xyzuvwrgb, doesn’t matter)

– Remove undesired points via LidarSubtractor.
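Conceptually, the last step is a set difference. The Python sketch below only illustrates that idea; it is not how LidarSubtractor is implemented, the function name is hypothetical, and matching points by exact (x, y, z) equality is an assumption made for the example:

```python
def subtract_points(original, unwanted):
    """Keep every original point whose (x, y, z) is not among the unwanted points.

    Points are tuples starting with x, y, z; any extra columns (such as
    normals or colors) are ignored when matching.
    """
    unwanted_xyz = {tuple(p[:3]) for p in unwanted}
    return [p for p in original if tuple(p[:3]) not in unwanted_xyz]

scan = [
    (0.0, 0.0, 1.0, 200, 200, 200),
    (0.5, 0.0, 1.2, 180, 190, 200),
    (9.0, 9.0, 9.0, 255, 0, 0),     # a stray target to be removed
]
selected = [(9.0, 9.0, 9.0)]        # exported selection, xyz only

cleaned = subtract_points(scan, selected)
print(len(scan), "->", len(cleaned))
```

The points that survive are passed through untouched, so their coordinates and colors are unchanged.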

Ok, I’m realizing I should RTFM before asking more questions 🙂

I forget right now if I’m linking to it from within the software package, but there’s a rather detailed LiDAR Viewer manual at http://keckcaves.org/software/lidarviewer

Yes, I have it since earlier. Thanks!

Yes, the LidarSubtractor is really the way to go. Great tool. And doing this multiple times doesn’t degrade things, right?

That’s correct, as long as you don’t specify a custom point offset on the command line. Without a custom offset, the original point offset is passed through transparently, and the remaining points will be binary identical to the original points. If you do specify an offset, there might be some degradation.

I understand. Damn you’re up late. You’ve got mail!

I am looking forward to version 3! Those new features are really very interesting!

Btw, am trying to use the FPSNavigationTool in LidarViewer. It seems that it doesn’t know about the coordinate system of the data (ok, I never specified it, so it can’t really know), it just makes me feel like a 40 meter tall giant. Is there a way to fix this?

And finally, is it possible that Lidar files get corrupted over time? I’m having issues with a large dataset that worked fine last week, but now it is constantly crashing LidarViewer. It’s not the first time this is happening, I fixed it earlier for a smaller dataset by redoing the preprocessing stage. I don’t really understand it because LidarViewer does not seem to alter the Lidar files.

To specify scale, you create a text file called “Unit” inside the base LiDAR directory (the one that contains the Points and Index files). Into that, you put the unit name and scale factor. For example, if your measurements are in meters, you would put in “1.0 meter”. If your measurements are in centimeters, you could either put in “1.0 centimeter”, in which case LiDAR Viewer would report measurements in centimeters, or “0.01 meter”, in which case it would report meters. Afterwards you can use the scale bar to select real-world magnification/minification scales, for example go to 1:1 to explore a data set by walking.
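If you would rather script this, here is a small Python sketch that writes and reads such a file. It follows the “scale factor, then unit name” layout of the examples above; the function names are hypothetical, and the trailing newline is my assumption rather than a documented requirement:

```python
import tempfile
from pathlib import Path

def write_unit_file(lidar_dir, factor, unit_name):
    """Create the 'Unit' text file inside a LiDAR base directory."""
    unit_path = Path(lidar_dir) / "Unit"
    unit_path.write_text(f"{factor} {unit_name}\n")
    return unit_path

def read_unit_file(lidar_dir):
    """Return (scale factor, unit name) from an existing 'Unit' file."""
    factor, unit_name = (Path(lidar_dir) / "Unit").read_text().split()
    return float(factor), unit_name

# Demonstration in a throwaway directory standing in for the LiDAR base directory.
with tempfile.TemporaryDirectory() as lidar_dir:
    write_unit_file(lidar_dir, 0.01, "meter")   # data measured in centimeters
    contents = (Path(lidar_dir) / "Unit").read_text()
    factor, unit_name = read_unit_file(lidar_dir)

# A distance of 250 data units would then be reported as 2.5 meters.
reported = 250 * factor
print(repr(contents), reported, unit_name)
```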

Sorry to hear about your file problems. That should not happen. LidarViewer does indeed not change its input files. Check the modification time stamps on those files to see if they were modified by something else, maybe a virus checker or a backup process. Just a wild guess.

What type of LiDAR data is it? Terrestrial or airborne? There’s a known problem with terrestrial data; some scanners create a bogus (0, 0, 0) point for invalid point returns, and then LidarPreprocessor sometimes encounters thousands of those points and creates a bad LiDAR file.

Thanks for your reply.

It is airborne LiDAR in a national coordinate system with typically 8 digit coordinates.

Timestamps on the files are unchanged, I also checked the md5 sum with an earlier copy, and it is identical. So it can’t really be the file after all. It is hard to reproduce exactly when it crashes, it seems random. I get either one of these errors:

libc++abi.dylib: terminating with uncaught exception of type IO::File::Error: IO::StandardFile: Fatal error 22 while reading from file

Abort trap: 6

or

Segmentation fault: 11

that’s using a homebrew compiled version on Mavericks.

I had regular crashes for a while. Turned out I had a too long USB extension cable for the hydra, zero crashes since I got rid of it. This is probably not what’s happening to you but perhaps this can be of help to someone else. It was a 5m USB extension with a signal amplifier.

I’ve just run into the same problem myself — Vrui’s Hydra device driver crashed out at random times. In my case it was because I had the Hydra plugged into a USB port in my monitor, and apparently the Hydra doesn’t like being connected through a USB hub, or over an extra-long cable.

Error 22 means “invalid argument”, which means that there’s something wrong with LiDAR Viewer. And because it’s an uncaught exception, it probably happens in the background thread that loads data from disk into memory. This is not good; it will be hard to pin-point the bug and fix it. What are the precise versions of Vrui and LiDAR Viewer that you installed?
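For anyone who wants to look up such error numbers themselves, Python’s standard library exposes the operating system’s table. A quick sketch (the message text comes from the OS, so its exact wording can differ between platforms):

```python
import errno
import os

code = 22
name = errno.errorcode.get(code, "unknown")   # symbolic name of the error number
message = os.strerror(code)                   # human-readable text from the OS

print(code, name, message)
```

On Linux and Mac OS X, number 22 maps to EINVAL.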

I’m using the latest versions from github:

Vrui 3.1-002

LidarViewer 2.12

Oliver, were you using something like 5m or less when this happened? I was gonna get a 2-3m cable but would be nice to know if I can expect the same thing.

Re-read your post, through the monitor you say… I’m gonna give 2-3m a go just to make sure, I feel too tied to the computer as is.

Oliver, have you yet run LiDAR Viewer using the Rift DK2?

If not, do you plan on adding DK2 functionality to LV and Vrui applications in general in the foreseeable future?

Best regards,

Caspar

does this viewer support windows?

Unfortunately, it does not.

Really fascinating! I wanted to try it on my machine but unfortunately not a Mac user…do you think I can run it on Ubuntu? Some tweaks would do it or will it be a major haul?

The software is developed on Linux, and that is its primary running environment.

Hello,

While playing with this software, I get an error when trying to map TIFF color images onto a .lidar octree.

I downloaded the Gtiff’s with QGIS from source: tileMatrixSet=nltilingschema&crs=EPSG:28992&layers=luchtfoto&styles=&format=image/jpeg&url=http://geodata1.nationaalgeoregister.nl/luchtfoto/wmts/1.0.0/WMTSCapabilities.xml

Then Layers, Save As, Rendered Image (also tried the RAW format but they both give the same error) and made the .tfw files with listgeo.

:~$ LidarColorMapper /home/marco/Public/LIDAR/Alkmaar2011_AHN2.lidar Color /home/marco/Public/GTiff/1.tif

terminate called after throwing an instance of ‘std::runtime_error’

what(): Images::getImageFileSize: unknown extension in image file name “/home/marco/Public/GTiff/1.tif”

Aborted (core dumped)

is there something I am doing wrong?

Found that my system did not have libTiff installed. Hence it was missing from the compiled program. yeah,… that’ll be what I did wrong.