With apologies to Edsger W. Dijkstra (and pretty much everyone else).

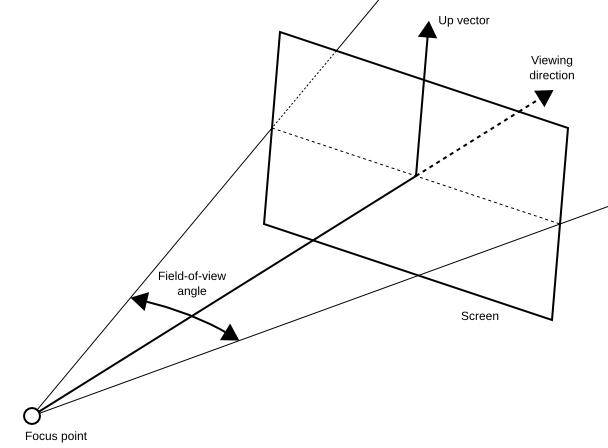

So what’s wrong with the canonical 3D graphics camera model? To recap, in this model a camera is defined by a focus point (the “eye”), a viewing direction, an “up” vector, a screen aspect ratio, and a field-of-view angle (“fov”) (see Figure 1). Throw in a near- and far-plane distance, and together these parameters uniquely define a viewing frustum in model space, and hence the modelview and projection matrices required to render a view of a 3D scene. Nothing wrong with that, per se.

Figure 1: The standard 3D graphics camera model, defined by a focus point position, viewing direction, “up” vector, and screen aspect ratio (ratio of screen width to screen height, not shown in diagram).
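To make the recap concrete, here is a minimal sketch of how the standard camera parameters turn into a camera basis and a viewing frustum. The function names are made up for illustration, and I am assuming that the field-of-view angle is measured vertically; engines differ on that convention.

```python
import math

def normalize(v):
    """Scale a 3-vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def camera_basis(direction, up):
    """Orthonormal (right, true_up, forward) axes from viewing direction and "up" vector."""
    forward = normalize(direction)
    right = normalize(cross(forward, up))
    true_up = cross(right, forward)
    return right, true_up, forward

def symmetric_frustum(fov_y_deg, aspect, near, far):
    """Frustum extents (left, right, bottom, top, near, far) on the near plane.

    Assumes fov_y_deg is the full vertical field of view in degrees and
    aspect is screen width divided by screen height.
    """
    top = near * math.tan(math.radians(fov_y_deg) / 2.0)
    right = top * aspect
    return (-right, right, -top, top, near, far)
```

Note that the frustum produced this way is always symmetric around the viewing direction: left and right, and bottom and top, only ever differ in sign.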

The problem arises when this same camera model is applied to (semi-) immersive environments, such as when one wants to adapt an existing graphics package or game engine to, say, a 3D TV or a head-mounted display with only minimal changes. There are two main problems with that: for one, the standard camera model does not support proper stereo image generation, leading to 3D vision problems, eye strain, and discomfort (but that’s a topic for another post).

The problem I want to discuss here is the implicit link between the camera model and viewpoint navigation. In this context, viewpoint navigation is the mechanism by which a 3D graphics application represents the viewer moving through the virtual 3D environment. For example, in a typical first-person video game, the player will be represented in the game world as some kind of avatar, and the camera model will be attached to that avatar’s head. The game engine will provide some mechanism for the player to directly control the position and viewing direction of the avatar, and therefore the camera, in the virtual world (this could be the just-as-canonical WASD+mouse navigation metaphor, or something else). But no matter the details, the bottom line is that the game engine is always in complete control of the player avatar’s — and the camera’s — position and orientation in the game world.

But in immersive display environments, where the user’s head is tracked inside some tracking volume, this is no longer true. In such environments, there are two ways to change the camera position: using the normal navigation metaphor, or simply physically moving inside the tracking volume. Obviously, the game engine has no way to control the latter (short of tethers or an electric shock collar). The problem is that the standard camera model, or rather its implementation in common graphics engines, does not account for this separation.

This is not really a technical problem, as there are ways to manipulate the camera model to yield the correct end result, but it’s a thinking problem. Having to think inside this ill-fitting camera model causes real headaches for developers, who will be running into lots of detail problems and edge cases when trying to implement correct camera behavior in immersive environments.

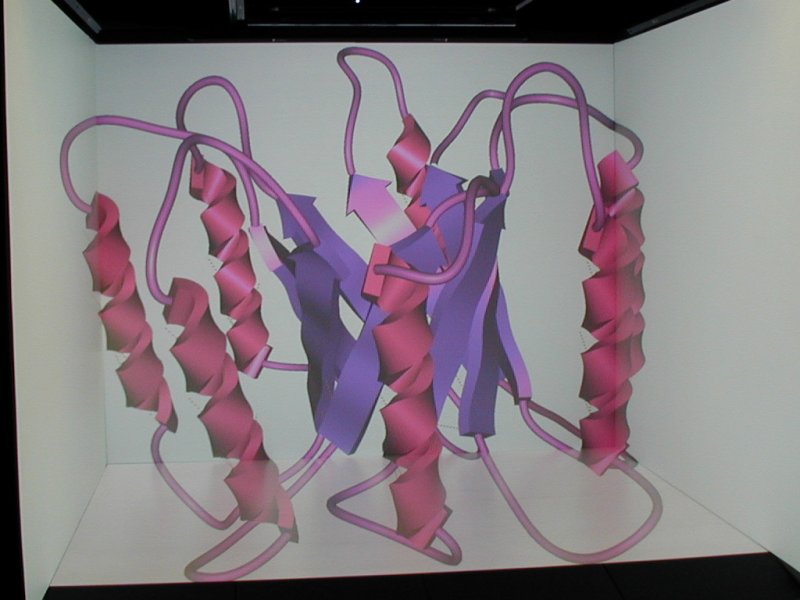

My preferred approach is to use an entirely different way to think about navigation in virtual worlds, and the camera model that directly follows from it. In this paradigm, a display system is not represented by a virtual camera, but by a physical display environment consisting of a collection of screens and viewers. For example, a typical desktop system would consist of a single screen (the actual monitor), and a single viewer (the user) sitting in a fixed position in front of it (fixed because typical desktop systems have no way to detect the viewer’s actual position). At the other extreme, a CAVE environment would consist of four to six large screens in fixed positions forming a cube, and a viewer whose position and orientation are measured in real time by a head tracking system (see Figure 2). The beauty is that this simple environment model is extremely flexible; it can support any number of screens in arbitrary positions, including moving screens, and any number of fixed or tracked viewers. It can support non-rectangular screens without problems (but that’s a topic for another post), and non-flat screens can be tessellated to a desired precision. So far, I have not found a single concrete display environment that cannot be described by this model, or at least approximated to arbitrary precision.

Figure 2: Photo of a CAVE environment consisting of four screens (three walls and one floor) and one viewer (in this case a camera on a tripod). Note how the image of the 3D protein model in the CAVE spans all four screens, but still appears seamless to the camera.

In more detail, a screen is defined by its position, orientation, width, and height (let’s ignore non-rectangular screens for now). A viewer, on the other hand, is solely defined by the position of its two eyes (two eyes instead of one to support proper stereo; sorry, spiders and Martians need not apply). All screens and viewers forming one display environment are defined in the same coordinate system, called physical space because it refers to real-world entities, namely display screens and users.
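As a rough sketch of what such an environment description could look like as data, consider the following. The class and field names are my own invention for illustration; in particular, storing a screen's orientation as two unit axes anchored at its lower-left corner is just one possible choice, as is the z-up coordinate system with units in meters.

```python
from dataclasses import dataclass

@dataclass
class Screen:
    """A rectangular screen in physical space (hypothetical representation)."""
    origin: tuple   # position of the lower-left corner
    x_axis: tuple   # unit vector along the screen's width
    y_axis: tuple   # unit vector along the screen's height
    width: float
    height: float

    def point(self, u, v):
        """Physical-space position at fraction (u, v) across the screen."""
        return tuple(o + u * self.width * x + v * self.height * y
                     for o, x, y in zip(self.origin, self.x_axis, self.y_axis))

@dataclass
class Viewer:
    """A viewer is nothing but two eye positions in physical space."""
    left_eye: tuple
    right_eye: tuple

# A desktop system: one upright monitor and one fixed viewer in front of it.
monitor = Screen(origin=(-0.3, 0.0, 0.0), x_axis=(1.0, 0.0, 0.0),
                 y_axis=(0.0, 0.0, 1.0), width=0.6, height=0.34)
user = Viewer(left_eye=(-0.03, -0.6, 0.17), right_eye=(0.03, -0.6, 0.17))
```

A CAVE would simply be a list of four to six such screens, plus a viewer whose eye positions are updated from the head tracker every frame.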

How does this environment model affect navigation? Instead of moving a virtual camera through virtual space, navigation now moves an entire environment, i.e., a collection of any number of screens and viewers, through the virtual space, still under complete program control (fans of Doctor Who are free to call it the “TARDIS model”). Additionally, any viewers can freely move through the environment, at least if they’re head-tracked, and this part is not under program control. From a mathematical point of view, this means that viewers can freely walk through physical space, whereas physical space as a whole is mapped into the virtual world by the graphics engine, effected by the so-called navigation transformation.
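The separation between the two kinds of motion can be sketched as follows. This is only an illustration under simplifying assumptions: the `Navigation` class is hypothetical, and I restrict the navigation transformation to a translation, a rotation about a vertical z axis, and a uniform scale.

```python
import math
from dataclasses import dataclass

@dataclass
class Navigation:
    """Maps physical space into the virtual world (program-controlled)."""
    translation: tuple = (0.0, 0.0, 0.0)
    yaw_deg: float = 0.0   # rotation about the vertical (z) axis
    scale: float = 1.0

    def to_virtual(self, p):
        """Transform a physical-space point into virtual-world coordinates."""
        a = math.radians(self.yaw_deg)
        c, s = math.cos(a), math.sin(a)
        x = self.scale * (c * p[0] - s * p[1])
        y = self.scale * (s * p[0] + c * p[1])
        z = self.scale * p[2]
        return (x + self.translation[0],
                y + self.translation[1],
                z + self.translation[2])

# The application steers the whole environment ...
nav = Navigation(translation=(10.0, 0.0, 0.0), yaw_deg=90.0)
# ... while the head tracker reports where the viewer stands inside it.
head_physical = (1.0, 0.0, 1.7)
head_virtual = nav.to_virtual(head_physical)   # approximately (10, 1, 1.7)
```

The application only ever writes to `nav`, and the tracker only ever writes to `head_physical`; the viewer's position in the virtual world falls out of combining the two.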

At first glance, it seems that this model does nothing but add another intermediate coordinate system and is therefore superfluous (and mathematically speaking, that’s true), but in my experience, this model makes it a lot more straightforward to think about navigation and user motion in immersive environments, and therefore makes it easier to develop novel and correct navigation metaphors that work in all circumstances. The fact that it treats all possible display environments from desktops to HMDs and CAVEs in a unified way is just a welcome bonus.

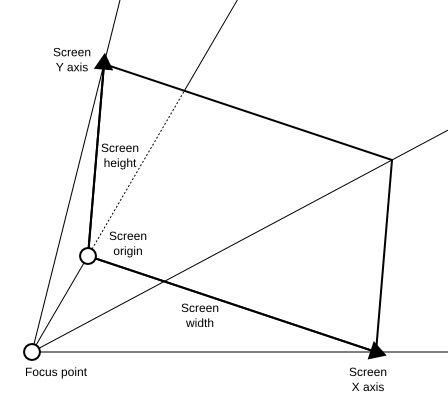

The really neat effect of this environment model is that it directly implies a camera model as well (see Figure 3). Using the standard model, it is quite a tricky prospect to maintain the collection of virtual cameras that are required to render to a multi-screen environment, and ensure that they correspond to desired viewpoint changes in the virtual world, and the viewer’s motion inside the display environment. Using the viewer/screen model, there is no extra camera model to maintain. It turns out that a viewing frustum is also uniquely identified by a combination of a flat (rectangular) screen, a focus point position, and near- and far-plane distances. However, the first two components are directly provided by the environment model, and the latter two parameters can be chosen more or less arbitrarily. As a result, the screen/viewer model has no free parameters to define a viewing frustum besides the two plane distances, and the resulting viewing frusta will always lead to correct projections, which are also automatically seamless across multiple screens (but that’s a topic for another post).

Figure 3: The screen/viewer camera model defined by the position, orientation, and size of a screen, and the position of a focus point, in some 3D coordinate system. Apart from the near- and far-plane distances, every parameter of the model can be measured directly via calibration and head tracking.
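Here is a minimal sketch of how a frustum can be derived from one screen and one eye position. The function name and the screen representation (lower-left corner plus two unit axes) are assumptions of mine; the returned six values are in the form that a `glFrustum`-style projection expects, expressed in a frame that is centered on the eye and aligned with the screen's axes.

```python
def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def screen_frustum(origin, x_axis, y_axis, width, height, eye, near, far):
    """Frustum extents (left, right, bottom, top, near, far) for one eye.

    origin is the screen's lower-left corner; x_axis and y_axis are unit
    vectors along its width and height. All points share one coordinate system.
    """
    normal = cross(x_axis, y_axis)   # points from the screen toward the viewer
    rel = sub(eye, origin)
    ex, ey, ez = dot(rel, x_axis), dot(rel, y_axis), dot(rel, normal)
    if ez <= 0.0:
        raise ValueError("eye must be in front of the screen")
    k = near / ez                    # scale screen-plane extents to the near plane
    return (-ex * k, (width - ex) * k, -ey * k, (height - ey) * k, near, far)

# A 2 x 1 screen in the z = 0 plane, viewed from one unit away.
screen = dict(origin=(-1.0, -0.5, 0.0), x_axis=(1.0, 0.0, 0.0),
              y_axis=(0.0, 1.0, 0.0), width=2.0, height=1.0)
centered = screen_frustum(eye=(0.0, 0.0, 1.0), near=0.1, far=100.0, **screen)
shifted = screen_frustum(eye=(0.5, 0.0, 1.0), near=0.1, far=100.0, **screen)
```

With the eye centered in front of the screen, the result is the familiar symmetric frustum; as soon as the eye moves sideways, the frustum becomes skewed, with no field-of-view angle or aspect ratio to adjust by hand. For stereo, the same function would be called once per eye.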

Looking at it mathematically again, one screen/viewer pair uniquely defines a viewing frustum in the physical coordinate space in which the screen and viewer are defined, and hence a modelview and a projection matrix. Now, the mapping from physical space to virtual world space is typically also expressed as a matrix, meaning that this model really just adds yet another modelview matrix. And since the product of two matrices is a matrix, it boils down to the same projection pipeline as the standard model. As I mentioned earlier, the model does not introduce any new capabilities; it just makes it easier to think about the existing ones.
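A toy example of that collapse, using plain 4×4 matrices and pure translations to keep the numbers readable. The helper names and the specific offsets are made up for illustration; a real navigation transformation would usually include rotation and scaling as well.

```python
def translation(t):
    """4x4 matrix translating by the 3-vector t."""
    return [[1.0, 0.0, 0.0, t[0]],
            [0.0, 1.0, 0.0, t[1]],
            [0.0, 0.0, 1.0, t[2]],
            [0.0, 0.0, 0.0, 1.0]]

def mat_mul(a, b):
    """Product of two 4x4 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transform(m, p):
    """Apply a 4x4 affine matrix to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))

# Inverse navigation: virtual world -> physical space.
virtual_to_physical = translation((-10.0, 0.0, 0.0))
# Per-eye view matrix: physical space -> eye-centered space, eye at (0.5, 0, 1).
physical_to_eye = translation((-0.5, 0.0, -1.0))

# One combined modelview matrix, exactly as in the standard pipeline.
modelview = mat_mul(physical_to_eye, virtual_to_physical)

point_virtual = (10.5, 0.0, 1.0)
two_steps = transform(physical_to_eye, transform(virtual_to_physical, point_virtual))
one_step = transform(modelview, point_virtual)
```

Both routes put the point at the eye-space origin, so the renderer never needs to know that an intermediate physical space existed.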

So the bottom line is that the viewer/screen model makes it simpler to reason about program-controlled navigation, completely removes the need for an explicit camera model and the extra work required to keep it consistent with the display environment, and — if the display environment was measured properly — automatically leads to distortion-free and seamless images even across multiple screens, and to always correct and eye strain-free stereo displays.

Although this model stems from the immersive environment world, applying it in the desktop realm has immediate practical benefits. For one, it supports proper stereo without extra work from the application developer; additionally, it supports flexible multi-display configurations where users can put their displays however they like, and get correct and seamless images without special application support. It even provides correct desktop head-tracking for free. Sounds like a win-win to me.