I know I’m several years late to the party talking about the recent 3D movie renaissance, but bear with me. I want to talk not about 3D movies, but about their influence on the VR field, good and bad.

First, the good. It’s impossible to deny the huge impact 3D movies have had on VR, simply by commoditizing 3D display hardware. I’m going to go out on a limb and say that without Avatar, you wouldn’t be able to go into an electronics store and pick up a 70″ 3D TV for $2100. And without that crucial component, we would not be able to build low-cost fully-immersive 3D display systems for $7000. And we wouldn’t have neat toys like Sony’s HMZ-T1 or the upcoming Oculus Rift either. Although the latter is designed for gaming from the ground up, I don’t think the Kickstarter would have taken off if 3D movies weren’t a thing right now.

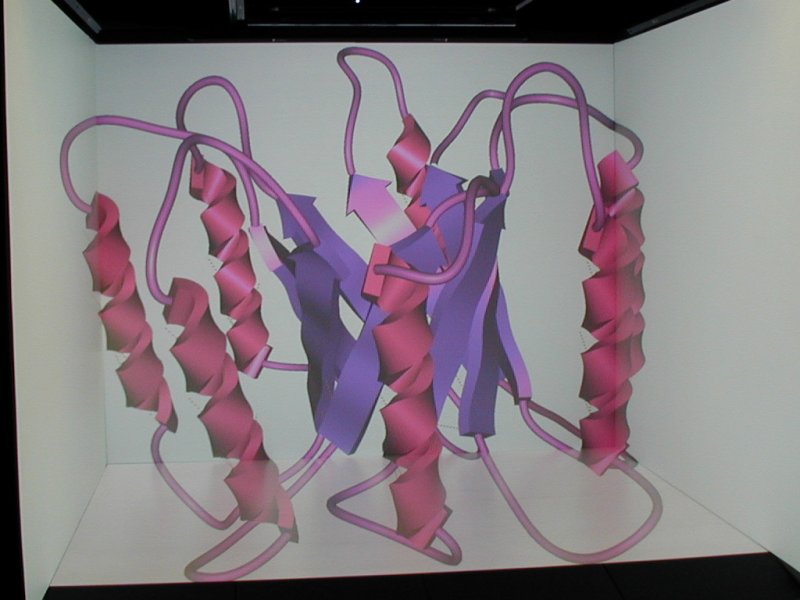

And the effect goes beyond simply making real VR cheaper: real VR is now affordable for a much larger segment of people. $7000 is still a bit much to spend on home entertainment, but it’s within the equipment budget of many scientists. And those are my target audience. We are not selling low-cost VR systems per se, but we’re giving away the designs to build them, and the software to run them. And we’ve “sold” dozens of them, primarily to scientists who work with 3D data that is too complex to meaningfully analyze with desktop 3D visualization, but who don’t have the budget to build “professional” systems. Now, dozens is absolutely zilch in mainstream terms, but for our niche it’s a big deal, and it’s just taking off. We’re even getting them into high schools now. And we’re not the only ones “selling” them.

The end result is that many more people are getting exposed to real immersive 3D display environments, and to the practical benefits that they offer for their daily work. That will benefit us all.

But there are some downsides to the 3D movie renaissance as well, and while those can be addressed, we first need to be aware of them. For one, while 3D movies are definitely in the public consciousness, I have found that hardly anybody is completely bonkers about them. Roger Ebert is an extreme example (I think Mr. Ebert is wrong in claiming that 3D does not work in principle, whereas I think 3D does not work in many concrete implementations seen in theaters right now, but that’s a topic for another post), but the majority of people I speak to are decidedly “meh” about 3D movies. They say “3D doesn’t work for me” or “I get headaches” or “I get dizzy,” etc.

Now that is a problem for VR as a whole, because there is no distinction in the public mind between 3D movies and real immersive 3D graphics. Meaning that people think that VR doesn’t work. But it does. I just did a quick guesstimate, and in the seven years we’ve had our CAVE, I’ve probably brought 1000 people through there, from every segment of the population. It has worked for every single one of them. How do I know? Everyone who enters the CAVE goes through the training course — a beach ball-sized globe hanging in the middle of the CAVE, shown in this video:

(Oh boy, just looking at this six-year-old video, the user interface in Vrui has improved so much. It’s almost embarrassing.)

I ask every single person to step in, touch the globe, and then indicate how big it is. And they all do the same thing: use both hands to make a cradling gesture around a virtual object that’s not actually there. If the 3D effect didn’t work for them, they couldn’t do it. QED. Before you ask: I’m aware that a significant percentage of the general population has no stereo vision at all, but immersive 3D graphics works for them as well because it provides motion parallax. I know because one of my best friends has monocular vision, and it works for him. He even co-stars with me in a silly video.
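For the curious, here is a minimal sketch of why head tracking provides motion parallax even to a single eye. It is not how Vrui does it; the function name, the coordinate setup (screen in the plane z = 0, viewer at positive z, units in meters), and the numbers are all assumptions made up for illustration. The idea is simply that the image of a virtual point is drawn where the line from the tracked eye to the point crosses the screen, so when the eye moves, the image moves with it and the point appears to stay put in space.

```python
def project_to_screen(eye, point):
    """Intersect the ray from the eye through a virtual point with the
    screen plane z = 0 (hypothetical setup: viewer at z > 0, meters)."""
    ex, ey, ez = eye
    px, py, pz = point
    # Ray parameter at which the eye-to-point line reaches z = 0.
    t = ez / (ez - pz)
    return (ex + t * (px - ex), ey + t * (py - ey))


# A virtual point floating 1 m in front of the screen, and one 1 m behind it.
in_front = (0.0, 0.0, 1.0)
behind = (0.0, 0.0, -1.0)

# The viewer stands 2 m from the screen, then steps 0.5 m to the right.
centered_eye = (0.0, 0.0, 2.0)
shifted_eye = (0.5, 0.0, 2.0)

for name, p in (("in front", in_front), ("behind", behind)):
    print(name, project_to_screen(centered_eye, p), project_to_screen(shifted_eye, p))
```

When the viewer steps to the right, the image of the point in front of the screen slides left and the image of the point behind the screen slides right, which is exactly the depth cue a real object at those positions would give. A 3D movie cannot do this, because it renders every frame for one fixed viewpoint.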

The upshot is that the conversation goes differently now. It used to be that I would talk to “VR virgins” about what I do, and they had no preconception of 3D, were curious, tried the CAVE, and it worked for them and they liked it. These days, I talk about the CAVE, they immediately say that 3D doesn’t work for them, and they’re very reluctant to try the CAVE. I twist their arms to get them in there nonetheless, and it works for them, and they like it. This is not a problem if I have someone there in person, but it’s a problem when I can’t just stuff the person I’m describing VR to into a VR system, as in, say, when I’m writing a proposal to beg for money. And that’s bad news, big time (but it’s a topic for another post).

There is another interesting change in behavior: let’s say I have a group of people coming in for a tour (yeah, we sometimes get strong-armed into doing those). It used to be that they would come into the CAVE room and stand around, not sure what to expect or what to do. These days, they immediately sit down at the conference table, grab a pair of 3D glasses if they find one, and get ready to be entertained. I then have to tell them that no, that’s not how it works, would they please put the non-head-tracked glasses down until later, get up, and get ready to get into the CAVE itself and see it properly? It’s pretty funny, actually.

The other downside is that the use of the word “3D” for movies has watered down that term even more. Now there are:

- “3D graphics” for projected 2D images of 3D scenes, i.e., virtual and real photos or movies; in other words, basically everything anybody has ever done. The end results of 3D graphics are decidedly 2D, but the term was coined to distinguish it from 2D graphics, meaning pictures of scenes playing in flatland.

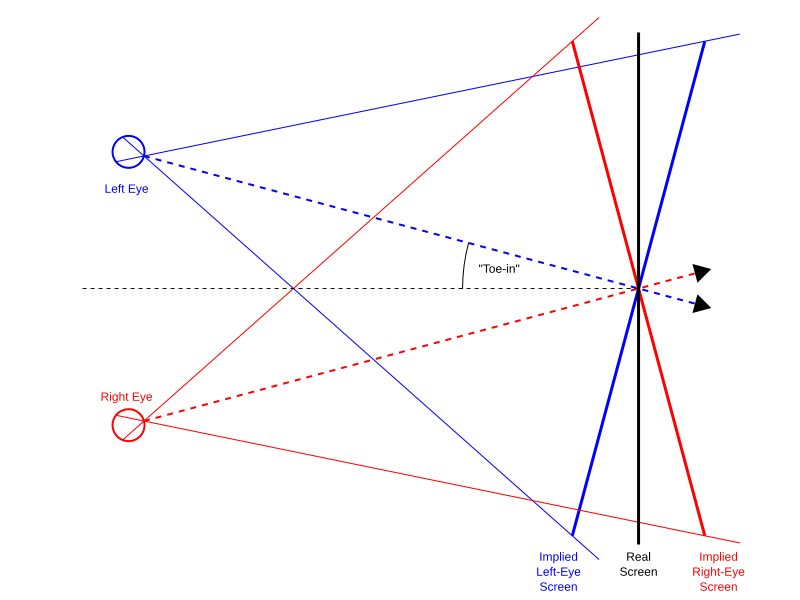

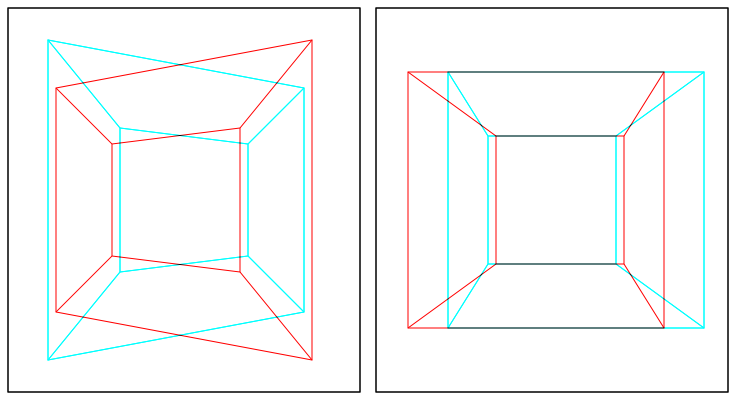

- “3D movies” meaning stereoscopic movies shown on stereoscopic displays. In my opinion, a better term would be “2D plus depth” movies (or they could just go with “stereo movies,” you know), because most directors at this time treat the stereoscopic dimension as a separate entity from the other two dimensions, as something that can be tweaked and played with. And I think that’s one cause of the problem, because they’re messing with people’s brains. And don’t even get me started on “upconverted” 3D movies, oh my.

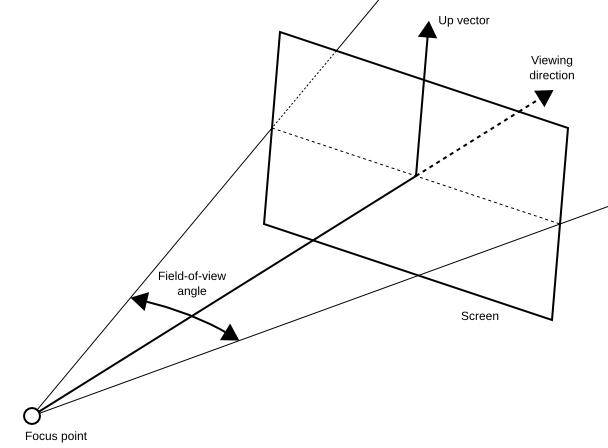

- “3D displays” meaning stereoscopic displays, those used to show 3D movies. They are a necessary component to create 3D images, but not 3D by themselves.

- “3D displays” meaning immersive 3D displays like CAVEs. The distinguishing feature of these is that they show three-dimensional scenes and objects in a way similar enough to how we would perceive the same scenes and objects if they were real that our brains accept the illusion, and allow us to work with them as if they were real. This last bit is really the main point. The difference between this and “3D movies” cannot be overstated. I would rather call these displays “holographic,” but then I get flak from the “holograms are only holograms if they’re based on lasers and interference” crowd. They are technically correct (and isn’t that the best form of correctness?) because that’s how the word was defined, but the objection misses the point, because what these displays show looks and feels exactly like holograms: free-standing, solid-appearing, touchable virtual objects. After all, “hologram,” loosely translated from Greek, means “shows the whole thing.” And that’s exactly what immersive 3D displays do.

And I probably missed a few. So there’s clearly a confusion of terms, and we need to find ways to distinguish what real immersive 3D graphics does from what 3D movies do, and we need to do it in ways that don’t create unrealistic expectations, either. Don’t reference “The Matrix,” try not to mention holodecks (but it’s so tempting!), don’t say it’s an indistinguishable replication of reality (in other words, don’t say “virtual reality,” ha!). Ideally, don’t say anything. Show them.

In summary, “3D” is now widely embedded in the public consciousness, and the VR community has to deal with it. There are obvious and huge benefits, but there are some downsides as well, and those have to be addressed. They can be addressed (fortunately, immersive 3D graphics are not the same as 3D movies), but it takes care and effort. Time to get started.