I just moved all my Kinects back to my lab after my foray into experimental mixed-reality theater a week ago, and just rebuilt my 3D video capture space / tele-presence site consisting of an Oculus Rift head-mounted display and three Kinects. Now that I have a new extrinsic calibration procedure to align multiple Kinects to each other (more on that soon), and managed to finally get a really nice alignment, I figured it was time to record a short video showing what multi-camera 3D video looks like using current-generation technology (no, I don’t have any Kinects Mark II yet). See Figure 1 for a still from the video, and the whole thing after the jump.

Tag Archives: Head tracking

Gaze-directed Text Entry in VR Using Quikwrite

Text entry in virtual environments is one of those old problems that never seem to get solved. The core issue, of course, is that users in VR either don’t have keyboards (because they are in a CAVE, say), or can’t effectively use the keyboard they do have (because they are wearing an HMD that obstructs their vision). To the latter point: I consider myself a decent touch typist (my main keyboard doesn’t even have key labels), but the moment I put on an HMD, that goes out the window. There’s an interesting research question right there — do typists need to see their keyboards in their peripheral vision to use them, even when they never look at them directly? — but that’s a topic for another post.

Until speech recognition becomes powerful and reliable enough to use as an exclusive method (and even then, imagining having to dictate “for(int i=0;i<numEntries&&entries[i].key!=searchKey;++i)” already gives me a headache), and until brain/computer interfaces are developed and we plug our computers directly into our heads, we’re stuck with other approaches.

Unsurprisingly, the go-to method for developers who don’t want to write a research paper on text entry, but just need text entry in their VR applications right now, and don’t have good middleware to back them up, is a virtual 3D QWERTY keyboard controlled by a 2D or 3D input device (see Figure 1). It’s familiar, straightforward to implement, and it can even be used to enter text.

VR Movies

There has been a lot of discussion about VR movies in the blogosphere and forosphere (just to pick two random examples), and even on Wired, recently, with the tenor being that VR movies will be the killer application for VR. There are even downloadable prototypes and start-up companies.

But will VR movies actually ever work?

This is a tricky question, and we have to be precise. So let’s first define some terms.

When talking about “VR movies,” people are generally referring to live-action movies, i.e., the kind that is captured with physical cameras and shows real people (well, actors, anyway) and environments. But for the sake of this discussion, live-action and pre-rendered computer-generated movies are identical.

We’ll also have to define what we mean by “work.” There are several things that people might expect from “VR movies,” but not everybody might expect the same things. The first big component, probably expected by all, is panoramic view, meaning that a VR movie does not only show a small section of the viewer’s field of view, but the entire sphere surrounding the viewer — primarily so that viewers wearing a head-mounted display can freely look around. Most people refer to this as “360° movies,” but since we’re all thinking 3D now instead of 2D, let’s use the proper 3D term and call them “4π sr movies” (sr: steradian), or “full solid angle movies” if that’s easier.
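To put a number on "full solid angle," here is a minimal sketch that compares the solid angle of a circular viewing cone against the 4π sr of the full sphere. It uses the standard cone formula Ω = 2π(1 − cos θ), with θ the half-angle; the function names and the sample angles are illustrative assumptions, not taken from any particular camera or display.

```python
import math

FULL_SPHERE_SR = 4.0 * math.pi  # solid angle of the entire sphere, in steradians


def cone_solid_angle(full_angle_deg):
    """Solid angle (sr) of a circular cone with the given full apex angle in degrees."""
    half_angle = math.radians(full_angle_deg) / 2.0
    return 2.0 * math.pi * (1.0 - math.cos(half_angle))


def sphere_fraction(full_angle_deg):
    """Fraction of the full 4*pi sr sphere covered by that cone."""
    return cone_solid_angle(full_angle_deg) / FULL_SPHERE_SR


if __name__ == "__main__":
    # Hypothetical cone angles: a narrow view, a hemisphere, the full sphere.
    for angle in (90.0, 180.0, 360.0):
        print(f"{angle:5.0f} degree cone: {cone_solid_angle(angle):.3f} sr, "
              f"{100.0 * sphere_fraction(angle):.1f}% of the sphere")
```

A cone that is 180° wide is a hemisphere and covers only half of the sphere, which is why an angle in degrees alone undersells how much more a full-sphere movie has to capture.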

The second component, at least as important, is “3D,” which is of course a very fuzzy term itself. What “normal” people mean by 3D is that there is some depth to the movie, in other words, that different objects in the movie appear at different distances from the viewer, just like in reality. And here is where expectations will vary widely. Today’s “3D” movies (let’s call them “stereo movies” to be precise) treat depth as an independent dimension from width and height, due to the realities of stereo filming and projection. To present filmed objects at true depth and with undistorted proportions, every single viewer would have to have the same interpupillary distance, all movie screens would have to be the exact same size, and all viewers would have to sit in the same position relative to the screen. This previous post and video talk in great detail about what happens when that’s not the case (they are about head-mounted displays, but the principle and effects are the same). As a result, most viewers today would probably not complain about the depth in a VR movie being off and objects being distorted, but (and it’s a big but) as VR becomes mainstream, and more people experience proper VR, where objects are at 1:1 scale and undistorted, expectations will rise. Let me posit that in the long term, audiences will not accept VR movies with distorted depth.
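The dependence on interpupillary distance, screen size, and seating position can be made concrete with the standard similar-triangles model of a stereo screen. This is a minimal sketch under that simplified model (viewer centered in front of a flat screen); the function name, the 1% parallax figure, and the screen sizes are hypothetical values chosen for illustration.

```python
def perceived_distance(ipd_m, viewing_distance_m, parallax_m):
    """Distance from the viewer at which a stereo point appears, in meters.

    parallax_m is the on-screen separation between the right-eye and left-eye
    images of the point (positive = behind the screen, zero = on the screen).
    Similar triangles give: Z = D * e / (e - p).
    """
    if parallax_m >= ipd_m:
        # The two lines of sight are parallel or diverging: no finite depth.
        return float("inf")
    return viewing_distance_m * ipd_m / (ipd_m - parallax_m)


if __name__ == "__main__":
    # The same footage, with parallax equal to 1% of the screen width
    # (hypothetical), shown to different viewers on different screens.
    cases = [
        ("TV, 1 m wide, seen from 2.5 m, IPD 65 mm", 0.065, 2.5, 1.0),
        ("TV, 1 m wide, seen from 2.5 m, IPD 58 mm", 0.058, 2.5, 1.0),
        ("Cinema, 10 m wide, seen from 12 m, IPD 65 mm", 0.065, 12.0, 10.0),
    ]
    for label, ipd, distance, screen_width in cases:
        parallax = 0.01 * screen_width
        print(f"{label}: appears at {perceived_distance(ipd, distance, parallax):.2f} m")
```

The same pixel offset in the footage lands at a different apparent depth for each combination, and on a large enough screen the parallax exceeds the viewer's interpupillary distance altogether.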

Game Engines and Positional Head Tracking

Oculus recently presented the “Crystal Cove,” a version of the Rift head-mounted display with built-in optical tracking. The optical tracker is combined with the existing inertial tracker to provide a full 6-DOF (position and orientation) tracking solution at low latency. It is rumored that the Crystal Cove will be released as development kit mark 2 after this year’s Game Developers Conference.

This is great news. I’ve been saying for a long time that Oculus cannot afford to drop positional head tracking on developers at the last minute, because it will break several assumptions built into game engines and other VR software (but let’s talk about game engines here). I’m also happy because the Crystal Cove uses precisely the tracking technology that I predicted: active markers (LEDs) on the headset, and an external camera placed at a fixed position in the environment. I am also sad because I didn’t manage to finish my own after-market optical tracking add-on before Oculus demonstrated their new integrated technology, but that’s life.

So why does positional head tracking break existing games? Because for the first time, the virtual camera used to render a game world is no longer under sufficient control of the software. Let’s take a step back. In a standard, desktop, 3D game, the camera is entirely controlled by the software. The software sets it to some position and orientation determined by the game logic, the 3D engine renders the virtual world for that camera setup, and the result is the displayed image.
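The difference can be sketched in a few lines. In the desktop case the software's pose is the camera; with positional head tracking, the software only places the tracking space in the world, and the user's physical head movement is composed on top of it. This is a minimal illustration using plain 4x4 matrices; all names and numbers are hypothetical, and it is not how any particular engine structures its camera code.

```python
import math


def translation(x, y, z):
    """4x4 homogeneous translation matrix."""
    return [[1, 0, 0, x], [0, 1, 0, y], [0, 0, 1, z], [0, 0, 0, 1]]


def rotation_y(degrees):
    """4x4 homogeneous rotation about the vertical (y) axis."""
    a = math.radians(degrees)
    c, s = math.cos(a), math.sin(a)
    return [[c, 0, s, 0], [0, 1, 0, 0], [-s, 0, c, 0], [0, 0, 0, 1]]


def matmul(a, b):
    """Product of two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def position_of(pose):
    """Translation part of a 4x4 pose matrix."""
    return (pose[0][3], pose[1][3], pose[2][3])


# Desktop game: game logic alone decides where the camera is.
game_pose = matmul(translation(10.0, 1.7, -5.0), rotation_y(90.0))
desktop_camera = game_pose

# Head-tracked game: the same pose now only anchors the tracking space in the
# world. The tracker reports the head relative to that space (here: the user
# moved their head 20 cm along the tracking space's x axis).
tracked_head_pose = translation(0.2, 0.0, 0.0)
tracked_camera = matmul(game_pose, tracked_head_pose)

if __name__ == "__main__":
    print("desktop camera position:", position_of(desktop_camera))
    print("tracked camera position:", position_of(tracked_camera))
```

The second position differs from the first by an offset the game never asked for, which is exactly the part of the camera the software no longer controls.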

The Holovision Kickstarter “scam”

Update: Please tear your eyes away from the blue lady and also read this follow-up post. It turns out things are worse than I thought. Now back to your regularly scheduled entertainment.

I somehow missed this when it was hot a few weeks or so ago, but I just found out about an interesting Kickstarter project: HOLOVISION — A Life Size Hologram. Don’t bother clicking the link, the project page has been taken down following a DMCA complaint and might not ever be up again.

Why do I think it’s worth talking about? Because, while there is an actual design for something called Holovision, and that design is theoretically feasible, and possibly even practical, the public’s impression of the product advertised on Kickstarter is decidedly not. The concept imagery associated with the Kickstarter project presents this feasible technology in a way that (intentionally?) taps into people’s misconceptions about holograms (and I’m talking about the “real” kind of holograms, those involving lasers and mirrors and beam splitters). In other words, it might not be a scam per se, and it might even be unintentional, but it is definitely creating a false impression that might lead to very disappointed backers.

Vrui on (in?) Oculus Rift

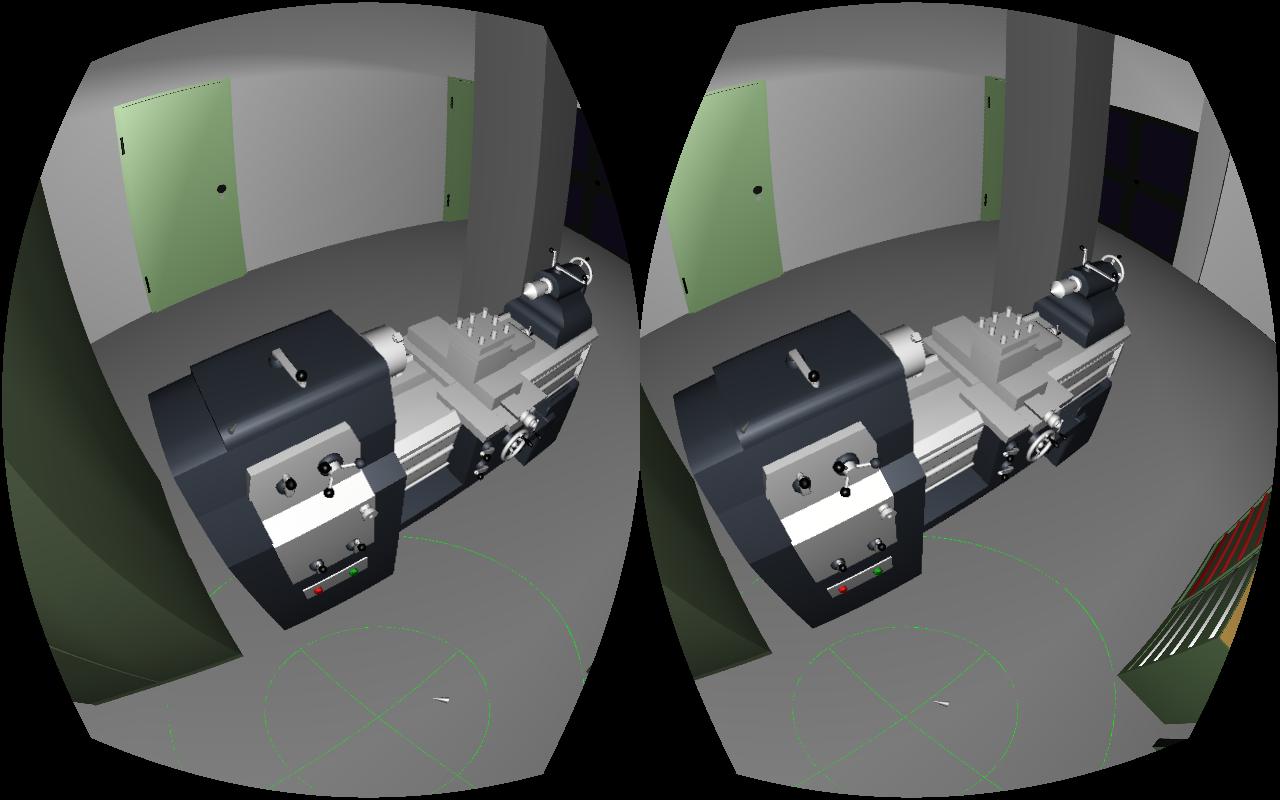

I wrote about my first impressions of the Oculus Rift developer kit back in April, and since then I’ve been working (on and off) on getting it fully and natively supported in Vrui (see Figure 1 for proof that it works). Given that Vrui’s somewhat insane flexibility is a major point of pride for me, what was it that I actually had to create to support the Rift? Turns out, not all that much: a driver for the Rift’s built-in inertial tracking unit and a post-processing filter to correct for the Rift’s lens distortion were all it took (more on that later). So why did it take me this long? For one, I was mostly working on other things and only spent a few hours here and there, but more importantly, the Rift is not just a new head-mounted display (HMD), but a major shift in how HMDs are (or will be) used.

The Kinect 2.0

Details about the next version of Microsoft’s Kinect, to be bundled with the upcoming Xbox One, are slowly emerging. After an initial leak of preliminary specifications on February 20th, 2013, finally some official data are to be had. This article about the upcoming next Kinect-for-Windows mentions “Microsoft’s proprietary Time-of-Flight technology,” which is an entirely different method to sense depth than the current Kinect’s structured light approach. That’s kind of a big deal.
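For a sense of what time-of-flight sensing involves, here is a minimal sketch of the textbook principle only: light travels to the object and back, so distance is half the round-trip time multiplied by the speed of light. It says nothing about how Microsoft's proprietary implementation actually measures that time; the function names and sample distances are assumptions for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the meter


def distance_from_round_trip(round_trip_s):
    """Distance (m) to an object, given the light's round-trip time in seconds."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time(distance_m):
    """Round-trip time (s) for light to reach an object and return."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # Hypothetical living-room distances.
    for d in (0.5, 1.5, 4.0):
        print(f"{d:.1f} m -> round trip of {round_trip_time(d) * 1e9:.2f} ns")
    # Timing difference between two surfaces 1 cm apart in depth.
    print(f"1 cm of depth -> {round_trip_time(0.01) * 1e12:.1f} ps")
```

The timescales involved are nanoseconds for room-scale distances and tens of picoseconds for centimeter-level depth differences, which is why this is a very different engineering problem from projecting and observing a structured light pattern.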

Figure 1: The Xbox One. The box on top, the one with the lens, is probably the Kinect2. They should have gone with a red, glowing lens.

On the road for VR: zSpace developers conference

I went to zCon 2013, the zSpace developers conference, held in the Computer History Museum in Mountain View yesterday and today. As I mentioned in my previous post about the zSpace holographic display, my interest in it is as an alternative to our current line of low-cost holographic displays, which require assembly and careful calibration by the end user before they can be used. The zSpace, on the other hand, is completely plug&play: its optical trackers (more on them below) are integrated into the display screen itself, so they can be calibrated at the factory and work out-of-the-box.

Figure 1: The zSpace holographic display and what it would really look like when seen from this point of view.

So I drove around the bay to get a close look at the zSpace, to determine its viability for my purpose. Bottom line, it will work (with some issues, more on that below). My primary concerns were threefold: head tracking precision and latency, stylus tracking precision and latency, and stereo quality (i.e., amount of crosstalk between the eyes).

First impressions from the Oculus Rift dev kit

My friend Serban got his Oculus Rift dev kit in the mail today, and he called me over to check it out. I will hold back a thorough evaluation until I get the Rift supported natively in my own VR software, so that I can run a direct head-to-head comparison with my other HMDs, and also my screen-based holographic display systems (the head-tracked 3D TVs, and of course the CAVE), using the same applications. Specifically, I will use the Quake III Arena viewer to test the level of “presence” provided by the Rift. As I mentioned in my previous post, there are some very specific physiological effects brought out by that old chestnut, and my other HMDs are severely lacking in that department; I hope that the Rift will push it close to the level of the CAVE. But here are some early impressions.

Behind the scenes: “Virtual Worlds Using Head-mounted Displays”

“Virtual Worlds Using Head-mounted Displays” is the most complex video I’ve made so far, and I figured I should explain how it was done (maybe as a response to people who might say I “cheated”).