“It can’t be comfortable or healthy to stare at a screen a few inches in front of your eyes.”

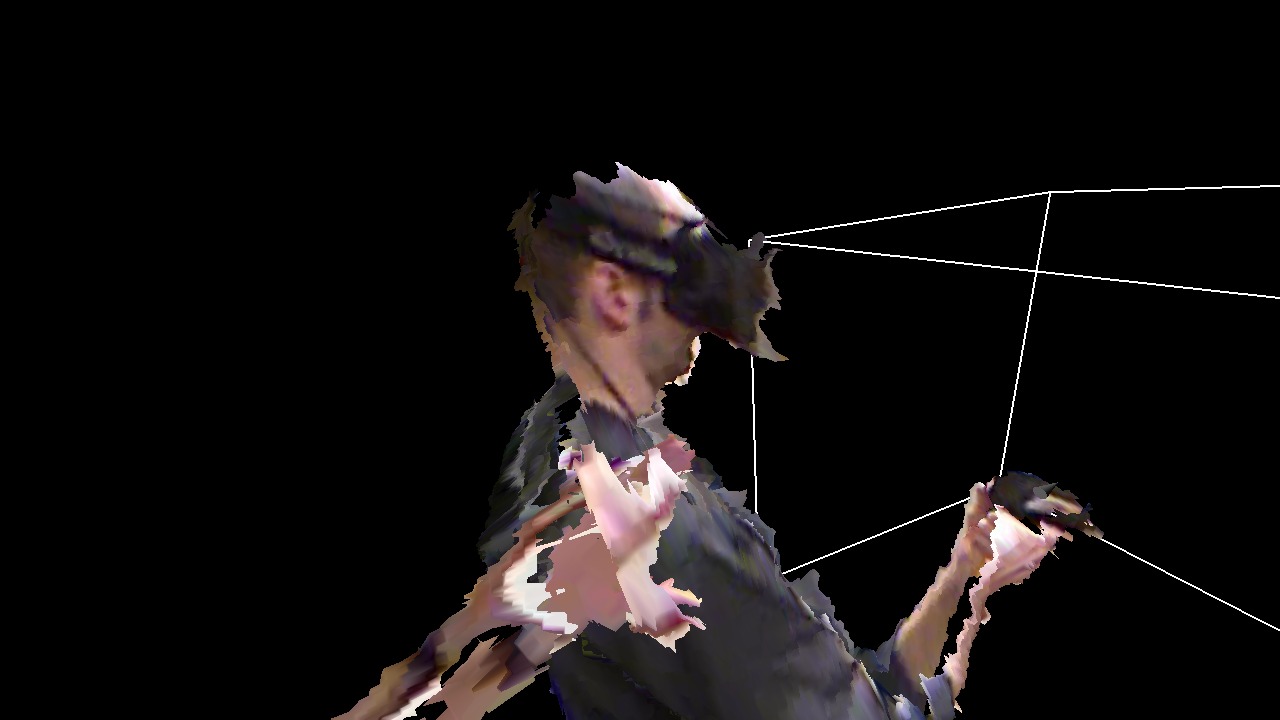

The popularity of Google Cardboard and the upcoming commercial releases of the Oculus Rift, HTC Vive, and other modern head-mounted displays (HMDs) have raised interest in virtual reality and VR devices among people who have never been exposed to, or had reason to care about, VR before. Combined with the fact that VR, as a medium, is fundamentally different from other media with which it often gets lumped in, such as 3D cinema or 3D TV, this leads to a number of common misunderstandings and frequently asked questions. Therefore, I am planning to write a series of articles addressing these questions one at a time.

First up: How is it possible to see anything on a screen that is only a few inches in front of one’s face?

Short answer: In HMDs, there are lenses between the screens (or screen halves) and the viewer’s eyes to solve exactly this problem. These lenses project the screens out to a distance where they can be viewed comfortably (for example, in the Oculus Rift CV1, the screens are rumored to be projected to a distance of two meters). This also means that, if you need glasses or contact lenses to clearly see objects several meters away, you will need to wear your glasses or lenses in VR.
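To make the "projecting" part concrete, here is a minimal sketch based on the ideal thin-lens equation, 1/f = 1/d_o + 1/d_i. When the screen sits slightly closer to the lens than the focal length, the lens forms a virtual image much farther away. The focal length and screen distance below are made-up illustrative values, not the specifications of any real headset, and real HMD lenses are not ideal thin lenses.

```python
def virtual_image_distance(focal_length_mm: float, screen_distance_mm: float) -> float:
    """Distance (in mm) of the virtual image formed by an ideal thin lens.

    Solves 1/f = 1/d_o + 1/d_i for the magnitude of d_i in the case where
    the screen lies between the lens and its focal point (d_o < f), which
    is the configuration that yields a magnified virtual image.
    """
    if not 0 < screen_distance_mm < focal_length_mm:
        raise ValueError("screen must sit between the lens and its focal point")
    return (focal_length_mm * screen_distance_mm
            / (focal_length_mm - screen_distance_mm))


# Hypothetical values, chosen only for illustration.
FOCAL_LENGTH_MM = 40.0
SCREEN_DISTANCE_MM = 39.2

distance_m = virtual_image_distance(FOCAL_LENGTH_MM, SCREEN_DISTANCE_MM) / 1000.0
print(f"virtual image at about {distance_m:.2f} m")
```

With these assumed numbers, a screen less than 4 cm from the lens appears at roughly two meters. The result is very sensitive to the gap between the screen and the focal point: the closer the screen sits to the focal point, the farther away its image appears.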

Now for the long answer.