Boy, is my face red. I just uploaded two videos about intrinsic Kinect calibration to YouTube, and wrote two blog posts about intrinsic and extrinsic calibration, respectively, and now I find out that the factory calibration data I’ve always suspected was stored in the Kinect’s non-volatile RAM has actually been reverse-engineered. With the official Microsoft SDK out, that really should not have come as a surprise. Oh well, my excuse is that I’ve been focusing on other things lately.

So, how good is it? A bit too early to tell, because some bits and pieces are still not understood, but here’s what I know already. As I mentioned in the post on intrinsic calibration, there are several required pieces of calibration data:

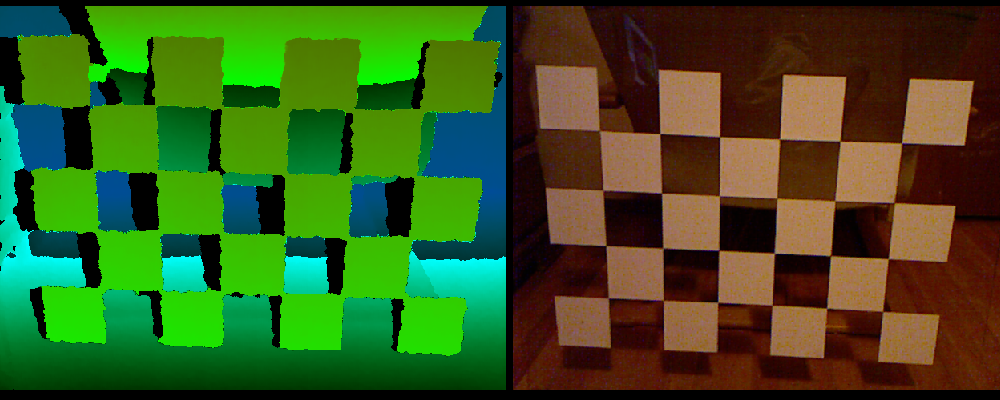

- 2D lens distortion correction for the color camera.

- 2D lens distortion correction for the virtual depth camera.

- Non-linear depth correction (caused by IR camera lens distortion) for the virtual depth camera.

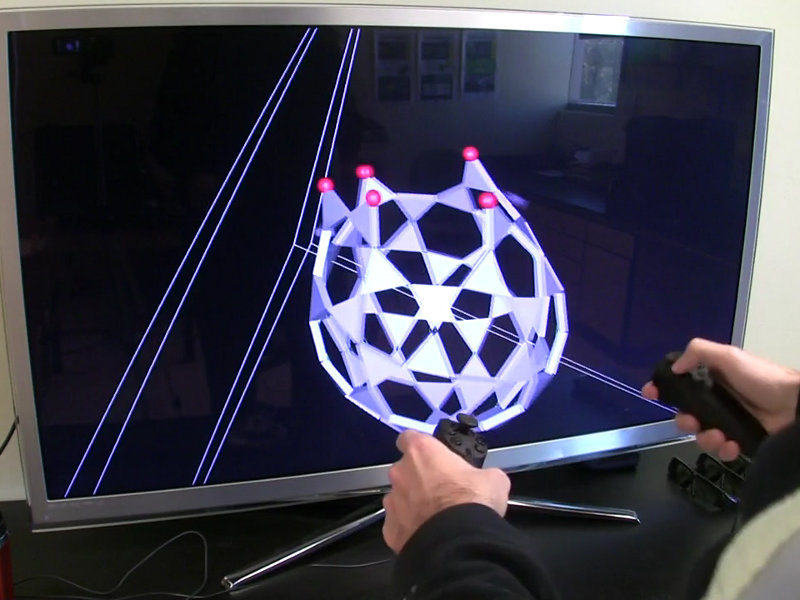

- Conversion formula from (depth-corrected) raw disparity values, i.e., the values stored in the Kinect’s depth frames, to camera-space Z values.

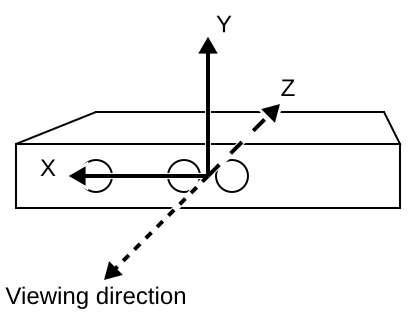

- Unprojection matrix for the virtual depth camera, to map depth pixels out into camera-aligned 3D space.

- Projection matrix to map lens-corrected color pixels onto the unprojected depth image.
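To make the list a bit more concrete, here is a minimal Python sketch of how these pieces might chain together for a single depth pixel. The model forms are my assumptions, not necessarily what the reverse-engineered factory data encodes: a two-coefficient radial lens distortion model, a hyperbolic disparity-to-Z fit `Z = 1 / (a*d + b)`, and plain pinhole unprojection and projection matrices. All function names, coefficients, and matrix entries are hypothetical. The non-linear per-pixel depth correction is left out entirely because its form isn't pinned down here.

```python
# Hypothetical sketch of the Kinect calibration pipeline.
# ASSUMED model forms: radial lens distortion (k1, k2), hyperbolic
# disparity-to-Z fit, pinhole unprojection/projection. All parameter
# values are made up and are NOT the Kinect's factory calibration.
from dataclasses import dataclass


@dataclass
class Intrinsics:
    fx: float  # focal length in pixels, x
    fy: float  # focal length in pixels, y
    cx: float  # principal point, x
    cy: float  # principal point, y


def distort(xn: float, yn: float, k1: float, k2: float):
    """Apply assumed radial distortion to normalized image coordinates."""
    r2 = xn * xn + yn * yn
    f = 1.0 + k1 * r2 + k2 * r2 * r2
    return xn * f, yn * f


def undistort(xd: float, yd: float, k1: float, k2: float, iters: int = 20):
    """Lens distortion correction: invert distort() by fixed-point iteration."""
    xu, yu = xd, yd
    for _ in range(iters):
        r2 = xu * xu + yu * yu
        f = 1.0 + k1 * r2 + k2 * r2 * r2
        xu, yu = xd / f, yd / f
    return xu, yu


def disparity_to_z(d: float, a: float, b: float) -> float:
    """Assumed hyperbolic model Z = 1 / (a*d + b), d = corrected raw disparity."""
    denom = a * d + b
    if denom <= 0.0:
        raise ValueError("disparity outside the valid range of this model")
    return 1.0 / denom


def unproject(u: float, v: float, z: float, k: Intrinsics):
    """Map lens-corrected depth pixel (u, v) at depth z to a camera-space point."""
    return ((u - k.cx) * z / k.fx, (v - k.cy) * z / k.fy, z)


def project(p, P):
    """Apply a 3x4 projection matrix P (nested lists) to 3D point p."""
    ph = (p[0], p[1], p[2], 1.0)
    h = [sum(P[r][c] * ph[c] for c in range(4)) for r in range(3)]
    return h[0] / h[2], h[1] / h[2]


if __name__ == "__main__":
    depth_k = Intrinsics(fx=580.0, fy=580.0, cx=320.0, cy=240.0)  # hypothetical
    # Hypothetical color camera: identity rotation, 2.5 cm baseline along x.
    P_color = [[520.0, 0.0, 320.0, 520.0 * 0.025],
               [0.0, 520.0, 240.0, 0.0],
               [0.0, 0.0, 1.0, 0.0]]
    z = disparity_to_z(600.0, a=-0.003, b=3.3)  # hypothetical fit coefficients
    p = unproject(400.0, 300.0, z, depth_k)
    print(p, project(p, P_color))
```

Fixed-point iteration is used for the lens correction because a polynomial radial model has no closed-form inverse in general; for mild distortion the iteration should converge within a few steps.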