In my detailed how-to guide on installing and configuring Vrui for Oculus Rift and Razer Hydra, I did not talk about installing any actual applications (because I hadn’t released Vrui-3.0-compatible packages yet). Those are out now, so here we go.

Kinect

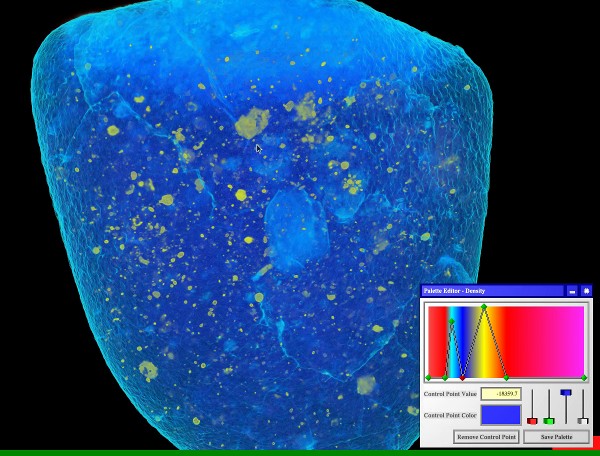

If you happen to own a Kinect for Xbox (Kinect for Windows won’t work), you might want to install the Kinect 3D Video package early on. It can capture 3D (holographic, not stereoscopic) video from one or more Kinects, and either play it back as freely-manipulable virtual holograms, or it can, after calibration, produce in-system overlays of the real world (or both). If you already have Vrui up and running, installation is trivial.